
InteractingWithTheTransport

C++ Guide Series

Architecture | Knowledge Base | Networking | Containers | Threads | Optimizations | KaRL | Encryption | Checkpointing | Knowledge Performance | Logging

The main entry point into the MADARA transport layer is the QoSTransportSettings class. The QoSTransportSettings class contains dozens of network-specific settings that govern basic configuration, quality-of-service, and filtering.

MADARA Transport Layer

Table of Contents

- Understanding the QoS Transport Settings class
- Understanding When Knowledge is Sent Over the Network
- More Information

Creating a networked transport in MADARA generally requires setting QoSTransportSettings.type (the network transport type) and either a list of hosts (for UDP, Multicast, Broadcast, and ZeroMQ) or domains (which map to topics in DDS transports).

UDP requires a hosts vector whose first entry is the ip:port of this agent (where it binds, and how it is identified to others in the originator field of messages), followed by the ip:port of every peer. Multicast requires a valid multicast ip and port in the first entry of the hosts vector. Similarly, broadcast requires a valid broadcast ip (for your machine's subnet) and port in the first entry of the hosts vector.

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a UDP transport with ourself at port 45000 and a
  // second agent at port 45001 on localhost (127.0.0.1)
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("127.0.0.1:45000");
  settings.hosts.push_back ("127.0.0.1:45001");
  settings.type = madara::transport::UDP;

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agent know that we are ready
  // (KaRL expands {.id} to the value of .id, i.e., agent0.ready = 1)
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```
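The hosts-vector convention for UDP can be illustrated with a small standalone sketch. It does not use MADARA at all: `Endpoint`, `parse_endpoint`, `UdpLayout`, and `split_udp_hosts` are hypothetical helpers that only show how the first entry (this agent's bind address and identity) is distinguished from the remaining peer entries. MADARA does its own parsing internally, and this is not that code.

```cpp
#include <cstddef>
#include <cstdint>
#include <string>
#include <vector>

// One "ip:port" entry from a hosts vector (hypothetical helper type).
struct Endpoint
{
  std::string ip;
  std::uint16_t port = 0;
};

// Splits "ip:port" at the last colon. Returns false if there is no colon,
// the ip part is empty, or the port is not a number in 1..65535.
bool parse_endpoint (const std::string & entry, Endpoint & out)
{
  const std::size_t colon = entry.rfind (':');
  if (colon == std::string::npos || colon == 0)
    return false;

  const std::string digits = entry.substr (colon + 1);
  if (digits.empty () || digits.size () > 5)
    return false;

  unsigned long port = 0;
  for (char c : digits)
  {
    if (c < '0' || c > '9')
      return false;
    port = port * 10 + static_cast<unsigned long> (c - '0');
  }
  if (port == 0 || port > 65535)
    return false;

  out.ip = entry.substr (0, colon);
  out.port = static_cast<std::uint16_t> (port);
  return true;
}

// hosts[0] is this agent (bind address and identity); the rest are peers.
struct UdpLayout
{
  Endpoint self;
  std::vector<Endpoint> peers;
};

bool split_udp_hosts (const std::vector<std::string> & hosts, UdpLayout & out)
{
  if (hosts.empty () || !parse_endpoint (hosts[0], out.self))
    return false;

  out.peers.clear ();
  for (std::size_t i = 1; i < hosts.size (); ++i)
  {
    Endpoint peer;
    if (!parse_endpoint (hosts[i], peer))
      return false;
    out.peers.push_back (peer);
  }
  return true;
}
```

For the example above, `{"127.0.0.1:45000", "127.0.0.1:45001"}` splits into self = 127.0.0.1:45000 and a single peer at port 45001.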

UDP Registry is a special UDP transport that dynamically discovers hosts that join or leave after deployment. To use the UDP Registry transport, you need a madara_registry running (located at $MADARA_ROOT/bin/madara_registry). The UDP registry should work over 3G and 4G networks and from behind NATs, as long as the NAT maintains the UDP host:port mapping for the madara_registry binding.

If the madara_registry goes offline, agents that have already talked with it will continue to use the last hosts they knew of. When the madara_registry comes back online (e.g., when you restart it), agents should resume normal operation without further intervention.

```cpp
#include "madara/knowledge/KnowledgeBase.h"
#include "madara/filters/EndpointClear.h"

int main (int argc, char ** argv)
{
  // setup a UDP registry client transport
  madara::transport::QoSTransportSettings settings;

  // assume the madara_registry is bound to 127.0.0.1:45000
  settings.hosts.push_back ("127.0.0.1:45000");
  settings.type = madara::transport::REGISTRY_CLIENT;

  // to keep things clean, use the EndpointClear filter
  // (write_domain replaced the older domains member as of 2.9.17)
  madara::filters::EndpointClear endpointclear (
    "domain." + settings.write_domain + ".endpoints");

  // add the filter for processing received messages
  settings.add_receive_filter (&endpointclear);

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agents know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```
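The offline fallback described above (keep using the last known hosts while the registry is unreachable) can be modeled in a few lines. `LastKnownHosts` is a hypothetical class for illustration only; it is not how MADARA's REGISTRY_CLIENT transport is implemented internally.

```cpp
#include <string>
#include <vector>

// Hypothetical model: remember the host list from the most recent successful
// registry reply and keep using it while the registry is unreachable.
class LastKnownHosts
{
public:
  // Call after each registry poll; `reachable` is false if there was no reply.
  void on_registry_poll (bool reachable, const std::vector<std::string> & hosts)
  {
    if (reachable)
      hosts_ = hosts;  // registry is online: adopt its current view
    // otherwise keep the hosts we already knew about
  }

  const std::vector<std::string> & hosts () const { return hosts_; }

private:
  std::vector<std::string> hosts_;
};
```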

Multicast is a one-to-many or many-to-many transport protocol that allows hosts to subscribe to multicast IP addresses, much like topics. Each subscriber to a group receives its own copy of every message sent to that group (delivery is still best-effort, as with any UDP traffic), so multicast works for both intrahost and interhost communication.

You can find the acceptable multicast IP range that you can use here:

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a Multicast transport at 239.255.0.1:4150
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agents know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```
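Before putting an address in the first hosts entry, it can help to check that it really is an IPv4 multicast group. IPv4 multicast addresses occupy 224.0.0.0/4 (224.0.0.0 through 239.255.255.255), and 239.0.0.0/8 is the administratively scoped block (RFC 2365) that the 239.255.0.1 examples in this guide fall into. The helper names below are hypothetical and independent of MADARA.

```cpp
#include <cstdint>
#include <sstream>
#include <string>

// Parses dotted-quad IPv4 text into a 32-bit value; false if malformed.
bool parse_ipv4 (const std::string & text, std::uint32_t & out)
{
  std::istringstream in (text);
  std::uint32_t value = 0;
  for (int i = 0; i < 4; ++i)
  {
    int octet = -1;
    if (!(in >> octet) || octet < 0 || octet > 255)
      return false;
    char dot = 0;
    if (i < 3 && (!(in >> dot) || dot != '.'))
      return false;
    value = (value << 8) | static_cast<std::uint32_t> (octet);
  }
  char extra = 0;
  if (in >> extra)  // reject trailing characters such as "239.255.0.1x"
    return false;
  out = value;
  return true;
}

// 224.0.0.0/4: the top four bits are 1110.
bool is_ipv4_multicast (std::uint32_t addr)
{
  return (addr & 0xF0000000u) == 0xE0000000u;
}

// 239.0.0.0/8: administratively scoped multicast.
bool is_admin_scoped (std::uint32_t addr)
{
  return (addr >> 24) == 239u;
}

// Checks a hosts entry of the form "ip:port" (the port is ignored here).
bool is_multicast_host (const std::string & host)
{
  std::uint32_t addr = 0;
  return parse_ipv4 (host.substr (0, host.find (':')), addr)
    && is_ipv4_multicast (addr);
}
```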

Broadcast is a one-to-many or many-to-many transport protocol that allows a host to send UDP datagrams to every host on an IP subnet. UDP broadcast does not guarantee that every agent on a host gets a copy of a datagram sent to the broadcast IP. Instead, the first subscriber takes the message from the OS, and the message is then discarded. This makes broadcast a poor fit for intrahost communication among co-located processes. It is still very useful for interhost communication between hosts/systems and is supported by most routers.

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a Broadcast transport at "192.168.1.255:15000"
  // The broadcast ip needs to be appropriate for your IP subnet mask
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("192.168.1.255:15000");
  settings.type = madara::transport::BROADCAST;

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agents know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```
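The broadcast IP has to match your subnet. The directed broadcast address of a subnet is the host's IP with every host bit set, i.e. `ip | ~mask`. This standalone sketch (hypothetical helper names, no MADARA dependency) computes it from an IP and a dotted subnet mask.

```cpp
#include <cstdint>
#include <sstream>
#include <string>

// Parses dotted-quad IPv4 text into a 32-bit value; false if malformed.
bool parse_ipv4 (const std::string & text, std::uint32_t & out)
{
  std::istringstream in (text);
  std::uint32_t value = 0;
  for (int i = 0; i < 4; ++i)
  {
    int octet = -1;
    if (!(in >> octet) || octet < 0 || octet > 255)
      return false;
    char dot = 0;
    if (i < 3 && (!(in >> dot) || dot != '.'))
      return false;
    value = (value << 8) | static_cast<std::uint32_t> (octet);
  }
  char extra = 0;
  if (in >> extra)  // reject trailing characters
    return false;
  out = value;
  return true;
}

std::string format_ipv4 (std::uint32_t addr)
{
  std::ostringstream out;
  out << (addr >> 24) << '.' << ((addr >> 16) & 0xFF) << '.'
      << ((addr >> 8) & 0xFF) << '.' << (addr & 0xFF);
  return out.str ();
}

// Keeps the network bits of `ip` and sets every host bit to 1.
// Returns an empty string if either input is malformed.
std::string broadcast_address (const std::string & ip, const std::string & mask)
{
  std::uint32_t addr = 0;
  std::uint32_t netmask = 0;
  if (!parse_ipv4 (ip, addr) || !parse_ipv4 (mask, netmask))
    return "";
  return format_ipv4 (addr | ~netmask);
}
```

For a machine at 192.168.1.37 with mask 255.255.255.0, this yields 192.168.1.255, the address used in the example above.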

ZeroMQ is a transport maintained by the open source community for high-performance, low-latency, highly connected applications. It performs especially well for intrahost communication, where it tends to be at least as fast as other IPC mechanisms between co-located processes on the same machine. ZeroMQ is not really meant for outdoor, edge environments; it was created for applications like stock exchanges, where network connectivity is highly available.

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a reliable TCP-based ZMQ transport
  madara::transport::QoSTransportSettings settings;
  settings.type = madara::transport::ZMQ;
  settings.hosts.push_back ("tcp://127.0.0.1:30001");
  settings.hosts.push_back ("tcp://127.0.0.1:30000");

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agent know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```

ZeroMQ also provides an interprocess communication (ipc://) transport for processes on the same host. Developers can use it when a transport should not be exposed to external hosts and is only expected to be used between processes on the same machine.

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a reliable IPC-based ZMQ transport
  madara::transport::QoSTransportSettings settings;
  settings.type = madara::transport::ZMQ;
  settings.hosts.push_back ("ipc:///tmp/my_file_0");
  settings.hosts.push_back ("ipc:///tmp/my_file_1");

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agent know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```

To use the Open Splice transport, you must first install Open Splice DDS. Instructions are available on the [InstallationFromSource] wiki page.

Example of Open Splice DDS transport constructor

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a reliable Open Splice DDS transport
  madara::transport::QoSTransportSettings settings;
  settings.type = madara::transport::SPLICE;
  settings.reliability = madara::transport::RELIABLE;

  // use the transport constructor
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // set our id to 0 and let the other agents know that we are ready
  knowledge.set (".id", madara::knowledge::KnowledgeRecord::Integer (0));
  knowledge.evaluate ("agent{.id}.ready = 1");

  return 0;
}
```

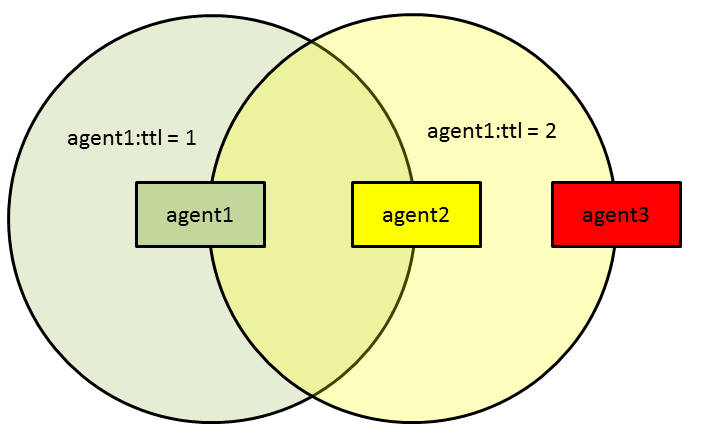

When using real robots in a wide area, it may not be possible to reach all neighbors with a single message send. To help developers whose problems require multi-hop message routing, MADARA supports rebroadcast TTLs set on the sender side. MADARA also lets agents opt out of participating in rebroadcasts; in fact, all transports opt out by default.

Example routing between agent1 and agent3 that is not possible without rebroadcasts

Example of sending a packet with a rebroadcast ttl of 3

```cpp
// set rebroadcast ttl to 3
madara::transport::QoSTransportSettings settings;
settings.set_rebroadcast_ttl (3);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```

Example of enabling participation in rebroadcasts

```cpp
// enable participation in rebroadcasts up to 255 ttl (default)
// Can adjust this downward by passing a parameter between 0 and 255
madara::transport::QoSTransportSettings settings;
settings.enable_participant_ttl ();

// Our own packets will have a ttl of 2
settings.set_rebroadcast_ttl (2);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```
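To see why a TTL lets agent1 reach agent3 in the routing example, consider a simplified flooding model in which each agent hears only its direct neighbors, and an agent that participates in rebroadcasts forwards a packet once with its TTL decremented. This is a hypothetical model (`reached_agents` is not a MADARA function), and it ignores MADARA details such as how a sender's TTL interacts with a participant's maximum TTL.

```cpp
#include <cstddef>
#include <queue>
#include <set>
#include <utility>
#include <vector>

// links[i] lists the agents that agent i reaches with one send.
// participates[i] is true if agent i rebroadcasts (opted in).
// Returns every agent that ends up with the update.
std::set<int> reached_agents (const std::vector<std::vector<int>> & links,
                              const std::vector<bool> & participates,
                              int origin, int ttl)
{
  std::set<int> reached{origin};
  // each entry is (agent that sends, ttl carried by that send)
  std::queue<std::pair<int, int>> sends;
  sends.push ({origin, ttl});

  while (!sends.empty ())
  {
    const std::pair<int, int> current = sends.front ();
    sends.pop ();
    for (int neighbor : links[static_cast<std::size_t> (current.first)])
    {
      if (!reached.insert (neighbor).second)
        continue;  // already has the update, so it does not forward again
      if (current.second > 0 && participates[static_cast<std::size_t> (neighbor)])
        sends.push ({neighbor, current.second - 1});
    }
  }
  return reached;
}
```

In a chain agent1 (0) to agent2 (1) to agent3 (2), a TTL of 0 stops at agent2, a TTL of 1 reaches agent3, and if agent2 keeps the default opt-out, agent3 is never reached regardless of the TTL.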

The MADARA architecture monitors two different types of bandwidth usage: sending and receiving. Setting a limit for either one imposes a hard limit that does not differentiate between priorities of information. After each packet is sent, the bandwidth counters are updated, and if the bytes-per-second rate over the past 10 seconds is higher than the limit you set, all packets are dropped until the rate dips back below the limit. These limiters do not currently look at the size of the packet being sent, so if you are at 99,500 B/s and your limit is 100,000 B/s, the transport will still send the next packet (even if it is 1 MB), update the bandwidth counter, and then send nothing more until the rate over the past 10 seconds falls back below the limit.

For a more flexible bandwidth option that you can configure, see Bandwidth Filters.
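The hard limit described above can be modeled as a sliding 10-second window of sent bytes. `SendLimiter` is a hypothetical class, not MADARA's implementation; it reproduces the documented behavior that only the rate before a send is checked, so the size of the next packet is never considered.

```cpp
#include <cstdint>
#include <deque>
#include <utility>

class SendLimiter
{
public:
  explicit SendLimiter (std::uint64_t limit_bytes_per_second,
                        std::int64_t window_seconds = 10)
    : limit_ (limit_bytes_per_second), window_ (window_seconds) {}

  // Returns true and records `bytes` if a packet may be sent at time `now`
  // (in seconds). Only the rate *before* this packet is checked, so a packet
  // of any size is allowed while the rate is not above the limit.
  bool try_send (std::int64_t now, std::uint64_t bytes)
  {
    expire (now);
    if (rate () > limit_)
      return false;  // over the limit: drop the packet
    history_.push_back ({now, bytes});
    total_ += bytes;
    return true;
  }

  // Average bytes per second over the window.
  std::uint64_t rate () const
  {
    return total_ / static_cast<std::uint64_t> (window_);
  }

private:
  // Forget sends that have aged out of the window.
  void expire (std::int64_t now)
  {
    while (!history_.empty () && history_.front ().first <= now - window_)
    {
      total_ -= history_.front ().second;
      history_.pop_front ();
    }
  }

  std::uint64_t limit_;
  std::int64_t window_;
  std::uint64_t total_ = 0;
  std::deque<std::pair<std::int64_t, std::uint64_t>> history_;
};
```

With a 100,000 B/s limit, sending 995,000 bytes at t=0 leaves the rate at 99,500 B/s; a 1,000,000-byte packet at t=1 is still allowed, after which every send is refused until those entries age out of the window at t=11.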

Deadline enforcement is concerned with enforcing latency deadlines between reasoning entities on the network. It requires some type of time synchronization protocol between agents in the network, preferably accurate to within a second, to be useful to MADARA entities.

Enforcing send bandwidth limits

This type of enforcement is useful if you want to make sure no agent is using more than a certain limit (e.g., 100KB/s) individually.

Example of enforcing a send bandwidth limit

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a Multicast transport at 239.255.0.1:4150
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // set the send bandwidth limit to 100,000 bytes per second.
  settings.set_send_bandwidth_limit (100000);

  // ...

  return 0;
}
```

Enforcing send bandwidth limits based on received bandwidth

This type of enforcement is useful if you want to make sure the agent is not violating a collective bandwidth limit (e.g., 2MB/s) and is based on the amount of data received per second over the past 10s.

Example of enforcing a total bandwidth limit

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a Multicast transport at 239.255.0.1:4150
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // set the total bandwidth limit to 100,000 bytes per second.
  settings.set_total_bandwidth_limit (100000);

  // ...

  return 0;
}
```

Note that even though this is keying off received bandwidth, it affects messages being sent (i.e., enforcement is applied when the agent attempts to send or rebroadcast knowledge to the network).

Deadline enforcement aims to discard received packets that are too old to be useful to agent state reasoning. Handling old packets can significantly impede performance in an agent-saturated network, so clearing your queues quickly can aid the agent network.

Example of enforcing a transport latency deadline

```cpp
#include "madara/knowledge/KnowledgeBase.h"

int main (int argc, char ** argv)
{
  // setup a Multicast transport at 239.255.0.1:4150
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // set the deadline to 10 seconds
  settings.set_deadline (10);

  // ...

  return 0;
}
```
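The deadline check itself is a comparison between the sender's timestamp and the local clock, which is why synchronized clocks matter. The sketch below is a hypothetical model (`ReceivedPacket` and `enforce_deadline` are illustrative names, not MADARA API) that clears stale packets from a receive queue; whether MADARA treats a latency exactly equal to the deadline as on time is an assumption here.

```cpp
#include <cstdint>
#include <string>
#include <vector>

// A received packet stamped with the sender's clock (seconds).
struct ReceivedPacket
{
  std::string originator;
  std::int64_t sent_time;
};

// Keeps only packets whose latency (now - sent_time) is within the deadline;
// older packets are discarded instead of being applied.
std::vector<ReceivedPacket> enforce_deadline (
  const std::vector<ReceivedPacket> & queue, std::int64_t now,
  std::int64_t deadline_seconds)
{
  std::vector<ReceivedPacket> fresh;
  for (const auto & packet : queue)
    if (now - packet.sent_time <= deadline_seconds)
      fresh.push_back (packet);
  return fresh;
}
```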

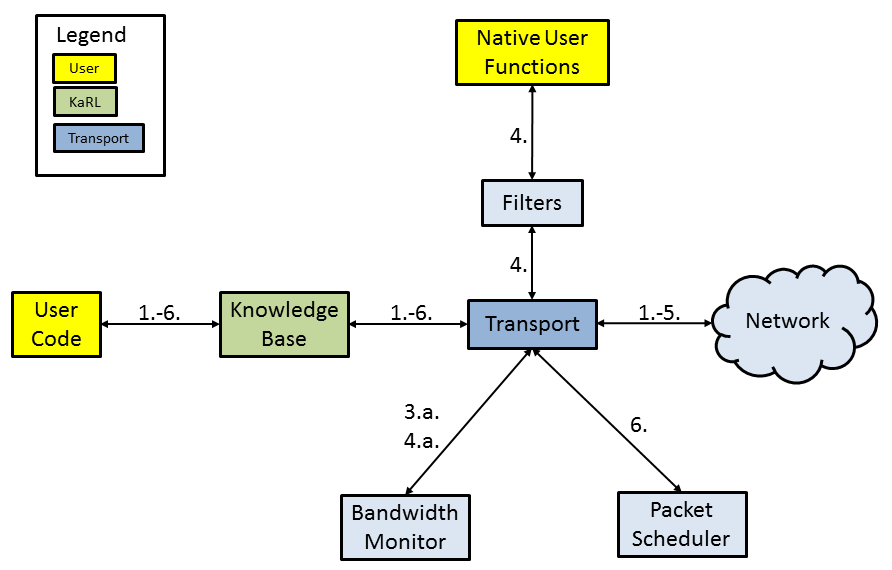

User callbacks can also be inserted into the transport layer to modify payloads. The MADARA transport system allows users to insert callbacks into three key operations: receive, send, and rebroadcast.

Filter callbacks for send/receive/rebroadcast events are given a number of arguments that are relevant to the filtering operation. As of version 1.1.9, these arguments include the following:

- args[0]: The knowledge record that the filter is acting upon
- args[1]: The name of the knowledge record, if applicable ("" if unnamed, but this should never happen)
- args[2]: The type of operation calling the filter (integer valued). Valid types are:
  - madara::transport::TransportContext::IDLE_OPERATION (should never see)
  - madara::transport::TransportContext::SENDING_OPERATION (transport is trying to send the record)
  - madara::transport::TransportContext::RECEIVING_OPERATION (transport has received the record and is ready to apply the update)
  - madara::transport::TransportContext::REBROADCASTING_OPERATION (transport is trying to rebroadcast the record; only happens if rebroadcast is enabled in Transport Settings)
- args[3]: Bandwidth used while sending through this transport, measured in bytes per second
- args[4]: Bandwidth used while receiving from this transport, measured in bytes per second
- args[5]: Message timestamp (when the message was originally sent, in seconds)
- args[6]: Current timestamp (the result of time (NULL))
- args[7]: Knowledge Domain (partition of the knowledge updates)
- args[8]: Originator (identifier of sender, usually host:port)
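Because these arguments are positional, a filter can only read `args[i]` safely once `args.size () > i`. Named constants make that check explicit and avoid magic numbers. The enum and helper below are hypothetical conveniences, not MADARA identifiers.

```cpp
#include <cstddef>

// Hypothetical names for the positional filter arguments listed above.
enum FilterArg : std::size_t
{
  ARG_RECORD = 0,
  ARG_NAME = 1,
  ARG_OPERATION = 2,
  ARG_SEND_BANDWIDTH = 3,
  ARG_RECEIVE_BANDWIDTH = 4,
  ARG_SENT_TIME = 5,
  ARG_CURRENT_TIME = 6,
  ARG_DOMAIN = 7,
  ARG_ORIGINATOR = 8
};

// True if an argument list of `arg_count` entries contains `index`.
constexpr bool has_arg (std::size_t arg_count, FilterArg index)
{
  return arg_count > static_cast<std::size_t> (index);
}
```

Inside a filter, a guard such as `if (has_arg (args.size (), ARG_SEND_BANDWIDTH))` protects a later read of `args[ARG_SEND_BANDWIDTH]`.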

The filter can add data to the payload by pushing a variable name (string) followed by a value, which can be a double, string, integer, byte array, or array of integers or doubles, just as you would do with a set operation. This can be useful if other reasoners in the network are expecting additional meta data for the update (which they are free to strip out or ignore in a receive filter, if they don't need the information).

The filter can also access any variable in the KnowledgeBase through the Variables facade. With the arguments and variables interfaces, developers can respond to transport events in highly dynamic and extensible ways.

To create a bandwidth filter for send/receive/rebroadcast events, we simply need to create a filter that looks at argument index 3 (how much bandwidth we have used sending) or argument index 4 (how much bandwidth we are receiving, in bytes per second), depending on which type of bandwidth enforcement we want to do.

In the following example, we drop every record in a packet while our send rate over the past 10 seconds is at or above 100,000 bytes per second.

Example of enforcing a send bandwidth limit via filtering

```cpp
#include "madara/knowledge/KnowledgeBase.h"

// Do not allow more than 100k bytes per second
madara::knowledge::KnowledgeRecord
enforce_send_limit (
  madara::knowledge::FunctionArguments & args,
  madara::knowledge::Variables & variables)
{
  // by default, we return an empty record, which means remove it
  madara::knowledge::KnowledgeRecord result;

  // args[3] (send bandwidth) must exist before we read it
  if (args.size () > 3)
  {
    // only modify the return value with args[0] if we are under 100KB/s
    if (args[3].to_integer () < 100000)
    {
      result = args[0];
    }
  }

  return result;
}

int main (int argc, char ** argv)
{
  // Create transport settings for a multicast transport
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // add the above filter for all record types, applied before sending
  // and before rebroadcasting
  settings.add_send_filter (madara::knowledge::KnowledgeRecord::ALL_TYPES,
    enforce_send_limit);
  settings.add_rebroadcast_filter (madara::knowledge::KnowledgeRecord::ALL_TYPES,
    enforce_send_limit);

  // create a knowledge base with the multicast transport settings
  madara::knowledge::KnowledgeBase knowledge ("agent1", settings);

  // do normal reasoning, read files, or whatever other logic is needed
  // ...

  return 0;
}
```

Filters can inspect argument indices 5 and 6 for information on the sent and received time for each packet. The difference between these two arguments is called the packet latency, and this latency value can inform the filter of deadline violations.

Example of enforcing a network latency deadline via filtering

```cpp
#include "madara/knowledge/KnowledgeBase.h"

madara::knowledge::KnowledgeRecord
filter_deadlines (
  madara::knowledge::FunctionArguments & args,
  madara::knowledge::Variables & vars)
{
  madara::knowledge::KnowledgeRecord result;

  // we read up to args[6], so we need at least 7 arguments
  if (args.size () > 6)
  {
    // args[5] is sent time, args[6] is current time
    // keep any packet with a latency of less than 5 seconds
    if (args[6].to_integer () - args[5].to_integer () < 5)
    {
      result = args[0];
    }
  }

  return result;
}

int main (int argc, char ** argv)
{
  // Create transport settings for a multicast transport
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // add the above filter for all record types, applied when receiving
  settings.add_receive_filter (madara::knowledge::KnowledgeRecord::ALL_TYPES,
    filter_deadlines);

  // create a knowledge base with the multicast transport settings
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // do normal reasoning, read files, or whatever other logic is needed
  // ...

  return 0;
}
```

Filters that drop packets can be very useful, but developers also have wide latitude to inflate or reduce packets and the updates within them. Packet shaping is any operation that transforms a packet element into a different form, such as converting packets into XML, resizing an image payload, or encrypting part of a packet with a private key.

In the following example, we simply encapsulate any string payload with the xml elements <item> and </item>.

Example of shaping a payload before it gets sent out

```cpp
#include <sstream>
#include "madara/knowledge/KnowledgeBase.h"

madara::knowledge::KnowledgeRecord
add_item_tag (
  madara::knowledge::FunctionArguments & args,
  madara::knowledge::Variables & vars)
{
  madara::knowledge::KnowledgeRecord result;

  // if we have an arg and it is a string value
  if (args.size () > 0 && args[0].is_string_type ())
  {
    // encase it in an <item> tag
    std::stringstream buffer;
    buffer << "<item>";
    buffer << args[0].to_string ();
    buffer << "</item>";
    result.set_value (buffer.str ());
  }

  return result;
}

int main (int argc, char ** argv)
{
  // Create transport settings for a multicast transport
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // add the above filter to string types
  settings.add_send_filter (madara::knowledge::KnowledgeRecord::STRING,
    add_item_tag);

  // create a knowledge base with the multicast transport settings
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // each of these will be encased in an item tag after filtering
  knowledge.set ("name", "John Smith");
  knowledge.set ("occupation", "Banker");

  // each of these will not be encased because they are not strings
  knowledge.set ("age", madara::knowledge::KnowledgeRecord::Integer (43));
  knowledge.set ("money", 553200.50);

  return 0;
}
```

Aggregate filters are the preferred way of creating callbacks for send/receive/rebroadcast events on the networking transport. They differ from individual record filters in that aggregate filters take the entire map of records, are generally more efficient, and are more flexible.

There are several examples of aggregate filters in the source repository that may help with seeing how these are implemented. A good instance is the PeerDiscovery filter for maintaining a list of connected agents over a MADARA transport.

PeerDiscovery Filter: Header | Source | API Documentation

Using the PeerDiscovery filter (or any aggregate filter similarly extended from madara::filters::AggregateFilter) is as easy as adding it as a receive filter. Other filters can be added as send or rebroadcast filters, as appropriate.

```cpp
#include "madara/knowledge/KnowledgeBase.h"
#include "madara/filters/PeerDiscovery.h"

int main (int argc, char ** argv)
{
  // Create transport settings for a multicast transport
  madara::transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = madara::transport::MULTICAST;

  // add peer discovery to on receive events
  madara::filters::PeerDiscovery newFilter;
  settings.add_receive_filter (&newFilter);

  // create a knowledge base with the multicast transport settings
  madara::knowledge::KnowledgeBase knowledge ("", settings);

  // the filter will be called whenever we receive anything. Nothing else required.
  // ...

  return 0;
}
```

Buffer Filters are filters for encoding and decoding to and from a character buffer. An example implementation of a Buffer Filter is the AESBufferFilter.

AESBufferFilter: Header | Source

```cpp
// to use the AESBufferFilter, you'll need to compile with SSL support
#include "madara/knowledge/KnowledgeBase.h"
#include "madara/filters/ssl/AESBufferFilter.h"

namespace filters = madara::filters;
namespace transport = madara::transport;
namespace knowledge = madara::knowledge;

int main (int argc, char ** argv)
{
  // Create transport settings for a multicast transport
  transport::QoSTransportSettings settings;
  settings.hosts.push_back ("239.255.0.1:4150");
  settings.type = transport::MULTICAST;

  // encrypt all data with the shared key "testPassword#214"
  filters::AESBufferFilter encryption;
  encryption.generate_key ("testPassword#214");

  // add AES encryption for all sent and received knowledge; a buffer filter
  // is registered once, by pointer, and encodes on send and decodes on receive
  settings.add_filter (&encryption);

  // create a knowledge base with the multicast transport settings
  knowledge::KnowledgeBase knowledge ("", settings);

  // any knowledge sent will be encrypted by the buffer filter
  // any knowledge received will be decrypted by the buffer filter
  // ...

  return 0;
}
```

MADARA transports can be configured to trust or ban lists of peers. The banned list can be useful for mitigating known faulty sensors that are flooding the network, as well as for enforcing basic security. The trusted list is more useful for security policies and is significantly more stringent: once any peer is added to the trusted list, updates from peers not on that list are automatically rejected and cannot modify the local context.

Example of adding peers to trusted list

```cpp
// only trust the agents "agent2" and "agent3"
madara::transport::QoSTransportSettings settings;
settings.add_trusted_peer ("agent2");
settings.add_trusted_peer ("agent3");

// others could add "agent1" to their trusted list
madara::knowledge::KnowledgeBase knowledge ("agent1", settings);
```

The code above sets this agent's unique identifier to "agent1". The next example shows a peer that does not trust knowledge from that agent.

Example of adding peers to banned list

```cpp
// Do not trust "agent1"
madara::transport::QoSTransportSettings settings;
settings.add_banned_peer ("agent1");

// others could add "agent2" to their trusted list
madara::knowledge::KnowledgeBase knowledge ("agent2", settings);
```
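The acceptance rule for trusted and banned peers can be summarized as a small predicate. `PeerPolicy` is a hypothetical model, not MADARA code. One assumption is labeled in the code: a banned peer is rejected even if it also appears on the trusted list, since the text above does not say how MADARA resolves that conflict.

```cpp
#include <set>
#include <string>

// Hypothetical model of the peer policy described above.
struct PeerPolicy
{
  std::set<std::string> trusted;
  std::set<std::string> banned;

  bool accepts (const std::string & originator) const
  {
    // assumption: the banned list wins over the trusted list
    if (banned.count (originator) > 0)
      return false;
    // an empty trusted list means "trust every non-banned peer";
    // otherwise only peers on the trusted list may update us
    return trusted.empty () || trusted.count (originator) > 0;
  }
};
```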

Before deploying MADARA applications into real-world, real-time situations (especially wireless ones), developers should test that their applications work despite packet loss. MADARA provides first-class support for dropping packets at deterministic and random intervals.

The deterministic policy is enforced via a stride scheduler within the Packet Scheduler.

Example of setting a deterministic non-bursty packet drop policy

```cpp
madara::transport::QoSTransportSettings settings;

/**
 * Set a drop rate of 20% in a deterministic manner (1 drop, then 4 successful sends)
 **/
settings.update_drop_rate (.2, madara::transport::PACKET_DROP_DETERMINISTIC);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```

Burst usage

When updating a drop rate, you can specify a drop burst type. Bursts are sequences of drops in a row that may prove useful when trying to mimic real-world drop patterns. Bursts can interact with the deterministic policy's schedule in unusual ways and may produce a higher drop rate than intended.

Example of setting a deterministic bursty packet drop policy

```cpp
madara::transport::QoSTransportSettings settings;

/**
 * Set a drop rate of 20% in a deterministic manner with 2 successive drops (burst rate 2)
 **/
settings.update_drop_rate (.2, madara::transport::PACKET_DROP_DETERMINISTIC, 2);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```
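A stride scheduler makes a deterministic drop pattern easy to reason about. In this hypothetical model (`DeterministicDropper` and `drop_pattern` are illustrative, not MADARA's Packet Scheduler), "drop" and "send" are two clients holding tickets in proportion to the rate. Each packet goes to the client with the lowest pass value, which then advances by its stride, and ties go to "drop". For a 20% rate this reproduces the "1 drop, then 4 successful sends" pattern. Bursts are modeled by also dropping the next `burst - 1` packets, which shows one way a burst setting can push the effective rate above the configured one.

```cpp
#include <cmath>
#include <cstdint>
#include <string>

class DeterministicDropper
{
public:
  explicit DeterministicDropper (double drop_rate, std::uint64_t burst = 1)
    : burst_ (burst == 0 ? 1 : burst)
  {
    // convert the rate into tickets out of 1000 (clamped to 0..1000)
    long tickets = std::lround (drop_rate * 1000.0);
    if (tickets < 0) tickets = 0;
    if (tickets > 1000) tickets = 1000;
    drop_tickets_ = static_cast<std::uint64_t> (tickets);
    send_tickets_ = 1000 - drop_tickets_;
  }

  // Returns true if the next packet should be dropped.
  bool should_drop ()
  {
    if (burst_remaining_ > 0)  // finish an in-progress burst first
    {
      --burst_remaining_;
      return true;
    }
    if (drop_tickets_ == 0)
      return false;
    if (send_tickets_ == 0)
      return true;

    // lowest pass wins; ties go to "drop" so the pattern starts with a drop
    if (drop_pass_ <= send_pass_)
    {
      drop_pass_ += kStride / drop_tickets_;
      burst_remaining_ = burst_ - 1;
      return true;
    }
    send_pass_ += kStride / send_tickets_;
    return false;
  }

private:
  static constexpr std::uint64_t kStride = 1000000;
  std::uint64_t burst_;
  std::uint64_t burst_remaining_ = 0;
  std::uint64_t drop_tickets_ = 0;
  std::uint64_t send_tickets_ = 0;
  std::uint64_t drop_pass_ = 0;
  std::uint64_t send_pass_ = 0;
};

// Runs the model for n packets: 'D' = dropped, 'S' = sent.
std::string drop_pattern (double drop_rate, std::uint64_t burst, int n)
{
  DeterministicDropper dropper (drop_rate, burst);
  std::string pattern;
  for (int i = 0; i < n; ++i)
    pattern += dropper.should_drop () ? 'D' : 'S';
  return pattern;
}
```

In this model, `drop_pattern (0.2, 1, 10)` gives `DSSSSDSSSS`, while a burst of 2 gives `DDSSSSDDSS`, i.e. 40% of packets dropped instead of 20%.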

The probabilistic policy drops packets randomly, following a uniform distribution, at a target rate.

Normal usage

The following example shows how to set a 20% drop rate in a probabilistic manner without scheduled bursts.

Example of setting a probabilistic non-bursty packet drop policy

```cpp
madara::transport::QoSTransportSettings settings;

// Set a drop rate of 20% in a probabilistic manner
settings.update_drop_rate (.2, madara::transport::PACKET_DROP_PROBABLISTIC);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```

Burst usage

When updating a drop rate, you can specify a drop burst type. Bursts are sequences of drops in a row that may prove useful when trying to mimic real world drop patterns.

Example of setting a probabilistic bursty packet drop policy

```cpp
madara::transport::QoSTransportSettings settings;

/**
 * Set a drop rate of 20% in a probabilistic manner with 2 successive drops (burst rate 2)
 **/
settings.update_drop_rate (.2, madara::transport::PACKET_DROP_PROBABLISTIC, 2);

madara::knowledge::KnowledgeBase knowledge ("", settings);
```
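For comparison with the deterministic model, a probabilistic policy can be sketched with a seeded Bernoulli trial per packet. `ProbabilisticDropper` is a hypothetical model, not MADARA's implementation; as an assumption, a triggered drop also drops the following `burst - 1` packets, so bursts raise the long-run rate above `drop_rate`.

```cpp
#include <cstdint>
#include <random>

class ProbabilisticDropper
{
public:
  ProbabilisticDropper (double drop_rate, std::uint64_t burst, std::uint32_t seed)
    : coin_ (drop_rate), burst_ (burst == 0 ? 1 : burst), rng_ (seed) {}

  // Returns true if the next packet should be dropped.
  bool should_drop ()
  {
    if (burst_remaining_ > 0)  // continue a burst that already started
    {
      --burst_remaining_;
      return true;
    }
    if (coin_ (rng_))  // independent trial with probability drop_rate
    {
      burst_remaining_ = burst_ - 1;
      return true;
    }
    return false;
  }

private:
  std::bernoulli_distribution coin_;
  std::uint64_t burst_;
  std::uint64_t burst_remaining_ = 0;
  std::mt19937 rng_;
};
```

A fixed seed keeps a test run reproducible; over many packets the fraction dropped with `burst = 1` approaches the configured rate.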

Domains are partitions of the knowledge space that allow for isolation of knowledge transfer and learning. With domains, you can establish cliques of knowledge transfer and essentially sculpt how knowledge gets transferred over the network.

If you are familiar with pub/sub architectures, domains work a lot like topics in other middlewares such as DDS. However, unlike other pub/sub architectures, domains allow for sampling multiple topics: each agent can read from multiple domains, which lets programmers set up group-based transfer within a single transport.

As of version 2.9.17, domains are split into two types within the TransportSettings class: 1) the write domain and 2) read domains. The write domain is the domain that all knowledge is tagged with when it goes over the transport. Read domains is a map of all domains relevant to this agent. By default, if no read domains have been added, the agent reads from its write domain. This allows the agent to simply assign a write domain and also read knowledge from other agents on that domain. However, the read domains map is separate from the write domain entry, so you are free to have the agent write to a domain that it does not read from, or read from domains that it does not write to.

Example of setting a unique write domain

```cpp
madara::transport::QoSTransportSettings settings;

// by default, this also means we read knowledge on domain team1
settings.write_domain = "team1";

madara::knowledge::KnowledgeBase knowledge ("", settings);
```

Example of setting unique read domains

```cpp
madara::transport::QoSTransportSettings settings;

// Set the write domain to team1
settings.write_domain = "team1";

// set read domains to be team1 knowledge and knowledge from officials
settings.add_read_domain ("team1");
settings.add_read_domain ("officials");

/**
 * note that if we had only set "officials" above, then external updates to "team1" would
 * not be applied to our knowledge base
 **/
madara::knowledge::KnowledgeBase knowledge ("", settings);
```
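The read/write domain rules above reduce to one question per incoming update: is its domain among the domains this agent reads? `DomainSettings` below is a hypothetical model of those rules (a set stands in for the read domains map), not the MADARA TransportSettings class.

```cpp
#include <set>
#include <string>

// Hypothetical model of the write/read domain rules described above.
struct DomainSettings
{
  std::string write_domain;
  std::set<std::string> read_domains;

  // Domains whose updates this agent applies: the explicit read domains,
  // or just the write domain when none have been added.
  std::set<std::string> effective_read_domains () const
  {
    if (read_domains.empty ())
      return {write_domain};
    return read_domains;
  }

  bool accepts (const std::string & domain) const
  {
    return effective_read_domains ().count (domain) > 0;
  }
};
```

The last case in the test mirrors the note in the example: reading only "officials" means updates tagged "team1" are ignored, even though the agent writes to "team1".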

As implied in the above examples, domains are a powerful tool for agent teaming and for separating knowledge without using multiple transports. However, we currently only plan to allow one write domain per transport, so if you want to replicate knowledge to multiple domains, you will need to add an otherwise identical transport for each additional write domain. If doing this, it is best practice to enable the

Mutated knowledge is aggregated and sent over the network transports whenever you call set, evaluate, wait, or send_modifieds. This can be unintuitive for developers of large applications who want to update many variables and then send everything at once. You can delay sending modifieds by setting the delay_sending_modifieds member to true in the madara::knowledge::EvalSettings or madara::knowledge::WaitSettings object you pass to the set, evaluate, and wait functions on the KnowledgeBase.

The preferred way to aggregate knowledge, especially in larger applications, is to use Knowledge Containers. Containers are object-oriented abstractions that point to a specific variable inside of the KnowledgeBase. Most of these Containers provide extremely fast, O(1) lookups, and they also suppress sends over the network during set-like operations. Using Containers, you can quickly set/get values and build knowledge aggregations without sending over the network. When you're ready to send, you call KnowledgeBase::send_modifieds. See the Containers wiki for more information and examples.
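The batching idea behind send_modifieds can be modeled without MADARA. `ModifiedTracker` is a hypothetical class, not the KnowledgeBase: `set` only records the latest value per variable, and `send_modifieds` hands everything collected so far to the transport as a single aggregated message. This is the effect delayed sends and Containers aim for, instead of one network send per `set` call.

```cpp
#include <cstddef>
#include <map>
#include <string>
#include <vector>

class ModifiedTracker
{
public:
  // Record a local change; repeated writes keep only the latest value.
  void set (const std::string & name, long long value)
  {
    modifieds_[name] = value;
  }

  // Return the batch that would be sent, then start a new, empty batch.
  std::map<std::string, long long> send_modifieds ()
  {
    std::map<std::string, long long> batch;
    batch.swap (modifieds_);
    if (!batch.empty ())
      sent_messages_.push_back (batch);  // one message for the whole batch
    return batch;
  }

  std::size_t messages_sent () const { return sent_messages_.size (); }

private:
  std::map<std::string, long long> modifieds_;
  std::vector<std::map<std::string, long long>> sent_messages_;
};
```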

If you are looking for code examples and guides, your best bet would be to start with the tutorials (located in the tutorials directory of the MADARA root directory--see the README.txt file for descriptions). After that, there are many dozens of tests that showcase and profile the many functions, classes, and functionalities available to MADARA users.

Users may also browse the Library Documentation for all MADARA functions, classes, etc. and the Wiki pages on this website.

First Steps in MADARA: Covers installation and a Hello World program.

Intro to Networking: Covers creating a multicast transport and coordination and image sharing between multiple agents.
