- includes application of grid search, SMOTE sampling, and visualization using principal component analysis

- data used is from UCI Machine Learning Repository

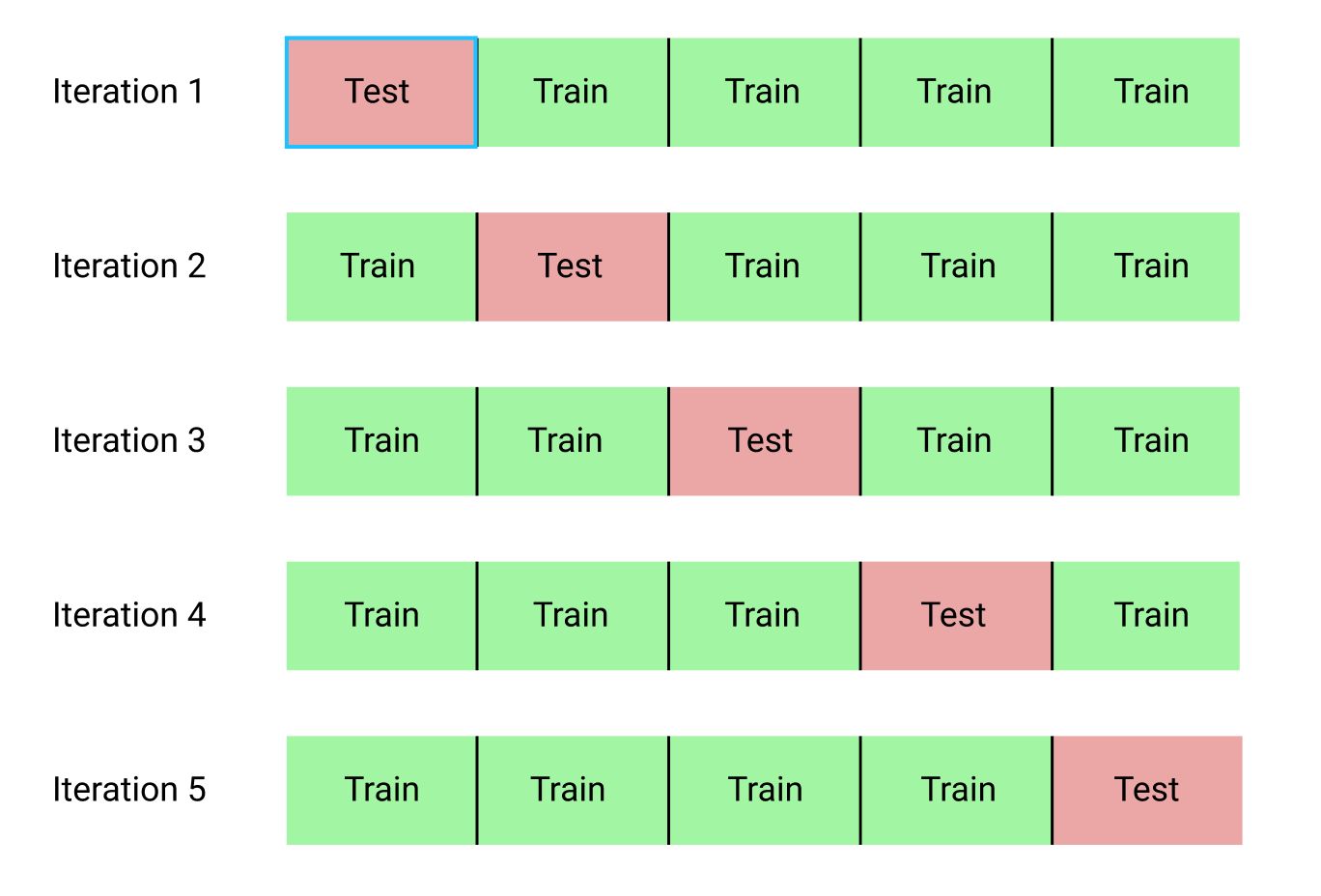

For most models, k-fold cross validation was performed with grid search to find optimal model parameters.

- read about k-fold cross validation at https://towardsdatascience.com/cross-validation-explained-evaluating-estimator-performance-e51e5430ff85 and https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.KFold.html

- read about grid search at https://scikit-learn.org/stable/modules/generated/sklearn.model_selection.GridSearchCV.html and https://towardsdatascience.com/using-3d-visualizations-to-tune-hyperparameters-of-ml-models-with-python-ba2885eab2e9
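As a minimal sketch of how the two techniques combine, the snippet below scores every parameter combination with 5-fold cross validation. The synthetic data, the choice of `KNeighborsClassifier`, and the grid values are illustrative assumptions, not the settings used in this project.

```python
from sklearn.datasets import make_classification
from sklearn.model_selection import GridSearchCV, KFold
from sklearn.neighbors import KNeighborsClassifier

# Synthetic stand-in data (assumption: the real data comes from the UCI repository).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# Hypothetical grid: 3 x 2 = 6 combinations, each scored on 5 folds.
param_grid = {"n_neighbors": [3, 5, 7], "weights": ["uniform", "distance"]}
cv = KFold(n_splits=5, shuffle=True, random_state=0)

search = GridSearchCV(KNeighborsClassifier(), param_grid, cv=cv, scoring="accuracy")
search.fit(X, y)

print(search.best_params_)  # combination with the highest mean fold score
print(search.best_score_)   # mean cross-validated accuracy of that combination
```

After fitting, `search.best_estimator_` holds a model refit on all of the data with the best parameters.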

XGBoost, or extreme gradient boosting, is a widely used gradient-boosted decision tree library and a frequent winner in machine learning competitions.

- supports many tuning parameters, such as learning rate and early stopping

- open source; code and downloads at https://pypi.org/project/xgboost/

- paper by Tianqi Chen and Carlos Guestrin available at https://arxiv.org/abs/1603.02754

- as a tree boosting algorithm, it needs minimal data preprocessing (e.g. no data normalization/scaling)

- a random forest classifier (RFC) trains multiple decision trees and averages their predictions to classify a sample

- documentation for sklearn's RFC at https://scikit-learn.org/stable/modules/generated/sklearn.ensemble.RandomForestClassifier.html

- again, as a tree-based model, RFC requires minimal data preprocessing
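The averaging can be checked directly. The sketch below, which assumes synthetic data and a hypothetical forest of 25 trees, averages the class probabilities of the individual trees and compares the result with the forest's own output.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.ensemble import RandomForestClassifier

# Synthetic stand-in data, left unscaled (tree-based models do not need scaling).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

forest = RandomForestClassifier(n_estimators=25, random_state=0)
forest.fit(X, y)

# Average the class probabilities predicted by each individual tree.
tree_probs = np.mean([tree.predict_proba(X) for tree in forest.estimators_], axis=0)

# Compare with the probabilities reported by the forest itself.
forest_probs = forest.predict_proba(X)
print(np.allclose(tree_probs, forest_probs))
```

The predicted class is then the one with the highest averaged probability.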

- note: PCA was used to reduce the data to 2 dimensions in order to plot the separating hyperplane

- support vector machines classify samples by finding a hyperplane that separates the classes while maximizing the margin, i.e. the distance from the hyperplane to the nearest points of each class

- as a distance-based method, SVM requires data normalization to ensure no feature takes precedence over the others

- documentation at https://scikit-learn.org/stable/modules/generated/sklearn.svm.SVC.html#sklearn.svm.SVC
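The scaling, projection, and boundary steps might look roughly like the sketch below. The synthetic data, the linear kernel, `C=1.0`, and fitting the SVM on the 2D projection are all assumptions made for illustration; the settings actually used are not stated here.

```python
import numpy as np
from sklearn.datasets import make_classification
from sklearn.decomposition import PCA
from sklearn.pipeline import make_pipeline
from sklearn.preprocessing import StandardScaler
from sklearn.svm import SVC

# Synthetic stand-in data (assumption).
X, y = make_classification(n_samples=300, n_features=10, random_state=0)

# Scale first so no feature dominates the distances, then project to 2 dimensions.
projector = make_pipeline(StandardScaler(), PCA(n_components=2))
X_2d = projector.fit_transform(X)

# Hypothetical kernel and C value.
svm = SVC(kernel="linear", C=1.0)
svm.fit(X_2d, y)

# Evaluate the decision function on a grid covering the 2D plane; the zero
# level of these scores is the separating line.
xx, yy = np.meshgrid(
    np.linspace(X_2d[:, 0].min() - 1, X_2d[:, 0].max() + 1, 200),
    np.linspace(X_2d[:, 1].min() - 1, X_2d[:, 1].max() + 1, 200),
)
grid_scores = svm.decision_function(np.c_[xx.ravel(), yy.ravel()]).reshape(xx.shape)
```

`xx`, `yy`, and `grid_scores` can be handed to a contour plotting routine to draw the boundary over a scatter plot of `X_2d`.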

- again, PCA was used to bring dimensionality down to 2 for plotting purposes

- documentation at https://scikit-learn.org/stable/modules/generated/sklearn.neighbors.KNeighborsClassifier.html

- a simple deep learning model with residual connections, similar to ResNet v1, was used so that "deep" networks train at least as well as "shallow" networks

- training a deep learning model means learning the weights and biases of the network by minimizing an error (loss) function with gradient-based optimization

- documentation at https://keras.io/
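To illustrate why residual connections help, here is a small NumPy sketch of a ResNet v1 style block (addition followed by ReLU). It is not the Keras model used in this project; the layer width of 8 and the helper names `relu` and `residual_block` are hypothetical.

```python
import numpy as np

def relu(x):
    return np.maximum(x, 0.0)

def residual_block(x, w1, b1, w2, b2):
    """Return relu(x + F(x)), where F is a two-layer transformation."""
    hidden = relu(x @ w1 + b1)
    return relu(x + hidden @ w2 + b2)

rng = np.random.default_rng(0)
x = relu(rng.normal(size=(4, 8)))  # non-negative activations from an earlier layer

# With all weights at zero, F(x) = 0 and the block passes its input through unchanged.
zeros_w = np.zeros((8, 8))
zeros_b = np.zeros(8)
out = residual_block(x, zeros_w, zeros_b, zeros_w, zeros_b)
print(np.allclose(out, x))
```

Because each block can fall back to the identity, stacking more blocks does not have to make the network worse than its shallower counterpart.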