
Node 10: log entries appear as string in textPayload, not structured in jsonPayload #291

Comments

|

Hi @mickdekkers. Looking at the screenshot, the 'actual' screenshot seems to be showing the console log stream rather than the bunyan log stream. Observe the Can you recheck the Cloud Logging UI again to make sure you're looking at the |

|

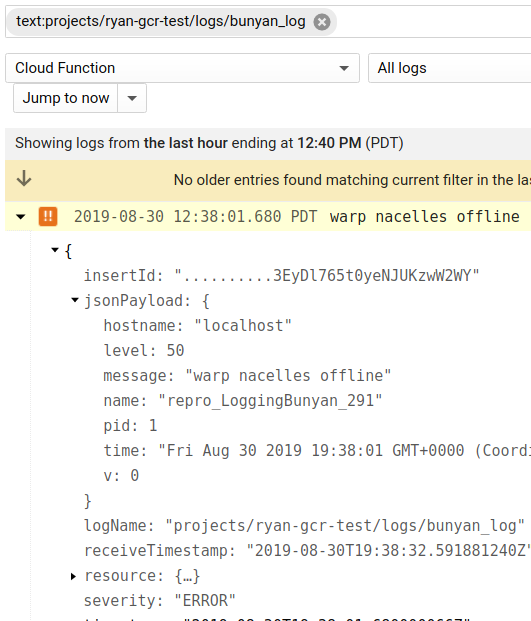

Hi @ofrobots, thanks for your reply. It looks like you're right: I wasn't looking at the I looked into this some more and found something interesting: the For Node 8, the For Node 10, the To illustrate this, I took a screenshot of the cloud function resource in the log viewer after:

As you can see, the Node 10 logs appear as unstructured text in this view, while the Node 8 logs appear structured. The Node 10 structured logs are available under the I'm not sure if this is an issue with the bunyan integration or the Stackdriver log viewer UI itself, but I thought I'd report it either way. |

|

It looks like the next step is to investigate whether the global resource is being used for logs only in Node 10. |

|

@mickdekkers I can reproduce the behavior you describe. I'm investigating. |

|

The root cause is that the nodejs10 runtime no longer exposes the |

|

Just an update - we are continuing to look into this and trying to figure out the best way to resolve the issue. The nodejs10 runtime on GCF now follows the container runtime contract. We need to update code in |

|

Any updates on this? It is really annoying trying to read logs that look like this in the log console. |

|

So, this example contains both a stdout stream and a logging stream. If you search for the log by the log's "name", you get the correct result. This is unexpected: we thought the structured log output should go to the same log in the Google Cloud dashboard as the stdout stream (projects/ryan-gcr-test/logs/cloudfunctions.googleapis.com%2Fcloud-function). The log name we passed into the bunyan constructor also looks nothing like the logName it ended up with in the logging UI. |

|

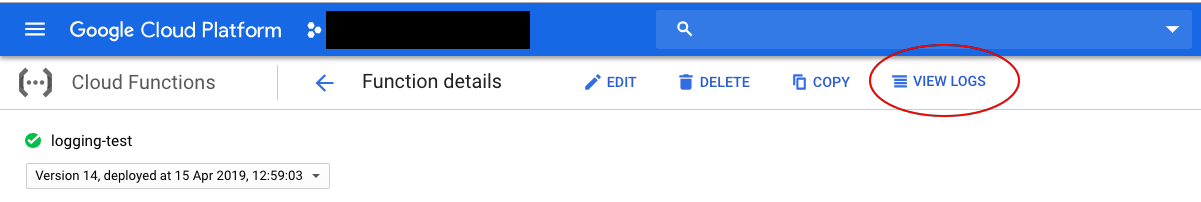

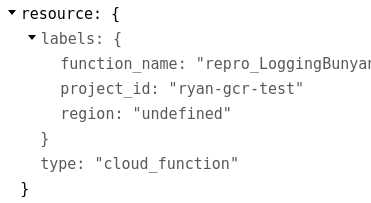

Looks like we can configure this. So if we pass the and remove the stdout log to prevent duplicates, things should be back to NEARLY the result you expect.

There is still a problem where GOOGLE_CLOUD_REGION and FUNCTION_REGION aren't defined in Node 10 cloud functions. This results in a resource with an undefined region. https://github.com/googleapis/nodejs-logging/blob/master/src/metadata.ts#L41 This means that if you click "view logs" from the function dashboard, you'll be taken to a filter that excludes the logs from logging bunyan. default filter:

There doesn't seem to be a way to query the region from the metadata service in the Node 10 and Cloud Run environment. The metadata service does respond to "zone" with something like

The only workaround I can think of at the moment is to specify the GOOGLE_CLOUD_REGION env var in your function deployment so the resource is constructed correctly.

The work to fix this is out of scope for this repo and could be managed at quite a few levels.

4 is probably the best because sending logs in cloud function was always a bit of a race condition so a blocking write to a collector is needed to ensure logs aren't lost when functions are shutdown. companion issue on logging-winston googleapis/nodejs-logging-winston#333 |
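To make the workaround above concrete, here is a minimal sketch of building the resource explicitly so the region is never left undefined. The helper name `buildCloudFunctionResource` is hypothetical, and reading the function name from `FUNCTION_NAME` or `K_SERVICE` is an assumption about the runtime environment rather than something confirmed in this thread; only `GOOGLE_CLOUD_REGION` and `FUNCTION_REGION` come from the comment above.

```javascript
// Sketch: build an explicit monitored-resource descriptor for a Cloud Function
// instead of relying on the runtime to auto-populate it.
// Assumptions (not from the thread): the helper name, and that the function
// name is exposed as FUNCTION_NAME (older runtimes) or K_SERVICE (nodejs10).
function buildCloudFunctionResource(env = process.env) {
  const region = env.GOOGLE_CLOUD_REGION || env.FUNCTION_REGION;
  const functionName = env.FUNCTION_NAME || env.K_SERVICE;

  // Fail loudly rather than emit a resource with an undefined region.
  if (!region) {
    throw new Error('Region is not set; define GOOGLE_CLOUD_REGION in the function deployment.');
  }
  if (!functionName) {
    throw new Error('Function name is not set; expected FUNCTION_NAME or K_SERVICE.');
  }

  return {
    type: 'cloud_function',
    labels: { function_name: functionName, region },
  };
}

// Example with an explicit environment object (values are illustrative):
const resource = buildCloudFunctionResource({
  GOOGLE_CLOUD_REGION: 'us-central1',
  K_SERVICE: 'sample-http',
});
console.log(JSON.stringify(resource));
```

The returned object is meant to be passed as the `resource` option of the `LoggingBunyan` constructor. Throwing on a missing region is a design choice: a resource with an undefined region is silently excluded by the function dashboard's default filter, which is harder to notice than a startup error.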

|

Hello, Just FYI, I am having the same issue using Go in a cloud function. |

|

If you need consistent log names and attributes across runtimes, you'll need to provide the resource metadata manually rather than depending on the runtime environment to auto-populate the attributes you need. See this comment above for details. This is not the most satisfactory resolution, but there are too many runtimes, and your log data is much more valuable when collated along consistent attributes. |

Was #4 actually completed? I'm wondering if there's a way to simply write to stdout (or use console.log/info/warn) and still see structured output (so we don't need to wait for network traffic to Stackdriver to complete before exiting a cloud function). |
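For anyone with the same question, here is a minimal sketch of the stdout approach. It assumes the runtime's log collector parses each single-line JSON object written to stdout into a structured entry and treats `severity` and `message` as special fields; whether that holds for a given runtime is not confirmed in this thread. The helper names `formatLogEntry` and `logStructured` are hypothetical.

```javascript
// Sketch: emit one JSON object per line on stdout, with no network call.
// Assumption: the platform's collector turns each JSON line into a
// structured log entry and reads `severity` and `message` specially.
function formatLogEntry(severity, message, fields = {}) {
  // Spread custom fields first so they cannot overwrite severity/message.
  return JSON.stringify({ ...fields, severity, message });
}

function logStructured(severity, message, fields) {
  // JSON.stringify escapes embedded newlines, so each entry stays on one line.
  process.stdout.write(formatLogEntry(severity, message, fields) + '\n');
}

logStructured('INFO', 'user signed in', { userId: 'abc123' });
```

Because the write goes to stdout, the function does not have to wait for a logging API request to finish before it exits.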

|

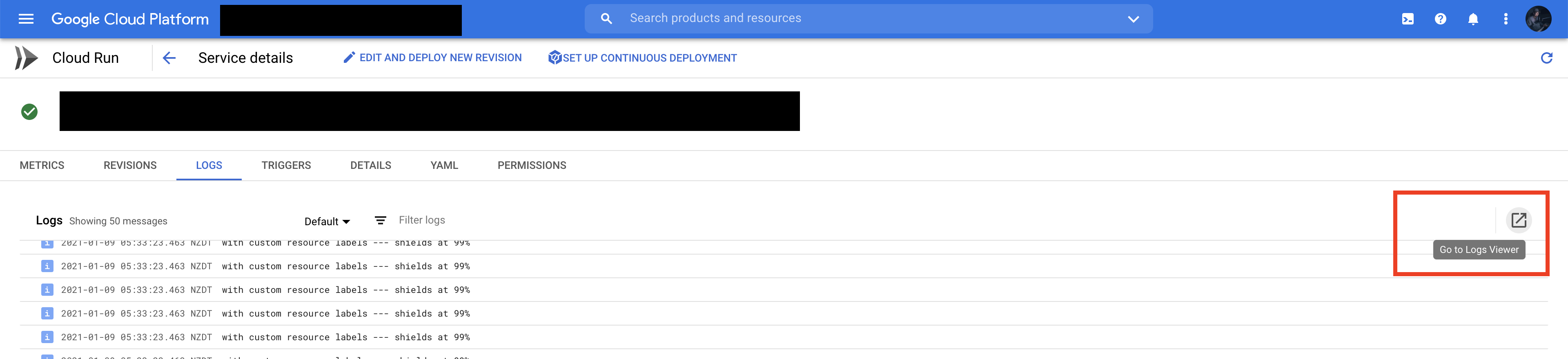

This is what worked for me to make sure logs show up in their desired location and not just the global view:

    const { createLogger } = require(`bunyan`)
    const { LoggingBunyan } = require(`@google-cloud/logging-bunyan`)

    const serviceName = `service-authentication`

    const loggingBunyan = new LoggingBunyan({
      // Explicit resource so entries land in the Cloud Run logging view
      resource: {
        type: `cloud_run_revision`,
        labels: {
          service_name: serviceName,
          location: `australia-southeast1`,
        },
      },
    })

    const bunyanLogger = createLogger({
      name: `authentication-service`,
      streams: [
        // Log to the console at 'debug' and above
        { stream: process.stdout, level: `debug` },
        // And log to Cloud Logging, at 'debug' and above
        loggingBunyan.stream(`debug`),
      ],
    })

The key part here is adding the custom resource labels so the logs show up in your desired logging location. I was running my application on Cloud Run, and I found out by clicking the "open in stackdriver/log viewer" button that the query it was making was: So after adding these resource properties to my bunyan configuration, the logs appeared in the Cloud Run logging view, when previously they only appeared in the global view. |

Environment details

@google-cloud/logging-bunyan version: 0.10.1

bunyan version: 1.8.12

Steps to reproduce

    {
      "name": "sample-http",
      "version": "0.0.1",
      "dependencies": {
        "@google-cloud/logging-bunyan": "0.10.1",
        "bunyan": "1.8.12"
      }
    }

Expected result

Actual result

Additional information

The issue does not appear when I set the runtime to Node 6, nor when I set the runtime to Node 8. The expected result screenshot is the output of Node 6.
