Releases: autogluon/autogluon

v0.8.3

What's Changed

v0.8.3 is a patch release to address security vulnerabilities.

See the full commit change-log here: 0.8.2...0.8.3

This version supports Python versions 3.8, 3.9, and 3.10.

Changes

v1.1.0

Version 1.1.0

We're happy to announce the AutoGluon 1.1 release.

AutoGluon 1.1 contains major improvements to the TimeSeries module, achieving a 60% win-rate vs AutoGluon 1.0 through the addition of Chronos, a pretrained model for time series forecasting, along with numerous other enhancements. The other modules have also been enhanced through new features such as Conv-LoRA support and improved performance for large tabular datasets between 5 and 30 GB in size. For a full breakdown of AutoGluon 1.1 features, please refer to the feature spotlights and the itemized enhancements below.

This release supports Python versions 3.8, 3.9, 3.10, and 3.11. Loading models trained on older versions of AutoGluon is not supported. Please re-train models using AutoGluon 1.1.

This release contains 125 commits from 20 contributors!

Full Contributor List (ordered by # of commits):

@shchur @prateekdesai04 @Innixma @canerturkmen @zhiqiangdon @tonyhoo @AnirudhDagar @Harry-zzh @suzhoum @FANGAreNotGnu @nimasteryang @lostella @dassaswat @afmkt @npepin-hub @mglowacki100 @ddelange @LennartPurucker @taoyang1122 @gradientsky

Special thanks to @ddelange for their continued assistance with Python 3.11 support and Ray version upgrades!

Spotlight

AutoGluon Achieves Top Placements in ML Competitions!

AutoGluon has experienced widespread adoption on Kaggle since the AutoGluon 1.0 release.

AutoGluon has been used in over 130 Kaggle notebooks and mentioned in over 100 discussion threads in the past 90 days!

Most excitingly, AutoGluon has already been used to achieve top ranking placements in multiple competitions with thousands of competitors since the start of 2024:

| Placement | Competition | Author | Date | AutoGluon Details | Notes |
|---|---|---|---|---|---|
| 🥉 Rank 3/2303 (Top 0.1%) | Steel Plate Defect Prediction | Samvel Kocharyan | 2024/03/31 | v1.0, Tabular | Kaggle Playground Series S4E3 |
| 🥈 Rank 2/93 (Top 2%) | Prediction Interval Competition I: Birth Weight | Oleksandr Shchur | 2024/03/21 | v1.0, Tabular | |
| 🥈 Rank 2/1542 (Top 0.1%) | WiDS Datathon 2024 Challenge #1 | lazy_panda | 2024/03/01 | v1.0, Tabular | |
| 🥈 Rank 2/3746 (Top 0.1%) | Multi-Class Prediction of Obesity Risk | Kirderf | 2024/02/29 | v1.0, Tabular | Kaggle Playground Series S4E2 |
| 🥈 Rank 2/3777 (Top 0.1%) | Binary Classification with a Bank Churn Dataset | lukaszl | 2024/01/31 | v1.0, Tabular | Kaggle Playground Series S4E1 |
| Rank 4/1718 (Top 0.2%) | Multi-Class Prediction of Cirrhosis Outcomes | Kirderf | 2024/01/01 | v1.0, Tabular | Kaggle Playground Series S3E26 |

We are thrilled that the data science community is leveraging AutoGluon as their go-to method to quickly and effectively achieve top-ranking ML solutions! For an up-to-date list of competition solutions using AutoGluon, refer to our AWESOME.md, and don't hesitate to let us know if you use AutoGluon in a competition!

Chronos, a pretrained model for time series forecasting

AutoGluon-TimeSeries now features Chronos, a family of forecasting models pretrained on large collections of open-source time series datasets that can generate accurate zero-shot predictions for new unseen data. Check out the new tutorial to learn how to use Chronos through the familiar TimeSeriesPredictor API.

General

- Refactor project README & project Tagline @Innixma (#3861, #4066)

- Add AWESOME.md competition results and other doc improvements. @Innixma (#4023)

- Pandas version upgrade. @shchur @Innixma (#4079, #4089)

- PyTorch, CUDA, Lightning version upgrades. @prateekdesai04 @canerturkmen @zhiqiangdon (#3982, #3984, #3991, #4006)

- Ray version upgrade. @ddelange @tonyhoo (#3774, #3956)

- Scikit-learn version upgrade. @prateekdesai04 (#3872, #3881, #3947)

- Various dependency upgrades. @Innixma @tonyhoo (#4024, #4083)

TimeSeries

Highlights

AutoGluon 1.1 comes with numerous new features and improvements to the time series module. These include highly requested functionality such as feature importance, support for categorical covariates, ability to visualize forecasts, and enhancements to logging. The new release also comes with considerable improvements to forecast accuracy, achieving 60% win rate and 3% average error reduction compared to the previous AutoGluon version. These improvements are mostly attributed to the addition of Chronos, improved preprocessing logic, and native handling of missing values.
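To make the two headline numbers concrete, here is a minimal sketch of how a win rate and an average error reduction could be computed from paired per-dataset errors. The function names and the toy numbers are hypothetical, and the actual benchmark methodology (tie handling, error metric, aggregation) may differ.

```python
from statistics import mean


def win_rate(new_errors, old_errors):
    """Fraction of datasets where the new version has lower error.

    Ties count as half a win. This is an assumption; the release notes
    do not say how ties are treated.
    """
    if len(new_errors) != len(old_errors) or not new_errors:
        raise ValueError("need two non-empty, equally long error lists")
    score = 0.0
    for new, old in zip(new_errors, old_errors):
        if new < old:
            score += 1.0
        elif new == old:
            score += 0.5
    return score / len(new_errors)


def average_error_reduction(new_errors, old_errors):
    """Mean relative reduction in error, (old - new) / old, across datasets."""
    return mean((old - new) / old for new, old in zip(new_errors, old_errors))


# Hypothetical per-dataset errors (lower is better), for illustration only.
old = [1.00, 2.00, 0.50, 4.00, 1.00]
new = [0.90, 2.10, 0.45, 3.80, 1.05]

print(f"win rate: {win_rate(new, old):.0%}")
print(f"average error reduction: {average_error_reduction(new, old):.1%}")
```

Note that the two numbers answer different questions: the win rate counts how often the new version is better, while the average error reduction measures by how much.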

New Features

- Add Chronos pretrained forecasting model (tutorial). @canerturkmen @shchur @lostella (#3978, #4013, #4052, #4055, #4056, #4061, #4092, #4098)

- Measure the importance of features & covariates on the forecast accuracy with `TimeSeriesPredictor.feature_importance()`. @canerturkmen (#4033, #4087)

- Native missing values support (no imputation required). @shchur (#3995, #4068, #4091)

- Add support for categorical covariates. @shchur (#3874, #4037)

- Improve inference speed by persisting models in memory with `TimeSeriesPredictor.persist()`. @canerturkmen (#4005)

- Visualize forecasts with `TimeSeriesPredictor.plot()`. @shchur (#3889)

- Add `RMSLE` evaluation metric. @canerturkmen (#3938)

- Enable logging to file. @canerturkmen (#3877)

- Add option to keep lightning logs after training with `keep_lightning_logs` hyperparameter. @shchur (#3937)
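For readers unfamiliar with the RMSLE metric listed above, the following is a minimal sketch of the textbook definition (root mean squared logarithmic error) in plain Python. It is not AutoGluon's implementation, which may differ in how it aggregates across multiple time series; the function name `rmsle` is chosen here for illustration.

```python
import math


def rmsle(y_true, y_pred):
    """Root mean squared logarithmic error for non-negative values.

    Computes sqrt(mean((log(1 + pred) - log(1 + true)) ** 2)).
    """
    if len(y_true) != len(y_pred) or not y_true:
        raise ValueError("need two non-empty, equally long sequences")
    if min(min(y_true), min(y_pred)) < 0:
        raise ValueError("RMSLE is undefined for negative values")
    squared_log_errors = [
        (math.log1p(p) - math.log1p(t)) ** 2 for t, p in zip(y_true, y_pred)
    ]
    return math.sqrt(sum(squared_log_errors) / len(squared_log_errors))


# Toy values for illustration only.
print(rmsle([10.0, 100.0, 1000.0], [12.0, 90.0, 1100.0]))
```

Because the error is measured on a log scale, RMSLE penalizes relative rather than absolute deviations, which is useful when series differ widely in magnitude.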

Fixes and Improvements

- Automatically preprocess real-valued covariates @shchur (#4042, #4069)

- Add option to skip model selection when only one model is trained. @shchur (#4002)

- Ensure all metrics handle missing values in target @shchur (#3966)

- Fix bug when loading a GPU trained model on a CPU machine @shchur (#3979)

- Fix inconsistent random seed. @canerturkmen @shchur (#3934, #4099)

- Fix crash when calling .info after load. @afmkt (#3900)

- Fix leaderboard crash when no models trained. @shchur (#3849)

- Add prototype TabRepo simulation artifact generation. @shchur (#3829)

- Fix refit_full bug. @shchur (#3820)

- Documentation improvements, hide deprecated methods. @shchur (#3764, #4054, #4098)

- Minor fixes. @canerturkmen, @shchur, @AnirudhDagar (#4009, #4040, #4041, #4051, #4070, #4094)

AutoMM

Highlights

AutoMM 1.1 introduces the innovative Conv-LoRA, a parameter-efficient fine-tuning (PEFT) method stemming from our latest paper presented at ICLR 2024, titled "Convolution Meets LoRA: Parameter Efficient Finetuning for Segment Anything Model". Conv-LoRA is designed for fine-tuning the Segment Anything Model, exhibiting superior performance compared to previous PEFT approaches, such as LoRA and visual prompt tuning, across various semantic segmentation tasks in diverse domains including natural images, agriculture, remote sensing, and healthcare. Check out our Conv-LoRA example.

New Features

- Added Conv-LoRA, a new parameter efficient fine-tuning method. @Harry-zzh @zhiqiangdon (#3933, #3999, #4007, #4022, #4025)

- Added support for new column type: 'image_base64_str'. @Harry-zzh @zhiqiangdon (#3867)

- Added support for loading pre-trained weights in FT-Transformer. @taoyang1122 @zhiqiangdon (#3859)

Fixes and Improvements

- Fixed bugs in semantic segmentation. @Harry-zzh (#3801, #3812)

- Fixed crashes when using F1 metric. @suzhoum (#3822)

- Fixed bugs in PEFT methods. @Harry-zzh (#3840)

- Accelerated object detection training by ~30% for the high_quality and best_quality presets. @FANGAreNotGnu (#3970)

- Deprecated Grounding-DINO @FANGAreNotGnu (#3974)

- Fixed lightning upgrade issues @zhiqiangdon (#3991)

- Fixed using f1, f1_macr...

v1.0.0

Version 1.0.0

Today is finally the day... AutoGluon 1.0 has arrived!! After over four years of development and 2061 commits from 111 contributors, we are excited to share with you the culmination of our efforts to create and democratize the most powerful, easy to use, and feature rich automated machine learning system in the world.

AutoGluon 1.0 comes with transformative enhancements to predictive quality resulting from the combination of multiple novel ensembling innovations, spotlighted below. Besides performance enhancements, many other improvements have been made that are detailed in the individual module sections.

This release supports Python versions 3.8, 3.9, 3.10, and 3.11. Loading models trained on older versions of AutoGluon is not supported. Please re-train models using AutoGluon 1.0.

This release contains 223 commits from 17 contributors!

Full Contributor List (ordered by # of commits):

@shchur, @zhiqiangdon, @Innixma, @prateekdesai04, @FANGAreNotGnu, @yinweisu, @taoyang1122, @LennartPurucker, @Harry-zzh, @AnirudhDagar, @jaheba, @gradientsky, @melopeo, @ddelange, @tonyhoo, @canerturkmen, @suzhoum

Spotlight

Tabular Performance Enhancements

AutoGluon 1.0 features major enhancements to predictive quality, establishing a new state-of-the-art in Tabular modeling. To the best of our knowledge, AutoGluon 1.0 marks the largest leap forward in the state-of-the-art for tabular data since the original AutoGluon paper from March 2020. The enhancements come primarily from two features: Dynamic stacking to mitigate stacked overfitting, and a new learned model hyperparameters portfolio via Zeroshot-HPO, obtained from the newly released TabRepo ensemble simulation library. Together, they lead to a 75% win-rate compared to AutoGluon 0.8 with faster inference speed, lower disk usage, and higher stability.

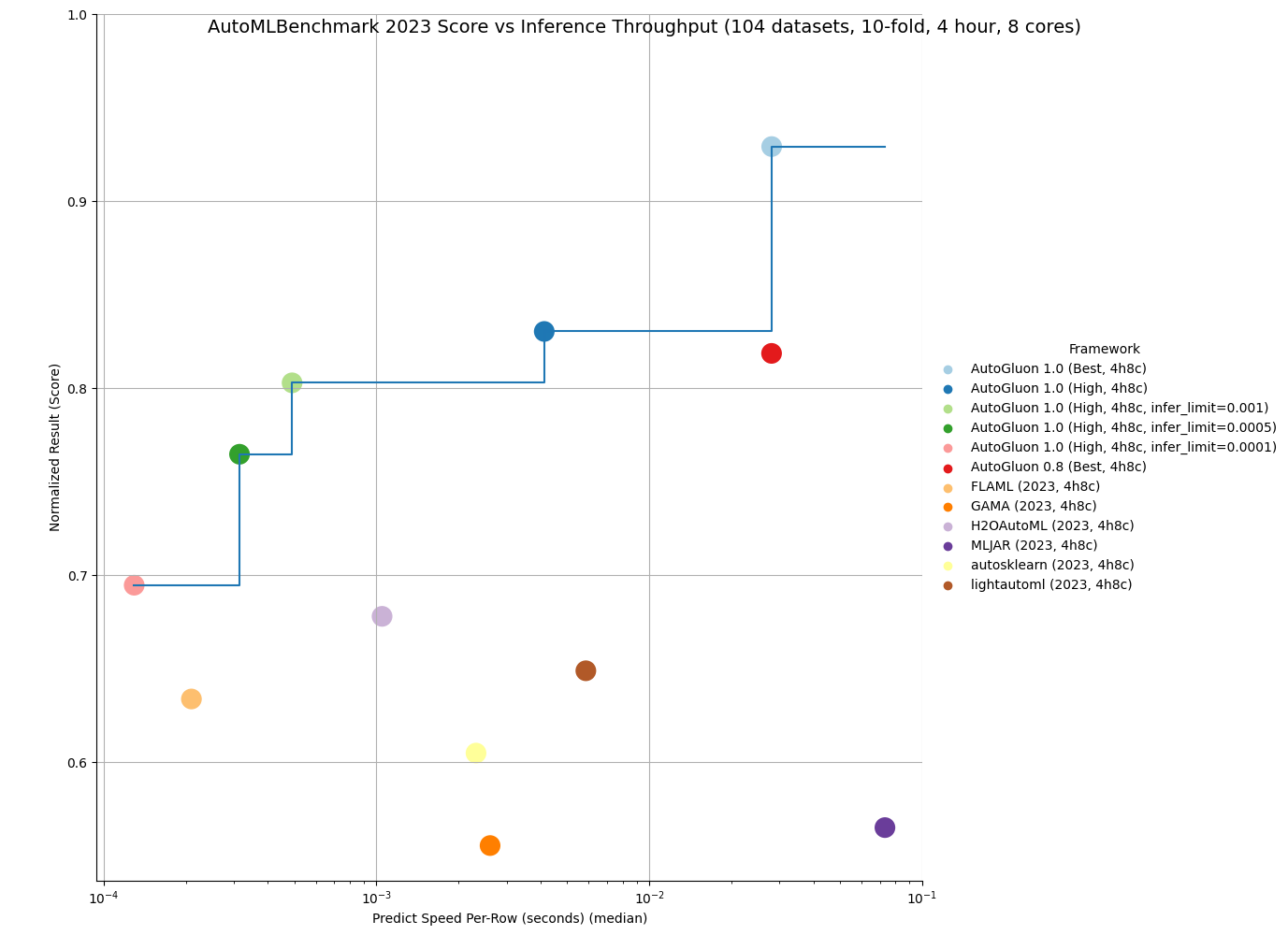

AutoML Benchmark Results

OpenML released the official 2023 AutoML Benchmark results on November 16th, 2023. Their results show AutoGluon 0.8 as the state-of-the-art in AutoML systems across a wide variety of tasks: "Overall, in terms of model performance, AutoGluon consistently has the highest average rank in our benchmark." We now showcase that AutoGluon 1.0 achieves far superior results even to AutoGluon 0.8!

Below is a comparison on the OpenML AutoML Benchmark across 1040 tasks. LightGBM, XGBoost, and CatBoost results were obtained via AutoGluon, and other methods are from the official AutoML Benchmark 2023 results. AutoGluon 1.0 has a 95%+ win-rate against traditional tabular models, including a 99% win-rate vs LightGBM and a 100% win-rate vs XGBoost. AutoGluon 1.0 has between an 82% and 94% win-rate against other AutoML systems. For all methods, AutoGluon is able to achieve >10% average loss improvement (Ex: Going from 90% accuracy to 91% accuracy is a 10% loss improvement). AutoGluon 1.0 achieves first place in 63% of tasks, with lightautoml having the second most at 12% (AutoGluon 0.8 previously took first place 48% of the time). AutoGluon 1.0 even achieves a 7.4% average loss improvement over AutoGluon 0.8!

| Method | AG Winrate | AG Loss Improvement | Rescaled Loss | Rank | Champion |
|---|---|---|---|---|---|
| AutoGluon 1.0 (Best, 4h8c) | - | - | 0.04 | 1.95 | 63% |
| lightautoml (2023, 4h8c) | 84% | 12.0% | 0.2 | 4.78 | 12% |
| H2OAutoML (2023, 4h8c) | 94% | 10.8% | 0.17 | 4.98 | 1% |
| FLAML (2023, 4h8c) | 86% | 16.7% | 0.23 | 5.29 | 5% |
| MLJAR (2023, 4h8c) | 82% | 23.0% | 0.33 | 5.53 | 6% |
| autosklearn (2023, 4h8c) | 91% | 12.5% | 0.22 | 6.07 | 4% |
| GAMA (2023, 4h8c) | 86% | 15.4% | 0.28 | 6.13 | 5% |
| CatBoost (2023, 4h8c) | 95% | 18.2% | 0.28 | 6.89 | 3% |
| TPOT (2023, 4h8c) | 91% | 23.1% | 0.4 | 8.15 | 1% |
| LightGBM (2023, 4h8c) | 99% | 23.6% | 0.4 | 8.95 | 0% |
| XGBoost (2023, 4h8c) | 100% | 24.1% | 0.43 | 9.5 | 0% |
| RandomForest (2023, 4h8c) | 97% | 25.1% | 0.53 | 9.78 | 1% |
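The parenthetical example in the benchmark discussion (going from 90% to 91% accuracy is a 10% loss improvement) can be checked with a few lines of arithmetic. This sketch assumes the loss is simply `1 - accuracy`; the benchmark itself uses task-specific metrics, and the function name is hypothetical.

```python
def loss_improvement(old_accuracy, new_accuracy):
    """Relative reduction in loss, where loss is assumed to be 1 - accuracy."""
    old_loss = 1.0 - old_accuracy
    new_loss = 1.0 - new_accuracy
    if old_loss == 0:
        raise ValueError("old loss is zero, relative improvement is undefined")
    return (old_loss - new_loss) / old_loss


# 90% -> 91% accuracy: the loss drops from 0.10 to 0.09.
print(f"{loss_improvement(0.90, 0.91):.1%}")
```

The same one-point gain in accuracy is a much larger relative improvement when the starting accuracy is already high, which is why the table reports loss improvement rather than raw metric differences.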

Not only is AutoGluon more accurate in 1.0, it is also more stable thanks to our new usage of Ray subprocesses during low-memory training, resulting in 0 task failures on the AutoML Benchmark.

AutoGluon 1.0 is capable of achieving the fastest inference throughput of any AutoML system while still obtaining state-of-the-art results. By specifying the infer_limit fit argument, users can trade off between accuracy and inference speed to meet their needs.

As seen in the below plot, AutoGluon 1.0 sets the Pareto Frontier for quality and inference throughput, achieving Pareto Dominance compared to all other AutoML systems. AutoGluon 1.0 High achieves superior performance to AutoGluon 0.8 Best with 8x faster inference and 8x less disk usage!

You can get more details on the results here.
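To illustrate what setting the Pareto frontier means in this context, the following is a minimal sketch that finds the non-dominated systems among (quality, throughput) pairs. The system names and numbers are invented for illustration and are not benchmark results.

```python
def pareto_frontier(systems):
    """Return the names of systems not dominated in (quality, throughput).

    A system is dominated if another system is at least as good on both
    axes and strictly better on at least one. Higher is better for both.
    """
    frontier = []
    for name, quality, throughput in systems:
        dominated = any(
            q >= quality and t >= throughput and (q > quality or t > throughput)
            for other, q, t in systems
            if other != name
        )
        if not dominated:
            frontier.append(name)
    return frontier


# Hypothetical (name, quality, rows_per_second) triples.
systems = [
    ("A", 0.95, 100.0),
    ("B", 0.90, 800.0),
    ("C", 0.85, 500.0),  # dominated by B: worse quality and lower throughput
    ("D", 0.80, 2000.0),
]

print(pareto_frontier(systems))
```

A system that is on the frontier cannot be beaten on both quality and throughput at once by any single competitor.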

We are excited to see what our users can accomplish with AutoGluon 1.0's enhanced performance.

As always, we will continue to improve AutoGluon in future releases to push the boundaries of AutoML forward for all.

AutoGluon Multimodal (AutoMM) Highlights in One Figure

AutoMM Uniqueness

AutoGluon Multimodal (AutoMM) distinguishes itself from other open-source AutoML toolboxes like AutoSklearn, LightAutoML, H2OAutoML, FLAML, MLJAR, TPOT and GAMA, which mainly focus on tabular data for classification or regression. AutoMM is designed for fine-tuning foundation models across multiple modalities (image, text, tabular, and document), either individually or combined. It offers extensive capabilities for tasks like classification, regression, object detection, named entity recognition, semantic matching, and image segmentation. In contrast, other AutoML systems generally have limited support for image or text, typically using a few pretrained models like EfficientNet or hand-crafted rules like bag-of-words as feature extractors. AutoMM provides a uniquely comprehensive and versatile approach to AutoML, being the only AutoML system to support both flexible multimodality and a wide range of tasks. A comparative table detailing support for various data modalities, tasks, and model types is provided below.

| Data | Task | Model | ||||||||||||

|---|---|---|---|---|---|---|---|---|---|---|---|---|---|---|

| image | text | tabular | document | any combination | classification | regression | object detection | semantic matching | named entity recognition | image segmentation | traditional models | deep learning models | foundation models | |

| LightAutoML | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | ✓ | |||||||

| H2OAutoML | ✓ | ✓ | ✓ | ✓ | ||||||||||

| FLAML | ✓ | ✓ | &ch... |

v0.8.2

Version 0.8.2

v0.8.2 is a hot-fix release that pins the pydantic version to avoid crashes during HPO.

As always, only load previously trained models using the same version of AutoGluon that they were originally trained on.

Loading models trained in different versions of AutoGluon is not supported.

See the full commit change-log here: 0.8.1...0.8.2

This version supports Python versions 3.8, 3.9, and 3.10.

Changes

- codespell: action, config + some typos fixed @yarikoptic @yinweisu (#3323)

- Unpin sentencepiece @zhiqiangdon (#3368)

- Pin pydantic @yinweisu (#3370)

v0.8.1

Version 0.8.1

v0.8.1 is a bug fix release.

As always, only load previously trained models using the same version of AutoGluon that they were originally trained on.

Loading models trained in different versions of AutoGluon is not supported.

See the full commit change-log here: 0.8.0...0.8.1

This version supports Python versions 3.8, 3.9, and 3.10.

Changes

Documentation improvements

- Update google analytics property @gidler (#3330)

- Add Discord Link @Innixma (#3332)

- Add community section to website front page @Innixma (#3333)

- Update Windows Conda install instructions @gidler (#3346)

- Add some missing Colab buttons in tutorials @gidler (#3359)

Bug Fixes / General Improvements

- Move PyMuPDF to optional @Innixma @zhiqiangdon (#3331)

- Remove TIMM in core setup @Innixma (#3334)

- Update persist_models max_memory 0.1 -> 0.4 @Innixma (#3338)

- Lint modules @yinweisu (#3337, #3339, #3344, #3347)

- Remove fairscale @zhiqiangdon (#3342)

- Fix refit crash @Innixma (#3348)

- Fix `DirectTabular` model failing for some metrics; hide warnings produced by `AutoARIMA` @shchur (#3350)

- Pin dependencies @yinweisu (#3358)

- Reduce per gpu batch size for AutoMM high_quality_hpo to avoid out of memory error for some corner cases @zhiqiangdon (#3360)

- Fix HPO crash by setting reuse_actor to False @yinweisu (#3361)

v0.8.0

Version 0.8.0

We're happy to announce the AutoGluon 0.8 release.

Note: Loading models trained in different versions of AutoGluon is not supported.

This release contains 196 commits from 20 contributors!

See the full commit change-log here: 0.7.0...0.8.0

Special thanks to @geoalgo for the joint work in generating the experimental tabular Zeroshot-HPO portfolio this release!

Full Contributor List (ordered by # of commits):

@shchur, @Innixma, @yinweisu, @gradientsky, @FANGAreNotGnu, @zhiqiangdon, @gidler, @liangfu, @tonyhoo, @cheungdaven, @cnpgs, @giswqs, @suzhoum, @yongxinw, @isunli, @jjaeyeon, @xiaochenbin9527, @yzhliu, @jsharpna, @sxjscience

AutoGluon 0.8 supports Python versions 3.8, 3.9, and 3.10.

Changes

Highlights

- AutoGluon TimeSeries introduced several major improvements, including new models, upgraded presets that lead to better forecast accuracy, and optimizations that speed up training & inference.

- AutoGluon Tabular now supports calibrating the decision threshold in binary classification (API), leading to massive improvements in metrics such as `f1` and `balanced_accuracy`. It is not uncommon to see `f1` scores improve from `0.70` to `0.73` as an example. We strongly encourage all users who are using these metrics to try out the new decision threshold calibration logic.

- AutoGluon MultiModal introduces two new features: 1) PDF document classification, and 2) Open Vocabulary Object Detection.

- AutoGluon MultiModal upgraded the presets for object detection, now offering `medium_quality`, `high_quality`, and `best_quality` options. The empirical results demonstrate significant ~20% relative improvements in the mAP (mean Average Precision) metric, using the same preset.

- AutoGluon Tabular has added an experimental Zeroshot HPO config which performs well on small datasets <10000 rows when at least an hour of training time is provided (~60% win-rate vs `best_quality`). To try it out, specify `presets="experimental_zeroshot_hpo_hybrid"` when calling `fit()`.

- AutoGluon EDA added support for Anomaly Detection and Partial Dependence Plots.

- AutoGluon Tabular has added experimental support for TabPFN, a pre-trained tabular transformer model. Try it out via `pip install autogluon.tabular[all,tabpfn]` (hyperparameter key is "TABPFN")! You can also try it out via specifying `presets="experimental_extreme_quality"`.

General

- General doc improvements @tonyhoo @Innixma @yinweisu @gidler @cnpgs @isunli @giswqs (#2940, #2953, #2963, #3007, #3027, #3059, #3068, #3083, #3128, #3129, #3130, #3147, #3174, #3187, #3256, #3258, #3280, #3306, #3307, #3311, #3313)

- General code fixes and improvements @yinweisu @Innixma (#2921, #3078, #3113, #3140, #3206)

- CI improvements @yinweisu @gidler @yzhliu @liangfu @gradientsky (#2965, #3008, #3013, #3020, #3046, #3053, #3108, #3135, #3159, #3283, #3185)

- New AutoGluon Webpage @gidler @shchur (#2924)

- Support sample_weight in RMSE @jjaeyeon (#3052)

- Move AG search space to common @yinweisu (#3192)

- Deprecation utils @yinweisu (#3206, #3209)

- Update namespace packages for PEP420 compatibility @gradientsky (#3228)

Multimodal

AutoGluon MultiModal (also known as AutoMM) introduces two new features: 1) PDF document classification, and 2) Open Vocabulary Object Detection. Additionally, we have upgraded the presets for object detection, now offering medium_quality, high_quality, and best_quality options. The empirical results demonstrate significant ~20% relative improvements in the mAP (mean Average Precision) metric, using the same preset.

New Features

- PDF Document Classification. See tutorial @cheungdaven (#2864, #3043)

- Open Vocabulary Object Detection. See tutorial @FANGAreNotGnu (#3164)

Performance Improvements

- Upgrade the detection engine from mmdet 2.x to mmdet 3.x, and upgrade our presets @FANGAreNotGnu (#3262)

  - `medium_quality`: yolo-s -> yolox-l
  - `high_quality`: yolox-l -> DINO-Res50
  - `best_quality`: yolox-x -> DINO-Swin_l

- Speedup fusion model training with deepspeed strategy. @liangfu (#2932)

- Enable detection backbone freezing to boost finetuning speed and save GPU usage @FANGAreNotGnu (#3220)

Other Enhancements

- Support passing data path to the fit() API @zhiqiangdon (#3006)

- Upgrade TIMM to the latest v0.9.* @zhiqiangdon (#3282)

- Support xywh output for object detection @FANGAreNotGnu (#2948)

- Fusion model inference acceleration with TensorRT @liangfu (#2836, #2987)

- Support customizing advanced image data augmentation. Users can pass a list of torchvision transform objects as image augmentation. @zhiqiangdon (#3022)

- Add yoloxm and yoloxtiny @FANGAreNotGnu (#3038)

- Add MultiImageMix Dataset for Object Detection @FANGAreNotGnu (#3094)

- Support loading specific checkpoints. Users can load the intermediate checkpoints other than model.ckpt and last.ckpt. @zhiqiangdon (#3244)

- Add some predictor properties for model statistics @zhiqiangdon (#3289)

  - `trainable_parameters` returns the number of trainable parameters.
  - `total_parameters` returns the number of total parameters.
  - `model_size` returns the model size measured in megabytes.

Bug Fixes / Code and Doc Improvements

- General bug fixes and improvements @zhiqiangdon @liangfu @cheungdaven @xiaochenbin9527 @Innixma @FANGAreNotGnu @gradientsky @yinweisu @yongxinw (#2939, #2989, #2983, #2998, #3001, #3004, #3006, #3025, #3026, #3048, #3055, #3064, #3070, #3081, #3090, #3103, #3106, #3119, #3155, #3158, #3167, #3180, #3188, #3222, #3261, #3266, #3277, #3279, #3261, #3267)

- General doc improvements @suzhoum (#3295, #3300)

- Remove clip from fusion models @liangfu (#2946)

- Refactor inferring problem type and output shape @zhiqiangdon (#3227)

- Log GPU info including GPU total memory, free memory, GPU card name, and CUDA version during training @zhiqiangdon (#3291)

Tabular

New Features

- Added `calibrate_decision_threshold` (tutorial), which optimizes the decision threshold of predictions for a given metric to strongly enhance the metric score. @Innixma (#3298)

- We've added an experimental Zeroshot HPO config, which performs well on small datasets <10000 rows when at least an hour of training time is provided. To try it out, specify `presets="experimental_zeroshot_hpo_hybrid"` when calling `fit()`. @Innixma @geoalgo (#3312)

- The TabPFN model is now supported as an experimental model. TabPFN is a viable model option when inference speed is not a concern, and the number of rows of training data is less than 10,000. Try it out via `pip install autogluon.tabular[all,tabpfn]`! @Innixma (#3270)

- Backend support for distributed training, which will be available with the next Cloud module release. @yinweisu (#3054, #3110, #3115, #3131, #3142, #3179, #3216)
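The idea behind decision threshold calibration can be sketched without any library: instead of always predicting the positive class when the probability is at least 0.5, search for the cutoff that maximizes the chosen metric on held-out data. The code below is a plain grid search written for illustration, not AutoGluon's implementation; the helper names and the toy data are hypothetical.

```python
def f1_score(y_true, y_pred):
    """F1 for binary labels, with 1 as the positive class."""
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision = tp / (tp + fp)
    recall = tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def calibrate_threshold(y_true, y_proba, metric=f1_score, steps=100):
    """Grid-search the threshold in [0, 1] that maximizes `metric`.

    Starts from the default 0.5 cutoff and only moves away from it
    when a strictly better score is found.
    """
    best_threshold = 0.5
    best_score = metric(y_true, [int(p >= 0.5) for p in y_proba])
    for i in range(steps + 1):
        threshold = i / steps
        y_pred = [int(p >= threshold) for p in y_proba]
        score = metric(y_true, y_pred)
        if score > best_score:
            best_threshold, best_score = threshold, score
    return best_threshold, best_score


# Toy validation labels and predicted positive-class probabilities.
y_true = [0, 0, 0, 0, 0, 0, 1, 1, 1, 1]
y_proba = [0.05, 0.10, 0.15, 0.20, 0.30, 0.45, 0.35, 0.40, 0.60, 0.90]

default_score = f1_score(y_true, [int(p >= 0.5) for p in y_proba])
best_threshold, best_score = calibrate_threshold(y_true, y_proba)
print(f"default F1: {default_score:.3f}")
print(f"calibrated F1: {best_score:.3f} at threshold {best_threshold:.2f}")
```

The threshold should be chosen on validation data rather than on the test set, otherwise the reported score will be optimistic.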

Performance Improvements

Other Enhancements

- Add quantile regression support for CatBoost @shchur (#3165)

- Implement quantile regression for LGBModel @shchur (#3168)

- Log to file support @yinweisu (#3232)

- Add support for `included_model_types` @yinweisu (#3239)

- Add enable_categorical=True support to XGBoost @Innixma (#3286)

Bug Fixes / Code and Doc Improvements

- Cross-OS loading of a fit TabularPredictor should now work properly @yinweisu @Innixma

- General bug fixes and improvements @Innixma @cnpgs @shchur @yinweisu @gradientsky (#2865, #2936, #2990, #3045, #3060, #3069, #3148, #3182, #3199, #3226, #3257, #3259, #3268, #3269, #3287, #3288, #3285, #3293, #3294, #3302)

- Move interpretable logic to InterpretableTabularPredictor @Innixma (#2981)

- Enhance drop_duplicates, enable by default @Innixma (#3010)

- Refactor params_aux & memory checks @Innixma (#3033)

- Raise regression `pred_proba` @Innixma (#3240)

TimeSeries

In v0.8 we introduce several major improvements to the Time Series module, including new models, upgraded presets that lead to better forecast accuracy, and optimizations that speed up training & inference.

Highlights

- New models: `PatchTST` and `DLinear` from GluonTS, and `RecursiveTabular` based on integration with the `mlforecast` library @shchur (#3177, #3184, #3230)

- Improved accuracy and reduced overall training time thanks to updated presets @shchur (#3281, #3120)

- 3-6x faster training and inference for `AutoARIMA`, `AutoETS`, `Theta`, `DirectTabular`, `WeightedEnsemble` models @shchur (#3062, #3214, #3252)

New Features

- Dramatically faster repeated calls to `predict...

v0.7.0

Version 0.7.0

We're happy to announce the AutoGluon 0.7 release. This release contains a new experimental module `autogluon.eda` for exploratory data analysis. AutoGluon 0.7 offers conda-forge support, enhancements to Tabular, MultiModal, and Time Series modules, and many quality of life improvements and fixes.

As always, only load previously trained models using the same version of AutoGluon that they were originally trained on.

Loading models trained in different versions of AutoGluon is not supported.

This release contains 170 commits from 19 contributors!

See the full commit change-log here: v0.6.2...v0.7.0

Special thanks to @MountPOTATO who is a first time contributor to AutoGluon this release!

Full Contributor List (ordered by # of commits):

@Innixma, @zhiqiangdon, @yinweisu, @gradientsky, @shchur, @sxjscience, @FANGAreNotGnu, @yongxinw, @cheungdaven, @liangfu, @tonyhoo, @bryanyzhu, @suzhoum, @canerturkmen, @giswqs, @gidler, @yzhliu, @Linuxdex and @MountPOTATO

AutoGluon 0.7 supports Python versions 3.8, 3.9, and 3.10. Python 3.7 is no longer supported as of this release.

Changes

NEW: AutoGluon available on conda-forge

As of AutoGluon 0.7 release, AutoGluon is now available on conda-forge (#612)!

Kudos to the following individuals for making this happen:

- @giswqs for leading the entire effort and being a 1-man army driving this forward.

- @h-vetinari for providing excellent advice for working with conda-forge and some truly exceptional feedback.

- @arturdaraujo, @PertuyF, @ngam and @priyanga24 for their encouragement, suggestions, and feedback.

- The conda-forge team for their prompt and effective reviews of our (many) PRs.

- @gradientsky for testing M1 support during the early stages.

- @sxjscience, @zhiqiangdon, @canerturkmen, @shchur, and @Innixma for helping upgrade our downstream dependency versions to be compatible with conda.

- Everyone else who has supported this process either directly or indirectly.

NEW: autogluon.eda (Exploratory Data Analysis)

We are happy to announce AutoGluon Exploratory Data Analysis (EDA) toolkit. Starting with v0.7, AutoGluon now can analyze and visualize different aspects of data and models. We invite you to explore the following tutorials: Quick Fit, Dataset Overview, Target Variable Analysis, Covariate Shift Analysis. Other materials can be found in EDA Section of the website.

General

- Added Python 3.10 support. @Innixma (#2721)

- Dropped Python 3.7 support. @Innixma (#2722)

- Removed `dask` and `distributed` dependencies. @Innixma (#2691)

- Removed `autogluon.text` and `autogluon.vision` modules. We recommend using `autogluon.multimodal` for text and vision tasks going forward.

AutoMM

AutoGluon MultiModal (a.k.a. AutoMM) supports three new features: 1) document classification; 2) named entity recognition for the Chinese language; 3) few-shot learning with SVM.

Meanwhile, we removed `autogluon.text` and `autogluon.vision`, as these features are supported in `autogluon.multimodal`.

New features

- Document Classification

- NER for Chinese Language

  - Support Chinese named entity recognition

  - See tutorials

  - Contributors and commits: @cheungdaven (#2676, #2709)

- Few Shot Learning with SVM

Other Enhancements

- Add new loss function `FocalLoss`. @yongxinw (#2860)

- Add matcher realtime inference support. @zhiqiangdon (#2613)

- Add matcher HPO. @zhiqiangdon (#2619)

- Add YOLOX models (small, large, and x-large) and update presets for object detection. @FANGAreNotGnu (#2644, #2867, #2927, #2933)

- Add AutoMM presets. @zhiqiangdon (#2620, #2749, #2839)

- Add model dump for models from HuggingFace, timm and mmdet. @suzhoum @FANGAreNotGnu @liangfu (#2682, #2700, #2737, #2840)

- Bug fix / refactor for NER. @cheungdaven (#2659, #2696, #2759, #2773)

- MultiModalPredictor import time reduction. @sxjscience (#2718)

Bug Fixes / Code and Doc Improvements

- NER example with visualization. @sxjscience (#2698)

- Bug fixes / Code and Doc Improvements. @sxjscience @tonyhoo @giswqs (#2708, #2714, #2739, #2782, #2787, #2857, #2818, #2858, #2859, #2891, #2918, #2940, #2906, #2907)

- Support of Label-Studio file export in AutoMM and added examples. @MountPOTATO (#2615)

- Added example of few-shot memory bank model with feature extraction based on Tip-adapter. @Linuxdex (#2822)

Deprecations

- `autogluon.vision` namespace is deprecated. @bryanyzhu (#2790, #2819, #2832)

- `autogluon.text` namespace is deprecated. @sxjscience @Innixma (#2695, #2847)

Tabular

- TabularPredictor’s inference speed has been heavily optimized, with an average 250% speedup for real-time inference. This means that TabularPredictor can satisfy <10 ms end-to-end latency on many datasets when using `infer_limit`, and the `high_quality` preset can satisfy <100 ms end-to-end latency on many datasets by default.

- TabularPredictor’s `"multimodal"` hyperparameter preset now leverages the full capabilities of MultiModalPredictor, resulting in stronger performance on datasets containing a mix of tabular, image, and text features.

Performance Improvements

- Upgraded versions of all dependency packages to use the latest releases. @Innixma (#2823, #2829, #2834, #2887, #2915)

- Accelerated ensemble inference speed by 150% by removing TorchThreadManager context switching. @liangfu (#2472)

- Accelerated FastAI neural network inference speed by 100x+ and training speed by 10x on datasets with many features. @Innixma (#2909)

- (From 0.6.1) Avoid unnecessary DataFrame copies to accelerate feature preprocessing by 25%. @liangfu (#2532)

- (From 0.6.1) Refactor `NN_TORCH` model to be dataset iterable, leading to a 100% inference speedup. @liangfu (#2395)

- MultiModalPredictor is now used as a member of the ensemble when `TabularPredictor.fit` is passed `hyperparameters="multimodal"`. @Innixma (#2890)

API Enhancements

- Added `predict_multi` and `predict_proba_multi` methods to `TabularPredictor` to efficiently get predictions from multiple models. @Innixma (#2727)

- Allow label column to not be present in `leaderboard` calls when scoring is disabled. @Innixma (#2912)

Deprecations

- Added a deprecation warning when calling `predict_proba` with `problem_type="regression"`. This will raise an exception in a future release. @Innixma (#2684)

Bug Fixes / Doc Improvements

- Fixed incorrect time_limit estimation in `NN_TORCH` model. @Innixma (#2909)

- Fixed error when fitting with only text features. @Innixma (#2705)

- Fixed error when `calibrate=True, use_bag_holdout=True` in `TabularPredictor.fit`. @Innixma (#2715)

- Fixed error when tuning `n_estimators` with RandomForest / ExtraTrees models. @Innixma (#2735)

- Fixed missing onnxruntime dependency on Linux/MacOS when installing optional dependency `skl2onnx`. @liangfu (#2923)

- Fixed edge-case RandomForest error on Windows. @yinweisu (#2851)

- Added improved logging for `refit_full`. @Innixma (#2913)

- Added `compile_models` to the deployment tutorial. @liangfu (#2717)

- Various internal code refactoring. @Innixma (#2744, #2887)

- Various doc and logging improvements. @Innixma (#2668)

autogluon.timeseries

New features

TimeSeriesPredictornow supports past covariates (a.k.a.dynamic features or related time series which is not known for time steps to be predicted). @shchur (#2665, #2680)- New models from StatsForecast got introduced in

TimeSeriesPredictorfor various presets (medium_quality,high_qualityandbest_quality). @shchur (#2758) - Support missing value imputation for TimeSeriesDataFrame which allows users to customize filling logics for missing values and fill gaps in an irregular sampled times series. @shchur (#2781)

- Improve quantile forecasting performance of the AutoGluon-Tabular forecaster using the empirical noise distribution. @shchur (#2740)
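The last item mentions the empirical noise distribution. The general idea can be sketched as follows: collect the residuals (actual minus predicted) of a point forecaster on held-out data, then shift each point forecast by the empirical quantiles of those residuals. This is an illustration of the technique under simplifying assumptions (residuals shared across all horizons), not AutoGluon's implementation; all names are hypothetical.

```python
from typing import Dict, List, Sequence


def empirical_quantile(sorted_values: Sequence[float], q: float) -> float:
    """Linearly interpolated quantile of an already sorted, non-empty sample."""
    if not sorted_values:
        raise ValueError("need at least one residual")
    pos = q * (len(sorted_values) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(sorted_values) - 1)
    frac = pos - lo
    return sorted_values[lo] * (1 - frac) + sorted_values[hi] * frac


def quantile_forecast(
    point_forecast: Sequence[float],
    residuals: Sequence[float],
    quantile_levels: Sequence[float],
) -> Dict[float, List[float]]:
    """Shift each point forecast by the empirical residual quantile."""
    ordered = sorted(residuals)
    return {
        q: [y + empirical_quantile(ordered, q) for y in point_forecast]
        for q in quantile_levels
    }


# Residuals observed on validation data (made-up numbers for the sketch).
validation_residuals = [-2.0, -1.0, 0.0, 1.0, 2.0]
forecast = quantile_forecast([10.0, 12.0], validation_residuals, [0.1, 0.5, 0.9])
```

Because the quantiles come from observed errors rather than an assumed Gaussian, skewed or heavy-tailed noise is reflected directly in the prediction intervals.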

Bug Fixes / Doc Improvements

v0.6.2

Version 0.6.2

v0.6.2 is a security and bug fix release.

As always, only load previously trained models using the same version of AutoGluon that they were originally trained on.

Loading models trained in different versions of AutoGluon is not supported.

See the full commit change-log here: v0.6.1...v0.6.2

Special thanks to @daikikatsuragawa and @yzhliu who were first time contributors to AutoGluon this release!

This version supports Python versions 3.7 to 3.9. 0.6.x are the last releases that will support Python 3.7.

Changes

Documentation improvements

- Ray usage FAQ (#2559) - @yinweisu

- Fix missing Predictor API doc (#2573) - @gidler

- 2023 Roadmap Update (#2590) - @Innixma

- Image classification tutorial update for bytearray (#2598) - @suzhoum

- Fix broken tutorial index links (#2617) - @shchur

- Improve timeseries quickstart tutorial (#2653) - @shchur

Bug Fixes / Security

- [multimodal] Refactoring and bug fixes (#2554, #2541, #2477, #2569, #2578, #2613, #2620, #2630, #2633, #2635, #2647, #2645, #2652, #2659) - @zhiqiangdon, @yongxinw, @FANGAreNotGnu, @sxjscience, @Innixma

- [multimodal] Support of named entity recognition (#2556) - @cheungdaven

- [multimodal] bytearray support for image modality (#2495) - @suzhoum

- [multimodal] Support HPO for matcher (#2619) - @zhiqiangdon

- [multimodal] Support Onnx export for timm image model (#2564) - @liangfu

- [tabular] Refactoring and bug fixes (#2387, #2595, #2599, #2589, #2628, #2376, #2642, #2646, #2650, #2657) - @Innixma, @liangfu, @yzhliu, @daikikatsuragawa, @yinweisu

- [tabular] Fix ensemble folding (#2582) - @yinweisu

- [tabular] Convert ColumnTransformer in tabular NN from sklearn to onnx (#2503) - @liangfu

- [tabular] Throw error on non-finite values in label column (#2509) - @gidler

- [timeseries] Refactoring and bug fixes (#2584, #2594, #2605, #2606) - @shchur

- [timeseries] Speed up data preparation for local models (#2587) - @shchur

- [timeseries] Speed up prediction for GluonTS models (#2593) - @shchur

- [timeseries] Speed up the train/val splitter (#2586) - @shchur

- [timeseries] Speed up TimeSeriesEnsembleSelection.fit (#2602) - @shchur

- [security] Update torch (#2588) - @gradientsky
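One of the tabular changes above makes non-finite values in the label column an error. A check of that kind can be sketched in plain Python as below. The function name and error message are hypothetical, and this is not the code from the referenced pull request.

```python
import math
from typing import Iterable


def check_label_is_finite(labels: Iterable[object], label_name: str = "label") -> None:
    """Raise ValueError if any float label is NaN or +/-inf."""
    bad = [
        i
        for i, value in enumerate(labels)
        # Only floats can be NaN/inf; ints and strings pass through untouched.
        if isinstance(value, float) and not math.isfinite(value)
    ]
    if bad:
        raise ValueError(
            f"{len(bad)} non-finite value(s) in column '{label_name}' "
            f"at positions {bad[:5]}"
        )
```

Failing fast at this point gives a clear message up front, instead of an obscure numerical error from a model later in training.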

v0.6.1

Version 0.6.1

v0.6.1 is a security fix / bug fix release.

As always, only load previously trained models using the same version of AutoGluon that they were originally trained on.

Loading models trained in different versions of AutoGluon is not supported.

See the full commit change-log here: v0.6.0...v0.6.1

Special thanks to @lvwerra, who is a first-time contributor to AutoGluon this release!

This version supports Python versions 3.7 to 3.9. 0.6.x are the last releases that will support Python 3.7.

Changes

Documentation improvements

- Fix object detection tutorial layout (#2450) - @bryanyzhu

- Add multimodal cheatsheet (#2467) - @sxjscience

- Refactoring detection inference quickstart and bug fix on fit->predict - @yongxinw, @zhiqiangdon, @Innixma, @BingzhaoZhu, @tonyhoo

- Use Pothole Dataset in Tutorial for AutoMM Detection (#2468) - @FANGAreNotGnu

- add time series cheat sheet, add time series to doc titles (#2478) - @canerturkmen

- Update all repo references to autogluon/autogluon (#2463) - @gidler

- fix typo in object detection tutorial CI (#2516) - @tonyhoo

Bug Fixes / Security

- bump evaluate to 0.3.0 (#2433) - @lvwerra

- Add finetune/eval tests for AutoMM detection (#2441) - @FANGAreNotGnu

- Adding Joint IA3_LoRA as efficient finetuning strategy (#2451) - @Raldir

- Fix AutoMM warnings about object detection (#2458) - @zhiqiangdon

- [Tabular] Speed up feature transform in tabular NN model (#2442) - @liangfu

- fix matcher cpu inference bug (#2461) - @sxjscience

- [timeseries] Silence GluonTS JSON warning (#2454) - @shchur

- [timeseries] Fix pandas groupby bug + GluonTS index bug (#2420) - @shchur

- Simplified infer speed throughput calculation (#2465) - @Innixma

- [Tabular] make tabular nn dataset iterable (#2395) - @liangfu

- Remove old images and dataset download scripts (#2471) - @Innixma

- Support image bytearray in AutoMM (#2490) - @suzhoum

- [NER] add an NER visualizer (#2500) - @cheungdaven

- [Cloud] Lazy load TextPredictor and ImagePredictor which will be deprecated (#2517) - @tonyhoo

- Use detectron2 visualizer and update quickstart (#2502) - @yongxinw, @zhiqiangdon, @Innixma, @BingzhaoZhu, @tonyhoo

- fix df preprocessor properties (#2512) - @zhiqiangdon

- [timeseries] Fix info and fit_summary for TimeSeriesPredictor (#2510) - @shchur

- [timeseries] Pass known_covariates to component models of the WeightedEnsemble - @shchur

- [timeseries] Gracefully handle inconsistencies in static_features provided by user - @shchur

- [security] update Pillow to >=9.3.0 (#2519) - @gradientsky

- [CI] upgrade codeql v1 to v2 as v1 will be deprecated (#2528) - @tonyhoo

- Upgrade scikit-learn-intelex version (#2466) - @Innixma

- Save AutoGluonTabular model to the correct folder (#2530) - @shchur

- support predicting with model fitted on v0.5.1 (#2531) - @liangfu

- [timeseries] Implement input validation for TimeSeriesPredictor and improve debug messages - @shchur

- [timeseries] Ensure that timestamps are sorted when creating a TimeSeriesDataFrame - @shchur

- Add tests for preprocessing mutation (#2540) - @Innixma

- Fix timezone datetime edgecase (#2538) - @Innixma, @gradientsky

- Mmdet Fix Image Identifier (#2492) - @FANGAreNotGnu

- [timeseries] Warn if provided data has a frequency that is not supported - @shchur

- Train and inference with different image data types (#2535) - @suzhoum

- Remove pycocotools (#2548) - @bryanyzhu

- avoid copying identical dataframes (#2532) - @liangfu

- Fix AutoMM Tokenizer (#2550) - @FANGAreNotGnu

- [Tabular] Resource Allocation Fix (#2536) - @yinweisu

- imodels version cap (#2557) - @yinweisu

- Fix int32/int64 difference between windows and other platforms; fix mutation issue (#2558) - @gradientsky
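Several of the timeseries items above concern input validation, including ensuring that timestamps are sorted. As a small illustration of that idea, the sketch below orders long-format rows by series and then by timestamp. The row layout and function names are assumptions for the example and do not reflect how `TimeSeriesDataFrame` is implemented.

```python
from datetime import datetime
from typing import List, Tuple

# Hypothetical long-format row: (item_id, timestamp, target).
Row = Tuple[str, datetime, float]


def is_sorted_per_item(rows: List[Row]) -> bool:
    """True if rows are ordered by item_id, then by timestamp."""
    keys = [(item_id, ts) for item_id, ts, _ in rows]
    return all(a <= b for a, b in zip(keys, keys[1:]))


def sort_per_item(rows: List[Row]) -> List[Row]:
    """Return a new list ordered by item_id, then timestamp (stable sort)."""
    return sorted(rows, key=lambda row: (row[0], row[1]))


raw_rows: List[Row] = [
    ("B", datetime(2023, 1, 2), 5.0),
    ("A", datetime(2023, 1, 3), 2.0),
    ("A", datetime(2023, 1, 1), 1.0),
    ("B", datetime(2023, 1, 1), 4.0),
]
clean_rows = sort_per_item(raw_rows)
```

Checking with `is_sorted_per_item` first avoids re-sorting data that is already in order.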

v0.5.3

Version 0.5.3

v0.5.3 is a security hotfix release.

This release is non-breaking when upgrading from v0.5.0. As always, only load previously trained models using the same version of AutoGluon that they were originally trained on. Loading models trained in different versions of AutoGluon is not supported.

See the full commit change-log here: v0.5.2...v0.5.3

This version supports Python versions 3.7 to 3.9.