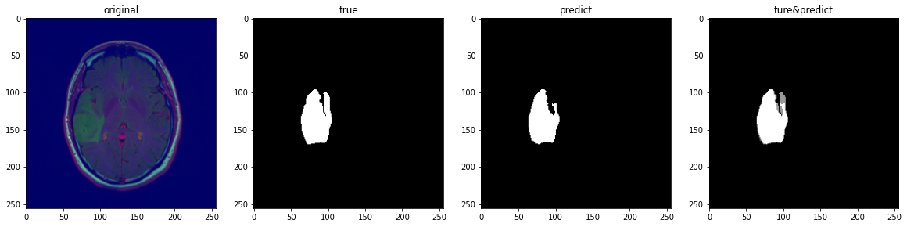

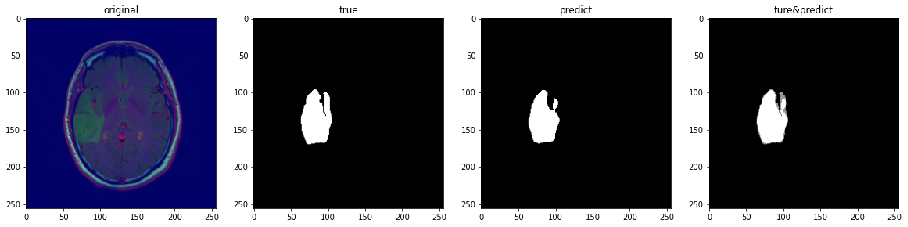

In this work, four popular deep convolutional neural networks for image segmentation (U-Net, DeepLab, FCN, and SegNet) are built and compared. The comparison reveals the tradeoff between segmentation efficiency and segmentation accuracy. Using deep learning, specifically convolutional neural networks, we build and train models that identify brain lesions in MRI images. Once a model could identify brain lesions, we improved it by adjusting the model structure, applying data augmentation, and searching for optimal hyperparameters. We also describe the implementation details and the evaluation criteria used to measure the improvement in segmentation performance over the original model.

- We use the TCIA Brain MRI segmentation dataset, which is provided by The Cancer Imaging Archive (TCIA).

- 110 patients' brain MRI images, 3929 images in total

- Correctly labeled

- The data is not published here for privacy reasons. You can get the dataset from Kaggle.

- Pixel Accuracy

- MIoU (mean Intersection over Union)

TP: True positive

FP: False positive

TN: True negative

FN: False negative

- Dice Coefficient
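The three metrics above can all be derived from the TP, FP, TN, and FN counts of a predicted binary mask against its ground-truth mask. The following is a minimal pure-Python sketch, not the project's actual evaluation code. The function names are hypothetical, masks are assumed to be flat sequences of 0/1 values, MIoU is assumed to be the mean of the lesion IoU and the background IoU, and returning 1.0 when a class is absent from both masks is an assumed convention.

```python
def confusion_counts(pred, target):
    """Count TP, FP, TN, FN for two equally sized binary masks (flat 0/1 sequences)."""
    if len(pred) != len(target):
        raise ValueError("pred and target must have the same length")
    tp = fp = tn = fn = 0
    for p, t in zip(pred, target):
        if p and t:
            tp += 1
        elif p and not t:
            fp += 1
        elif not p and t:
            fn += 1
        else:
            tn += 1
    return tp, fp, tn, fn


def pixel_accuracy(tp, fp, tn, fn):
    """Fraction of pixels classified correctly: (TP + TN) / all pixels."""
    total = tp + fp + tn + fn
    return (tp + tn) / total if total else 0.0


def iou(tp, fp, fn):
    """Intersection over Union for one class: TP / (TP + FP + FN)."""
    denom = tp + fp + fn
    # Assumed convention: a class absent from both masks scores 1.0.
    return tp / denom if denom else 1.0


def dice(tp, fp, fn):
    """Dice coefficient for one class: 2TP / (2TP + FP + FN)."""
    denom = 2 * tp + fp + fn
    # Same assumed convention for the empty case as in iou().
    return 2 * tp / denom if denom else 1.0


def mean_iou(tp, fp, tn, fn):
    """Mean of foreground IoU and background IoU (two-class MIoU)."""
    foreground = iou(tp, fp, fn)
    # For the background class, TN plays the role of TP, and FP/FN swap.
    background = iou(tn, fn, fp)
    return (foreground + background) / 2


# Toy example with 8 pixels (made-up values, not from the dataset).
pred = [1, 1, 0, 0, 1, 0, 0, 0]
target = [1, 0, 0, 0, 1, 1, 0, 0]
tp, fp, tn, fn = confusion_counts(pred, target)
print("pixel accuracy:", pixel_accuracy(tp, fp, tn, fn))
print("IoU:", iou(tp, fp, fn))
print("MIoU:", mean_iou(tp, fp, tn, fn))
print("Dice:", dice(tp, fp, fn))
```

Because lesions usually cover only a small part of each MRI slice, pixel accuracy can stay high even when the lesion is missed entirely, which is why IoU and Dice are reported alongside it.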

- Fully Convolutional Networks (FCN)

- U-Net

- SegNet

- DeepLab V3

- ResNet skip connections

- Transfer learning

- Dilated Convolution
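Dilated (atrous) convolution, the last item above, inserts gaps between kernel taps so that the receptive field grows without adding parameters. The sketch below illustrates the idea in one dimension using pure Python. It is an illustration under simplifying assumptions, not the 2D layers used in the segmentation models, and the function names are hypothetical.

```python
def effective_kernel_size(kernel_size, dilation):
    """Span of input positions covered by a dilated kernel."""
    return (kernel_size - 1) * dilation + 1


def dilated_conv1d(signal, kernel, dilation=1):
    """'Valid' 1D cross-correlation with `dilation - 1` skipped inputs between taps."""
    if dilation < 1:
        raise ValueError("dilation must be >= 1")
    span = effective_kernel_size(len(kernel), dilation)
    output = []
    # Slide the dilated kernel over every position where it fits entirely.
    for start in range(len(signal) - span + 1):
        value = sum(
            weight * signal[start + i * dilation]
            for i, weight in enumerate(kernel)
        )
        output.append(value)
    return output


signal = [1, 2, 3, 4, 5, 6, 7]
kernel = [1, 0, -1]  # simple difference filter with 3 weights

# dilation=1 is an ordinary convolution covering 3 inputs per output.
print(dilated_conv1d(signal, kernel, dilation=1))
# dilation=2 uses the same 3 weights but covers 5 inputs per output.
print(dilated_conv1d(signal, kernel, dilation=2))
```

With the same three weights, a dilation of 2 widens the covered span from 3 to 5 inputs, which is how dilated convolutions capture more context without downsampling the feature map.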