-

Bug description

My Next.js app is deployed to Vercel and uses a lambda route for a GraphQL server (apollo-server-micro) that is configured with Prisma + Nexus. Lambda cold starts on Vercel lead to slow queries that take approximately 7 seconds. I see the 7 seconds on the private deploy with project name "blogody". A typical cold start signature looks as follows:

x-vercel-id | cdg1::iad1::28qc9-1622127003386-1cc4995040e3

As I cannot share this repo publicly, I made a smaller example that still shows smaller but significant cold start times of approximately 2.5 seconds. I have not managed to find the influencing factors and I hope Vercel can shed some light on it.

Here is the deploy output for the serverless functions:

I see the cold starts after approx. 10 minutes of inactivity, but that could vary. I put some simple timestamps into the app, both on the client and the server. From those timestamps, you can see that in the case of a cold start the total query time is governed by the waiting time between query initiation and endpoint function invocation (

Some screenshots from the example:

How to reproduce

Expected behavior

I know that cold starts cannot be fully eliminated, but cold start times of 2-7 seconds are a problem for me. I can accept a cold start time of roughly 1 second. Thus, I expect the following help from this issue:

I will also open an issue with @prisma to see if the issue is amplified by that stack.

Additional information

You can find

I'd be happy to provide more information if needed.
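A minimal sketch of the timestamp measurement described in the bug description: with one client-side timestamp taken when the query is sent, server-side timestamps at handler entry and exit, and a client-side timestamp when the response arrives, the waiting time before invocation falls out by subtraction. The field names and the `splitRequestTime` helper are hypothetical and not taken from the example repo, and the sketch assumes the client and server clocks are roughly in sync.

```typescript
// Hypothetical timestamps in milliseconds; the names are illustrative only.
interface RequestTimestamps {
  clientSent: number;     // client: just before the query is sent
  handlerStart: number;   // server: first line of the API handler
  handlerEnd: number;     // server: just before the response is returned
  clientReceived: number; // client: when the response arrives
}

interface TimeBreakdown {
  waitBeforeInvocation: number; // network latency plus any cold start
  handlerDuration: number;      // time spent inside the handler itself
  returnTrip: number;           // time for the response to reach the client
  total: number;                // what the user actually experiences
}

function splitRequestTime(t: RequestTimestamps): TimeBreakdown {
  return {
    waitBeforeInvocation: t.handlerStart - t.clientSent,
    handlerDuration: t.handlerEnd - t.handlerStart,
    returnTrip: t.clientReceived - t.handlerEnd,
    total: t.clientReceived - t.clientSent,
  };
}

// Made-up numbers for illustration, not measurements from this thread.
const coldExample = splitRequestTime({
  clientSent: 0,
  handlerStart: 2300,
  handlerEnd: 2350,
  clientReceived: 2500,
});
```

If `waitBeforeInvocation` dominates `total` while `handlerDuration` stays small, the time is being spent before the handler runs rather than in resolver or database code.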


Replies: 39 comments 35 replies

-


@styxlab The issue you experienced is caused by the database (in this case Nexus) not being optimised for serverless connections. Database connections cannot be shared between serverless invocations across cold boots. Therefore, each time your serverless function is called (while cold), a new database connection will need to be established. You can get around this by adding poolers between your database and the serverless function or by switching to a serverless-friendly database. You can find more information about this over at https://vercel.com/docs/solutions/databases#connecting-to-your-database.

-

Thanks @williamli for looking into this issue. Unfortunately, connection pooling cannot explain the issue; I ruled that out already. Why? Because I took the database out of the example: there is not a single call to a database! In the example I simply return mocked data in the GraphQL resolver, which is where a real-world example would make a request to the database. Nexus is not a database; it is a GraphQL schema generator, and Prisma is an ORM or model mapper. I include them in the example because they have an influence on the problem (maybe through lambda function bundle size, I don't know).

-

Just for the record, I enhanced the reproduction example with

-

Here are some additional findings:

As Vercel infra is basically a black box, I would very much appreciate some more insight into what determines the cold starts and what can be done to reduce them (both in user land and on Vercel's side). It would also be interesting to know why warming does not help in all cases.

-

If you develop with plain AWS, you can significantly decrease cold start time by increasing the function memory size (which also gives you more virtual CPU cores). I think you can also change the memory setting in Vercel.


-

@styxlab I'm having this issue as well; my backend uses Prisma + Nexus + Vercel. I'm using connection pooling, so I know it's not what @williamli mentioned in his comment. Have you made any more progress on this issue?

-

@nhuesmann This is still an unsolved problem for me, and that's why I still run my API endpoints on a DigitalOcean droplet (everything else on Vercel). The best I could do with Vercel lambda was to call the endpoints every 3-4 minutes (warming), but that didn't reliably help all the time. It's also difficult to test that from different regions. I am planning to write an in-depth blog article about my findings but do not yet know when I will have the time for this.
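For readers who want to try the warming approach, here is a rough sketch of a script that pings a list of endpoints on a fixed interval. The URL, the interval, and the helper names (`warmOnce`, `startWarming`) are placeholders, not anything from the setup described above; it assumes a runtime with a global `fetch` (for example Node 18+). As noted, warming did not reliably prevent cold starts, so treat this as an experiment rather than a fix.

```typescript
// Placeholder endpoint list and interval; adjust to your own deployment.
const WARM_URLS: string[] = ["https://example.com/api/graphql"];
const WARM_INTERVAL_MS = 3 * 60 * 1000; // every 3 minutes

// A ping resolves to the HTTP status code of the response.
type Ping = (url: string) => Promise<number>;

// Default ping: plain GET using the global fetch.
const httpPing: Ping = async (url) => {
  const res = await fetch(url);
  return res.status;
};

interface PingResult {
  url: string;
  status: number | null; // null means the request itself failed
  elapsedMs: number;     // a large value hints at a cold start
}

// Ping every URL once, in parallel, and never throw.
async function warmOnce(urls: string[], ping: Ping = httpPing): Promise<PingResult[]> {
  return Promise.all(
    urls.map(async (url): Promise<PingResult> => {
      const started = Date.now();
      try {
        const status = await ping(url);
        return { url, status, elapsedMs: Date.now() - started };
      } catch {
        return { url, status: null, elapsedMs: Date.now() - started };
      }
    })
  );
}

// Ping immediately, then repeat on the interval. Returns the timer handle
// so the caller can stop it with clearInterval.
function startWarming(
  urls: string[] = WARM_URLS,
  intervalMs: number = WARM_INTERVAL_MS
): ReturnType<typeof setInterval> {
  void warmOnce(urls);
  return setInterval(() => {
    void warmOnce(urls);
  }, intervalMs);
}
```

Calling `startWarming()` from a long-running process (or `warmOnce` from a scheduled job) is enough to try it out. Any HTTP response, even an error status, means the function was invoked, which is why the sketch records the status instead of treating non-200 responses as failures.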

-

We (https://github.com/gooditcollective) are quite interested in this as well, since we build all of our clients' projects on Vercel. Specifically, we use a GraphQL function on the plain Node.js Vercel environment, made with Apollo Server. Even a completely minimal solution (with no external connections to databases and similar things, with no extra code dependencies, just plain Apollo Server initialisation and a single HTTP handler made with micro) boots up in about 1.5-2 seconds. We'd love to find a way to make it reasonable (I guess 200-500 ms would already be satisfactory). Are there any suggestions or ideas from Vercel's team or the community? We'll try limiting function memory size, but I reckon that will have a small effect, if any. Rewarming is something that we will also do, but this feels like a broken solution and is unreliable. Is there anything we can try?

-

@neoromantic I don't want to get in the way of a reply from @vercel, but it's good to see you are reporting figures that correspond very well with my own observations (I am also using apollo-micro and tested with empty resolvers, no db connection). I am also very interested in moving my temporary solution (GraphQL API endpoints on DO) back to Vercel, but the performance difference is really huge, as I am getting <~ 100 ms consistently without worrying about cold startups. I am a bit puzzled as to why this topic does not get more attention; it seems to me that all apps using serverless functions would run into this issue sooner or later. In any case, a real solution would probably have to come from AWS, so maybe it's better addressed there?


-

If I remember correctly, Vercel's position and strategy is that their platform is very much cache-oriented. So the main use case for Vercel is not hosting real-time API functions, but generating a response and caching it so it can be delivered statically. Personally, I want to consider solutions like fly.io, which allows a multi-zone setup for a GraphQL server with a Redis cache backend, for example. But I've used Vercel (called Zeit then) since the very first versions, and I adore their ideology and wonderful support, so I'm very hopeful that we will at least get an understanding of how to manage cold boot times.

-

I am experiencing 10s+ cold starts with a 255 kB function; it is quite a deal breaker.

-

@timuric This sounds a bit high. With a 255 kB function I would expect cold start times of ~1 second. Did you make the following checks?

I missed the latter check initially, which is why I ended up with ~7 seconds: the individual cold starts accumulated. Once you understand your access pattern, you can optimize. However, the barrier of ~1 second remains, which is still a big issue for me.
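To make the accumulation concrete, here is a rough model. It assumes each function on a request path pays a fixed cold start penalty: functions called one after another add up their penalties, while functions called in parallel only cost as much as the slowest one. The function names and numbers are illustrative, not measurements.

```typescript
// Hypothetical description of one serverless function on the request path.
interface FunctionCall {
  name: string;
  coldStartMs: number; // extra time when the function is cold
  warmMs: number;      // time when the function is already warm
}

// Sequential chain: every cold function adds its full penalty.
function sequentialLatency(calls: FunctionCall[], cold: boolean): number {
  return calls.reduce(
    (sum, c) => sum + c.warmMs + (cold ? c.coldStartMs : 0),
    0
  );
}

// Parallel fan-out: the total is bounded by the slowest single call.
function parallelLatency(calls: FunctionCall[], cold: boolean): number {
  return calls.reduce(
    (max, c) => Math.max(max, c.warmMs + (cold ? c.coldStartMs : 0)),
    0
  );
}

// Illustrative access pattern: a page that touches three separate functions.
const exampleCalls: FunctionCall[] = [
  { name: "page", coldStartMs: 1000, warmMs: 50 },
  { name: "api/graphql", coldStartMs: 1000, warmMs: 100 },
  { name: "api/auth", coldStartMs: 1000, warmMs: 50 },
];

const coldSequentialMs = sequentialLatency(exampleCalls, true);  // 3200
const warmSequentialMs = sequentialLatency(exampleCalls, false); // 200
const coldParallelMs = parallelLatency(exampleCalls, true);      // 1100
```

Under these assumptions, three chained cold functions cost about three times the single-function penalty, which matches the pattern of a ~1 second floor growing to several seconds.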

-

Just wanted to chime in here to confirm that we are also running into this exact same issue with, in fact, the same stack causing this (apollo-server-micro with nexus). As the OP already mentioned, this has nothing to do with the database as we also ruled that out entirely (returning stubbed data performs exactly as poorly as it does with a database connection).

-

We are experiencing similar issues, though cold start times are shorter for us, at around 1.5 s. Something that surprised me was that for Next.js (which is what we use), API endpoints are bundled together up to a size of 50 MB. Therefore, despite having a number of separate API endpoints, they are actually bundled together with a size of ~30 MB. This is to reduce the number of cold starts and keep things warm. However, when there is a cold start (which happens quite frequently, as the API does not experience high traffic), it is long enough to cause issues for our application. I haven't tried creating a small endpoint to keep it warm yet, but will try that next and see what effect it has.

-

Could Edge Functions be a solution to the problem? https://vercel.com/docs/concepts/functions/edge-functions
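For context, a minimal sketch of what an Edge API route can look like in a Next.js `pages/api` directory. The route name and response body are made up for illustration, and the exact `runtime` value has varied across Next.js versions, so check the docs for the version you are on. The Edge runtime only supports a subset of Node.js APIs, so whether a given stack fits there is a separate question.

```typescript
// pages/api/hello.ts (hypothetical route name)

// Opt this route into the Edge runtime instead of the Node.js serverless runtime.
export const config = { runtime: "edge" };

// Edge handlers receive a standard Request and return a standard Response.
export default function handler(req: Request): Response {
  const { searchParams } = new URL(req.url);
  const name = searchParams.get("name") ?? "world";

  return new Response(JSON.stringify({ data: `Hello ${name}` }), {
    status: 200,
    headers: { "content-type": "application/json" },
  });
}
```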

-

@piotrpawlik: I haven't noticed longer cold start times after 12.1.0. However, I am also experiencing accumulating cold start issues with Unfortunately, this is in addition to the cold start time of ~1 sec of the calling lambda function itself (hence accumulating). With some inevitable network latency, the cold start of a revalidate endpoint will take approx. 3 seconds in total. I experimented with warming, but you have to trigger every edge server worldwide, so this is not a practical workaround. I am sad to say it, but cold start issues are the biggest bummer with Vercel/AWS lambda.

-

Currently having this problem.

-

We are experiencing long cold starts for Next.js SSR; the resulting bundles are roughly 260B. From the logs we see an Init Duration of 4-5 s, which is far from an acceptable response time for a dynamic web page. Is it possible to increase the memory size for SSR functions?

-

This has become a completely untenable issue for our application. API endpoints are basically useless on Vercel because of the cold start issue... Why is this not solved?

-

+1 API endpoints take way too long on a cold start. Will have to find a different solution. The lack of response here from the Vercel team is quite sad. I also find it strange that my API function size is 30 MB+ even though I only have a couple of small functions (and @next/bundle-analyzer is reporting them at 200 kB...). I love Vercel but this is super disappointing.

-

Having the same issue with signin/signup API routes. I spent a while adjusting email generation, trialling templating, switching from SMTP to a REST API for mail, etc., but have now discovered that although I see ~8 s for the first test, if I log out and straight back in, the second attempt is much faster (<~1 s?, which is fine for me). So I guess my problem is not my emailing, but the cold start behaviour? (Reading above, it seems like I might significantly reduce the 8 s by flattening API requests down to a single endpoint. I'm not sure that will be ideal for DRY, but it's worth it if it cuts 8 -> 2 seconds, which would be a bit annoying but no longer terrible.) Maybe the edge functions will solve my problem; I will have to try and see if I can rewrite using those (the functions are small, so hopefully they will fit in the 1 MB limit).

-

I wrote up ways to debug and detect your root issue with Serverless Function performance decreases.

Edit: Since this has been posted, Prisma has done significant work to improve cold starts (commonly mentioned here as being used in Vercel Functions). Ensure you are on the latest versions of both Prisma and Next.js. Next.js 14 also had cold start improvements, including up to 80% smaller functions in some instances.

-

Just as a follow-up, we ended up switching to a simple VPS for hosting our Next.js application and its database, away from Vercel (and also away from PlanetScale). I loved the super simple and straightforward integrations and optimizations that Vercel provided, but this issue was just untenable and so we had to switch. The additional bonus is that the VPS hosting is a lot less expensive, so there's that.

-

+1 we also ended up moving away from Vercel and onto DO. The cold startup times were just not acceptable for our application (or any application imo) and we simply do not have time to try and debug a black box. It would be great if Vercel would allow us to see how the serverless functions are bundled so we could try and figure out why the functions are so huge. I did some experimenting and a simple function that just returned a 200 status was 30 MB+ somehow (I even made sure the function was bundled separately via config). I realize this was probably some kind of error or misconfiguration on my end, but there is no way to debug it and fix it. I would easily move back to Vercel if you guys provided a way to either a) have my API routes not as serverless functions at all or b) debug these kinds of issues.

-

I also hit this problem. My reason for using I have chosen not to address the cold start issue itself but I am able to side-step the problem by switching to

Another way to reduce the impact of cold starts would be SWR: using caching and stale data to reduce the time to displaying useful content, with the fresh data loading in automatically when it's ready. This doesn't help if there's nothing in the cache or if stale data has no value, but it's another good option for my use case, where I don't expect the data to change often.
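A rough sketch of the stale-while-revalidate idea as a tiny in-memory cache: a cached value is returned immediately even when it is stale, and a refresh runs in the background. The `SwrCache` class and its parameters are hypothetical and only illustrate the pattern; this is not the API of the `swr` library, and a real implementation would also deduplicate concurrent refreshes.

```typescript
type Fetcher<T> = (key: string) => Promise<T>;

interface CacheEntry<T> {
  value: T;
  fetchedAt: number; // time (ms) at which the fetch was requested
}

class SwrCache<T> {
  private entries = new Map<string, CacheEntry<T>>();
  private fetcher: Fetcher<T>;
  private maxAgeMs: number;

  constructor(fetcher: Fetcher<T>, maxAgeMs: number) {
    this.fetcher = fetcher;
    this.maxAgeMs = maxAgeMs;
  }

  // `now` is injectable so the behaviour can be exercised without real waiting.
  async get(key: string, now: number = Date.now()): Promise<T> {
    const hit = this.entries.get(key);
    if (!hit) {
      // Nothing cached: the caller has to wait for the (possibly cold) backend.
      return this.refresh(key, now);
    }
    if (now - hit.fetchedAt > this.maxAgeMs) {
      // Stale: serve the old value right away and refresh in the background.
      // Refresh errors are swallowed so the stale value keeps being served.
      void this.refresh(key, now).catch(() => undefined);
    }
    return hit.value;
  }

  private async refresh(key: string, now: number): Promise<T> {
    const value = await this.fetcher(key);
    this.entries.set(key, { value, fetchedAt: now });
    return value;
  }
}
```

As the comment above says, this only hides the cold start when there is already something in the cache and stale data is acceptable; the very first request still pays the full cost.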

-

On AWS, we usually just provision a little concurrency to avoid the pure cold starts. Any reason why Vercel couldn't enable provisioned concurrency to help with this issue?

-

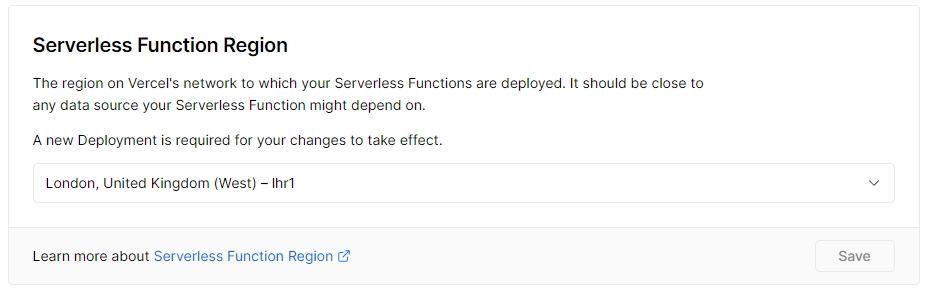

I just wanted to share my experience and research, as I'm confused as to why this problem isn't widespread. I want to start by saying I love the work @leerob and the rest of the Vercel team are doing... But this has caused me some real headaches, and I've sunk a lot of time into trying to find a solution. So if there's any advice which can help alleviate this issue, it would be most welcome!

Account Login

Initial Request 🐌

This is the first interaction a user would have with the application - it's the first API call which is made. As you can see, when the site has been idle the first request takes a fair bit of time.

Subsequent Request 🚀

Perhaps this is a Database / Prisma / tRPC Issue?

Lightweight "health-check" request

health-check.ts

Query "lightweight" API endpoint roughly every 10 mins

Attempted Solutions

Choose the correct region for your functions

I am based in the UK, so I set the Serverless Function Region as follows:

Choose smaller dependencies inside your functions

There are zero dependencies in the "health check" function.

Use proper caching headers

Whilst this may improve the situation for repeat visitors, it doesn't fix the initial "cold start".

Migrate to "Always-on" (Heroku) ✅

This does fix the issue - the response times are 1/10th, but there are tradeoffs as discussed here. I don't want to migrate to Heroku. I like Vercel, but I'm sure you can appreciate this issue makes it untenable.

Open Questions

First Load JS shared by all

-

Are there any guidelines on what bundle size to aim for to get a reasonable cold start time? 330 to 350k seems to consistently result in 6 to 6.5 seconds or so. 😭 Can Vercel do anything here? Is Vercel doing anything? (I've read the guide.) Thanks

-

@leerob I think there are two feature requests needed to alleviate this issue.

This will let all of us stay on Vercel 100% and not have to move to DO, etc., for hosting a non-serverless API

-

Adding my name to the pile of people having problems. I'm experiencing sluggish cold starts for API routes as well. I've set up a repo to experiment, https://github.com/perenstrom/database-timings-test, with two tests. The frontend makes five requests in a row, printing the timings of all of them. Everything is hosted in Frankfurt (API functions, data proxy, database).

Build output

Database example

NextJS frontend -> NextJS API route -> Prisma Data Proxy -> Render Postgres DB

The code looks as follows.

```ts
// pages/api/films
import { NextApiRequest, NextApiResponse } from 'next';
import { prismaContext } from 'lib/prisma';

const films = async (req: NextApiRequest, res: NextApiResponse) => {
  if (req.method === 'GET') {
    // Time only the Prisma call; this is the "database" timing reported below.
    const startTime = performance.now();
    const result = await prismaContext.prisma.film.findMany({});
    const timing = performance.now() - startTime;

    return res.json({ data: result, timing });
  } else {
    res.status(404).end();
  }
};

export default films;
```

The database example shows the following timings:

The "database" timings are the timings as seen in the code above, i.e. the time for the Prisma call, and the "total" timing is measured by the client: the amount of time the call to the API route takes. As you can see, the first request is really slow, and any subsequent calls are down to a reasonable time. Function logs in the Vercel Dashboard show the following time for the first call, which matches the measured times in the frontend.

I did a test without running Prisma Data Proxy, and that was even worse. So the proxy at least did something, but it's clear that cold starts are the main issue.

Simple example

The simple example does what many above have done, just return some JSON from the API route:

```ts
import { NextApiRequest, NextApiResponse } from 'next';

const metrics = async (req: NextApiRequest, res: NextApiResponse) => {
  if (req.method === 'GET') {
    // The explicit Promise is not strictly needed; the response is sent synchronously.
    return new Promise((resolve) => {
      res.status(200).json({ data: 'Hello world' });
      resolve('');
    });
  } else {
    res.status(404).end();
  }
};

export default metrics;
```

This gives the following timings. Same as many before me, the cold start for this simple, simple function is still over 3 seconds.

Any and all help is appreciated. If this is not solvable, I guess I have to do the same as people above and pay for a server somewhere (I REALLY don't want to migrate away from Vercel; I do despise devops).
