kubeadm init does not configure RBAC for configmaps correctly #907

Comments

|

@chuckha If I remember well |

|

@chuckha I completed the join successfully on a cluster with all the components built from master, with the release number forced to v1.11.0

So IMO:

The part still to investigate is

|

|

Yes, this must be a version issue. I didn't set the version when I built so the binaries all think they are 1.12.0+ but I installed and forced kubeadm to use v1.11. This resulted in and then when joining: I will rebuild the binaries and force them to the correct version and try again. |

|

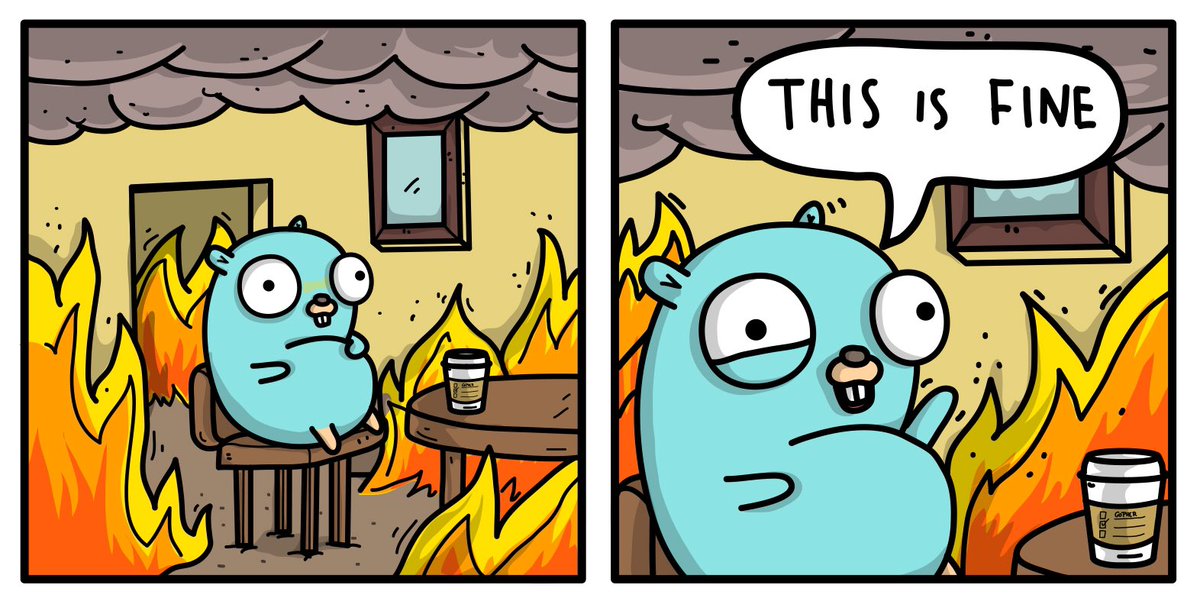

Everything is fine when the versions of kubelet and kubeadm match. Closing in favor of a less urgent fix for the inconsistency (maybe intentional) between how the config map is written and how it is fetched. |

|

I'm not using kubeadm init, but calling the phases individually. There is no configmap in kube-system nor permissions to properly set it up. What phase is this? |

|

@drewwells I have encountered the same problem as you did. I am running the phases individually, and there are no config maps. Have you found the solution? I must also mention that all components are 1.11.4. This goes further. I bootstrapped a cluster with kubeadm init, and I do have the correct config map in place now: On the node: Notice the weird stuff? It's trying to fetch the config map for version 1.12. Apparently, that all makes sense if you check the version of the kubelet! I had kubelet version 1.12.2. |

|

I'm experiencing the same problem, except one version newer. On the master: What, where did that server version 1.13.0 come from? I didn't install that. Anyway, before I ran kubeadm init on this VM, I cloned it, so I have another one ready to be another node in the cluster. Because it's a clone, it also has 1.12.1. When I try to join it: And why can't it get the kubelet-config-1.12 configmap? 'Cause there isn't one. Back on the master: |

|

@brianriceca: I'm facing exactly the same issue as you. Is there any solution for this?

Master:

ram@k8master1:

ram@k8master1:

Worker Nodes:

root@k8worker1:~# kubeadm join 10.0.0.61:6443 --token xjxgqa.h2vnld3x9ztgf3pr --discovery-token-ca-cert-hash sha256:7c18b654b623ee84164bb0dfa79409c821398f1a968843446af525ec72e0fdad

[discovery] Trying to connect to API Server "10.0.0.61:6443"

[preflight] Some fatal errors occurred: |

|

Same as @brianriceca and @ramanjk. Versions of kubeadm and kubelet are 1.12.1 on both nodes, the |

|

D'oh! What I didn't yet know is that kubeadm always downloads the latest version of the Kubernetes control plane from gcr.io unless you tell it otherwise. So if I want to install 1.12.1 even when 1.13 is available, I need to do |

|

I deleted both nodes and tried again using version |

|

I am suffering the same bug, while (apparently) using the same version in the seeder as in the node. On the seeder (after initialization): On the node: For some reason it seems it is looking for After checking the Is the configmap name derived from this? |

|

@inercia the configmap name is derived from the kubelet version. See my link above. |

|

Thanks for the clarification @oz123. I was wondering what would happen on updates. For example,

|

|

I'm seeing this issue installing k8s 1.13.1 with a matching kubeadm version, but isolated to kube-proxy: If I create a workaround RoleBinding manually, I'm able to join nodes. Is a EDIT: Also found that running |

|

This worked for me: Definitely check your versions of kubeadm and kubelet, and make sure the same version of these packages is used across all of your nodes. Prior to installing, you should "mark and hold" your versions of these on your hosts.

Check your current version of each:

Check kubeadm:

If they're different, you've got problems. You should reinstall the same version on all your nodes and allow downgrades. My versions in the command below are probably older than what is currently out; you can replace the version number with something more up to date, but this will work:

Then, once they're installed, mark and hold them so they can't be upgraded automatically and break your system. |
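The version check described in the comment above can be sketched in a few lines of Python. This is only an illustration: the node names and version strings are hypothetical sample data (the 1.11.4 / 1.12.2 pair echoes an earlier comment), and `distinct_versions` and `versions_consistent` are made-up helper names, not part of kubeadm.

```python
def distinct_versions(nodes):
    """Collect every distinct package version reported across all nodes.

    `nodes` maps a node name to a {package: version} dict. A leading "v"
    is stripped so that "v1.11.4" and "1.11.4" compare as equal.
    """
    versions = set()
    for packages in nodes.values():
        for version in packages.values():
            versions.add(version.strip().lstrip("v"))
    return versions


def versions_consistent(nodes):
    """Return True when kubeadm and kubelet agree on every node."""
    return len(distinct_versions(nodes)) <= 1


# Hypothetical inventory: the worker's kubelet is newer than everything else.
cluster = {
    "master": {"kubeadm": "v1.11.4", "kubelet": "1.11.4"},
    "worker": {"kubeadm": "1.11.4", "kubelet": "1.12.2"},
}

if not versions_consistent(cluster):
    print("version mismatch:", sorted(distinct_versions(cluster)))
```

In practice the inventory would be filled from whatever each host reports for its installed packages; the sketch only covers the comparison step.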

Is this a BUG REPORT or FEATURE REQUEST?

BUG REPORT

Versions

kubeadm version (use `kubeadm version`): "v1.12.0-alpha.0.957+1235adac3802fd-dirty"

What happened?

I created a control plane node with `kubeadm init`. I ran `kubeadm join` on a separate node and got this error message:

What you expected to happen?

I expected `kubeadm join` to successfully finish

How to reproduce it (as minimally and precisely as possible)?

As far as I can tell, run `kubeadm init` and `kubeadm join` on another node. I have a lot of extra code/yaml that shouldn't be influencing the config map (required for happy AWS deployment). But if this turns out to not be reproducible I will provide more in-depth instructions.

Anything else we need to know?

I think also `kubeadm join` and `kubeadm init` are naming the config maps inconsistently. The `init` command uses the `kubernetesVersion` specified in the config file and the `join` command uses the kubelet version for the name of the config map (e.g. kubelet-config-1.1). This is fine unless you have mismatched versions; then it is not fine.

The `init` command makes RBAC rules for anonymous access to config maps in the `kube-public` namespace but it doesn't seem to put the kubelet config in the public namespace and thus the node joining has no access to it.
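To make the naming mismatch concrete, here is a minimal Python sketch. It assumes the config map is named `kubelet-config-<major>.<minor>`, which is inferred from names quoted in this thread (such as kubelet-config-1.12) rather than taken from the kubeadm source. `config_map_name` and `join_would_find_config` are hypothetical helper names.

```python
import re


def config_map_name(version):
    """Build the assumed config map name from a version like 'v1.12.1'.

    Only the major and minor components are used; patch level and any
    pre-release or build suffix are ignored.
    """
    match = re.match(r"^v?(\d+)\.(\d+)", version.strip())
    if match is None:
        raise ValueError(f"unrecognized version string: {version!r}")
    major, minor = match.groups()
    return f"kubelet-config-{major}.{minor}"


def join_would_find_config(kubernetes_version, kubelet_version):
    """Compare the name init would write with the name join would look up.

    Assumption from the report above: init names the config map after
    kubernetesVersion, while join derives the name from the kubelet version.
    """
    written = config_map_name(kubernetes_version)
    wanted = config_map_name(kubelet_version)
    return written == wanted


# The reporter's situation: control plane forced to v1.11, kubelet built from master.
print(join_would_find_config("v1.11.0", "v1.12.0-alpha.0.957+1235adac3802fd-dirty"))
```

With matching minor versions (for example v1.11.0 and v1.11.4) the two names coincide, which is consistent with the later comments that the join succeeds once kubeadm and kubelet versions match.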