
Before the DAT we should build a recent version of Flux for this system (not sure if we should install it into a shared location as well), choose the versions of MPI we're going to test, and make sure it bootstraps without issues on the target system under Slurm. Would it also make sense to measure the time it takes Flux to bootstrap a new instance, in addition to the MPI launch timing?
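To collect bootstrap numbers alongside the MPI launch timing, one option is to time an instance that starts, runs a trivial initial program, and exits. This is a minimal sketch, assuming it runs inside a Slurm allocation with `flux` in `PATH`. `time_ms`, `bootstrap_scan`, and the script name `bootstrap-time.sh` are hypothetical. The measured time also includes srun's own launch overhead and instance teardown, not just broker wireup.

```bash
#!/bin/bash
# Hypothetical sketch (bootstrap-time.sh): time Flux instance bootstrap under
# Slurm at power-of-two node counts. Assumes a Slurm allocation and `flux` in
# PATH. The times include srun launch and instance teardown, not just wireup.

# Print the wall-clock time of a command in milliseconds (GNU date %N) and
# return the command's exit status.
time_ms()
{
    local start end rc
    start=$(date +%s%N)
    "$@" >/dev/null 2>&1
    rc=$?
    end=$(date +%s%N)
    echo $(( (end - start) / 1000000 ))
    return "$rc"
}

# Start an instance whose initial program is `true`, so it exits right away.
# Node counts double from 1 up to (at most) $1.
bootstrap_scan()
{
    local max=$1 n=1 ms
    printf '%6s %10s\n' NODES MS
    while (( n <= max )); do
        if ms=$(time_ms srun -N"$n" flux start true); then
            printf '%6d %10d\n' "$n" "$ms"
        else
            printf '%6d %10s\n' "$n" FAILED
        fi
        n=$((n * 2))
    done
}

# Only launch jobs when a maximum node count is given, e.g. ./bootstrap-time.sh 64
if (( $# > 0 )); then
    bootstrap_scan "$1"
fi
```

For example, `./bootstrap-time.sh 64` inside a 64-node allocation would time instances of 1, 2, 4, ..., 64 nodes. Timing a plain `srun -N"$n" true` at the same sizes gives a rough baseline to subtract, leaving an estimate of the Flux-specific cost.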


Here's a script to drive the MPI scale testing. In this DAT we can basically run it inside a Flux instance launched as a Slurm job:

```bash
#!/bin/bash

NNODES=$(flux resource list -no {nnodes})
NCORES=$(flux resource list -no {ncores})
CPN=$((NCORES / NNODES))   # cores (max tasks) per node

printf "MPI scale testing on %s\n" "${LCSCHEDCLUSTER}"
printf "TIME: %s\n" "$(date -Is)"
printf "INFO: %s\n" "$(flux resource info)"
printf "FANOUT: %s\n" "$(flux getattr tbon.fanout)"
printf "\n"
printf " NODES NTASKS INIT BARRIER FINALIZE TOTAL\n"

# Print powers of two starting at $1 while they are less than $2, then $2
# itself, e.g. "seq2 1 8" prints 1 2 4 8 and "seq2 1 6" prints 1 2 4 6.
seq2()
{
    local start=$1
    local end=$2

    while [[ $start -lt $end ]]; do
        printf '%d\n' "$start"
        ((start*=2))
    done
    printf '%d\n' "$end"
}

# Submit one exclusive job per (nodes, tasks-per-node) combination.
# --watch streams each job's output (here, one timing line per job).
flux mini bulksubmit --watch --progress --quiet --nodes={0} --tasks-per-node={1} \
    --exclusive \
    --env=FLUX_MPI_TEST_TIMING=t \
    ./t/mpi/hello \
    ::: $(seq2 1 ${NNODES}) \
    ::: $(seq2 1 ${CPN})

# vi: ts=4 sw=4 expandtab
```

Let me know if powers-of-2 scaling isn't the right approach. Here's an example run on 8 nodes of mammoth:

```
$ srun --pty -N8 ./src/cmd/flux start -o-Stbon.fanout=0 ./mpi-scale.sh
```

```
MPI scale testing on mammoth
TIME: 2022-10-19T10:38:20-0700
INFO: 8 Nodes, 1024 Cores, 0 GPUs
FANOUT: 0

 NODES NTASKS INIT BARRIER FINALIZE TOTAL
1 1 0.120408295 0.000017530 0.026350396 0.146776392
1 2 0.247044578 0.000021280 0.027165694 0.274231694
1 8 0.255578820 0.003441866 0.022947635 0.281968421
1 16 0.300907664 0.006436076 0.023918238 0.331262128
1 32 0.353205447 0.015671256 0.051539656 0.420416549
1 4 0.515359992 0.000083761 0.016126541 0.531570464
2 2 0.124149830 0.005388638 0.177004715 0.306543333
1 64 0.342178547 0.034258889 0.114106157 0.490543783
2 4 0.152538355 0.002849584 0.184196495 0.339584594
2 8 0.145661494 0.001002305 0.173005612 0.319669571
1 128 0.695548800 0.072997695 0.201960088 0.970506764
2 16 0.161562866 0.000070312 0.177473684 0.339107022
2 32 0.210315840 0.000649573 0.202386340 0.413351863
2 64 0.303383488 0.005213797 0.229790180 0.538387616
2 128 0.515357182 0.026913300 0.272929648 0.815200291
4 4 0.148437575 0.000023210 0.190720598 0.339181533
4 8 0.154291603 0.001456992 0.181385187 0.337133942
2 256 1.347883719 0.025394977 0.368588671 1.741867507
4 16 0.157359998 0.000026741 0.173216745 0.330603614
4 32 0.180073968 0.002974244 0.191913165 0.374961557
4 64 0.228568515 0.013123602 0.211228428 0.452920705
4 128 0.329307955 0.018139371 0.239887321 0.587334807
4 256 0.865110944 0.022677813 0.275205535 1.162994442
4 512 1.639541113 0.128465618 0.397119047 2.165125948
8 8 0.168247919 0.004781156 0.180335400 0.353364655
8 16 0.154139966 0.004597001 0.177126766 0.335863874
8 32 0.160068415 0.007155382 0.181946403 0.349170350
8 64 0.186454171 0.022474939 0.184045723 0.392974994
8 128 0.224943759 0.047555480 0.245997619 0.518497009
8 256 1.649888681 0.094998605 0.244259213 1.989146669
8 512 0.868126479 0.105073766 0.288091267 1.261291642
8 1024 2.798268776 0.089336412 0.436704179 3.324309537
PD:0 R:0 CD:32 F:0 │██████████████████████████████████████████│100.0% 0:00:22
```
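The 32 completed jobs (`CD:32`) come from `bulksubmit` combining the two `:::` lists: 4 node counts times 8 tasks-per-node values. This sketch enumerates that job matrix without submitting anything. It assumes the full Cartesian product of the inputs, which the 32 rows above are consistent with. `seq2` is copied from the script so the sketch runs on its own, and `job_matrix` is a hypothetical helper.

```bash
#!/bin/bash
# Sketch: list the (NODES, NTASKS) pairs the scale script submits, assuming
# bulksubmit takes the Cartesian product of its ':::' input lists.

# Copied from the scale script: powers of two from $1 while below $2, then $2.
seq2()
{
    local start=$1
    local end=$2

    while [[ $start -lt $end ]]; do
        printf '%d\n' "$start"
        ((start*=2))
    done
    printf '%d\n' "$end"
}

# Hypothetical helper: one line per job, "NODES NTASKS", where
# NTASKS = nodes * tasks-per-node (the value shown in the NTASKS column).
job_matrix()
{
    local nnodes=$1 cpn=$2 n t
    for n in $(seq2 1 "$nnodes"); do
        for t in $(seq2 1 "$cpn"); do
            printf '%d %d\n' "$n" "$((n * t))"
        done
    done
}

# The mammoth run above: 8 nodes, 1024 cores, so 128 cores per node.
job_matrix 8 128
```

`job_matrix 8 128` prints the same 32 (NODES, NTASKS) pairs as the rows above, but in submission order. `bulksubmit --watch` prints results in completion order, so piping saved output through `sort -n -k1,1 -k2,2` would put it in this order.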


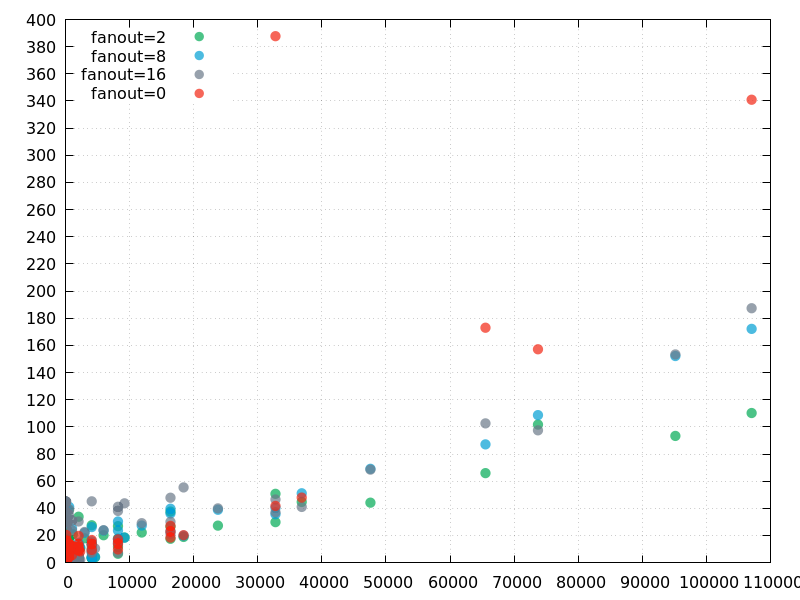

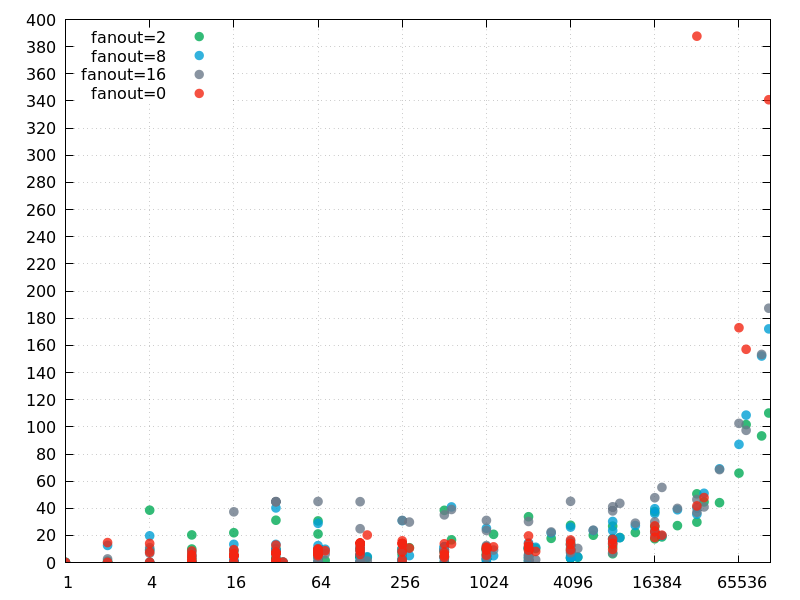

We got through most of the MPI scale testing with parent-instance fanouts of 2 (the current default), 8, 16, and 0 (all ranks are children of rank 0). We didn't have time to get through all of the fanout=0 tests. We were able to get data from one run for each fanout value at the maximum number of tasks: 107064 tasks across 2974 nodes. It would be nice to have another chance to run the fanout=0 case just to verify that the above is not an outlier. However, looking at the plots of the raw data (for
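For reference, the maximum-task case works out to 36 tasks per node. That would be one task per core if quartz nodes have 36 cores, which is an assumption here and is not stated in this thread:

```math
\frac{107064\ \text{tasks}}{2974\ \text{nodes}} = 36\ \text{tasks per node}
```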


We have a Flux scaling test opportunity scheduled for Wed Oct 19 on quartz (~2970 nodes). Let's discuss the plan and the results here, and we can spin off issues for any weak spots we discover.

Quartz is running TOSS3 (EL7-based). We don't support `flux-security` on this OS, so we can't test a multi-user system instance. However, we should be able to launch a large Flux instance as a Slurm job and put it through its paces.

Ideas:

- Try different `tbon.fanout` values in the enclosing instance (64, 128, 256)

Other thoughts?
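One way to compare fanout values is the depth of the resulting tree-based overlay network (TBON), which is the number of hops a message takes between rank 0 and the farthest broker. This is a minimal sketch, assuming one broker per node and a k-ary tree in which rank r's parent is (r - 1) / k, with fanout 0 meaning a flat tree where every rank is a child of rank 0. `tbon_depth` is a hypothetical helper, not part of Flux.

```bash
#!/bin/bash
# Sketch: TBON depth for a given instance size and tbon.fanout.
# ASSUMES a k-ary tree where rank r's parent is (r - 1) / k, and that
# fanout=0 means a flat tree (all ranks are children of rank 0).

tbon_depth()
{
    local size=$1 k=$2
    local rank=$((size - 1)) depth=0

    if (( size <= 1 )); then
        echo 0          # a single broker has no children
        return
    fi
    if (( k == 0 )); then
        echo 1          # flat: every rank is one hop from rank 0
        return
    fi
    # In breadth-first numbering the highest rank is a deepest leaf,
    # so walk from it up to rank 0, counting hops.
    while (( rank > 0 )); do
        rank=$(( (rank - 1) / k ))
        depth=$((depth + 1))
    done
    echo "$depth"
}

# Instance size from the quartz runs (2974 nodes, one broker per node).
for fanout in 0 2 8 16 64 128 256; do
    printf 'fanout=%-3d depth=%d\n' "$fanout" "$(tbon_depth 2974 "$fanout")"
done
```

Under these assumptions, 2974 ranks give a depth of 11 at fanout 2, 4 at fanout 8, 3 at fanout 16, 2 at each of 64, 128, and 256, and 1 in the flat fanout=0 case. The larger fanouts therefore trade only one fewer level than fanout 16 for many more children per parent.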
