
Using aiolimiter to rate limit requests does not work with httpx #1933

Replies: 3 comments · 6 replies


Hi! I'm the author of aiolimiter; I found this discussion by chance.

This is a very curious case, and I don't think httpx is to blame here. I think something else is wrong somewhere in your stack, as using a bounded semaphore makes no difference to the way httpx is called. At best it introduces a few microseconds of delay to the first call to […]

I've adapted your script to illustrate that there is no real difference:

```python
import asyncio
from time import monotonic
from typing import AsyncContextManager

from aiolimiter import AsyncLimiter
from aiohttp import ClientSession

# Reference point for the time deltas printed by each task.
ref = monotonic()


class anullcontext:
    """Async no-op context manager, used in place of the semaphore."""

    async def __aenter__(self):
        return None

    async def __aexit__(self, *excinfo):
        return None


async def task(x: int, limiter: AsyncLimiter, sema: AsyncContextManager):
    async with sema:
        async with limiter:
            # This is where the httpx or aiohttp call would be made.
            print(f"{monotonic() - ref:.5f}: request")


async def main(num_requests: int, use_sema: bool, rate: float = 20, period: float = 1):
    limiter = AsyncLimiter(rate, period)
    sema = asyncio.BoundedSemaphore(100) if use_sema else anullcontext()
    tasks = [
        asyncio.create_task(task(x, limiter, sema))
        for x in range(num_requests)
    ]
    await asyncio.gather(*tasks, return_exceptions=True)


if __name__ == "__main__":
    import argparse

    parser = argparse.ArgumentParser()
    parser.add_argument('-s', dest='use_sema', action='store_true', help='Use a bounded semaphore')
    parser.add_argument(
        '-r', dest='rate', type=float, default=20, help='Maximum number of requests in a time period'
    )
    parser.add_argument(
        '-p', dest='period', type=float, default=1, help='Rate limit time period'
    )
    parser.add_argument('num_requests', nargs='?', default=50, type=int)
    args = parser.parse_args()
    asyncio.run(main(args.num_requests, args.use_sema, args.rate, args.period))
```

This just prints out the time delta for when each call to httpx or aiohttp would have been made, and I made the rate limiter parameters and the bounded semaphore toggle accessible from the command line. I made the bounded semaphore limit a multiple of the rate limit, but any value equal to or greater than the bucket capacity would work.

Running this script with a more limited rate and request count shows there is no real difference with or without a bounded semaphore. First without the semaphore, `./demo.py -r 5 10`:

```
0.000165: request
0.000237: request
0.000254: request
0.000268: request
0.000280: request
0.201349: request
0.405060: request
0.606129: request
0.806744: request
1.009209: request
```

With the semaphore, `./demo.py -r 5 10 -s`:

```
0.000239: request
0.000323: request
0.000343: request
0.000359: request
0.000374: request
0.201835: request
0.403348: request
0.607522: request
0.809956: request
1.014539: request
```

The timing differences come down to normal fluctuations in execution times on a multitasking system; there is never more than a few microseconds of difference between the two scenarios, and it can go either way. Running this with larger numbers makes no difference either.
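The pattern in the output above (five requests at once, then one every 0.2 seconds) is what an idealized leaky bucket would produce. As a rough sketch, and not aiolimiter's actual implementation, the expected start times can be computed like this. The function name `ideal_release_times` is made up for illustration, and the model assumes the bucket starts empty, holds `rate` requests, and drains at `rate / period` requests per second, with no scheduling overhead:

```python
def ideal_release_times(num_requests: int, rate: float, period: float = 1.0) -> list[float]:
    """Idealized earliest start time (in seconds) of each request.

    Assumption: a leaky bucket that starts empty, holds `rate` requests and
    drains at `rate / period` requests per second. Overhead is ignored.
    """
    burst = int(rate)  # requests that fit into the empty bucket immediately
    times = []
    for i in range(num_requests):
        if i < burst:
            times.append(0.0)
        else:
            # Each further request waits for one more slot to drain.
            times.append((i - burst + 1) * period / rate)
    return times


if __name__ == "__main__":
    # Same parameters as `./demo.py -r 5 10`.
    for t in ideal_release_times(10, rate=5):
        print(f"{t:.6f}: request")
```

Comparing measured timestamps against this schedule is one way to tell normal jitter apart from a real slowdown.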


First of all: I can't reproduce this issue on my MacBook Pro (16" 2019 model, Intel processor) running macOS 11.6, using Python 3.9 and the version ranges you specified (sanic 21.9.3, httpx 0.20.0). There is no difference in performance with or without a bounded semaphore. So this is something unique to your OS, which unfortunately you didn't specify.

That's not correct; the bounded semaphore has no influence on the number of parallel requests. The number of parallel requests is entirely managed by the limiter, in that the […]

And because the semaphore has a higher limit than the total number of tasks running, it doesn't affect anything that could directly affect […]

I note that the semaphore implementation here is essentially a no-op, as the […]

With everything else being equal, running […]

Without […]
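The point about the semaphore limit versus the number of running tasks can be checked in isolation with the standard library alone. This is only a sketch: the helper `count_blocked_acquires` is a hypothetical name, and the short sleep stands in for the rate-limited request. It counts how many tasks find the semaphore exhausted when they first reach it:

```python
import asyncio


async def count_blocked_acquires(num_tasks: int, sema_limit: int) -> int:
    """Return how many tasks found the semaphore exhausted on arrival."""
    sema = asyncio.BoundedSemaphore(sema_limit)
    blocked = 0

    async def worker() -> None:
        nonlocal blocked
        if sema.locked():  # True only when no permits are left
            blocked += 1
        async with sema:
            await asyncio.sleep(0.01)  # stand-in for the rate-limited request

    await asyncio.gather(*(worker() for _ in range(num_tasks)))
    return blocked


if __name__ == "__main__":
    # Limit above the task count: the semaphore never makes a task wait.
    print(asyncio.run(count_blocked_acquires(50, 100)))
    # Limit below the task count: some tasks do have to wait.
    print(asyncio.run(count_blocked_acquires(500, 100)))
```

With the limit above the task count the semaphore behaves like a no-op; once the request count exceeds the limit, it starts capping how many tasks are inside the block at the same time.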


Hi, thanks for your detailed explanation.

Here are two videos (uploaded to YouTube) showing how it looks on my machine: […]

I am running Ubuntu 20.04 with kernel 5.13.0-30-generic on a Lenovo T480s.

I also created an example repository: https://github.com/nebularazer/httpx-aiolimiter

Some more context on how I ran into this problem: while testing on our development Google domain, with far fewer users and groups (~500), everything was fine.

My understanding was: […]

But this is obviously not the case, as I understand now and as you explained in great detail. Thank you for the time and effort you put into this. 👍


@tempelkim also ran the example and observed behavior similar to that shown in the videos. He is on a MacBook Pro (15-inch, 2016), 2.6 GHz quad-core Intel Core i7, Big Sur (11.6.3), with 16 GB RAM.


The videos are helpful in that they show the exact command-line options you use. Without that detail I was assuming you were not setting a higher request count. When setting the request count high I do see the slowdown, so using […]

When entering the context of a limiter, the limiter will: […]

When you add a bounded semaphore, what happens is that the number of concurrent futures blocked on […]

First of all, I've updated the demo script to: […]

This will let us see a bit more of what is going on, especially with what asyncio and httpx are busy with.

Updated script:

```python
import asyncio
import logging
import math
from typing import AsyncContextManager, Union

from aiolimiter import AsyncLimiter
from aiohttp import ClientSession
from httpx import AsyncClient

logger = logging.getLogger("main")


class TaskNameFilter(logging.Filter):
    """Attach the name of the current asyncio task to each log record."""

    def filter(self, record):
        try:
            task = asyncio.current_task()
        except RuntimeError:
            # No running event loop, e.g. when logging before asyncio.run().
            task = None
        record.taskname = task.get_name() if task is not None else "~"
        return True


class anullcontext:
    """Async no-op context manager, used in place of the semaphore."""

    async def __aenter__(self):
        return None

    async def __aexit__(self, *excinfo):
        return None


async def task(limiter: AsyncLimiter, sema: AsyncContextManager, client: AsyncClient):
    async with sema:
        logger.debug(">> sema")
        async with limiter:
            logger.debug(">> limiter")
            await client.get("http://localhost:8000")
            logger.info("request made")
        logger.debug("<< limiter")
    logger.debug("<< sema")


async def main(
    num_requests: int,
    use_sema: bool,
    rate: float = 20,
    period: float = 1,
    client_factory: type[Union[AsyncClient, ClientSession]] = AsyncClient,
):
    limiter = AsyncLimiter(rate, period)
    sema = asyncio.BoundedSemaphore(100) if use_sema else anullcontext()
    client = client_factory()
    # Zero-pad task names so they line up in the log output.
    width = 1 + int(math.log10(num_requests))
    tasks = [
        asyncio.create_task(task(limiter, sema, client), name=f"#r{x:0{width}d}")
        for x in range(num_requests)
    ]
    await asyncio.gather(*tasks, return_exceptions=True)


if __name__ == "__main__":
    import argparse

    parser = argparse.ArgumentParser()
    parser.add_argument(
        "-s", dest="use_sema", action="store_true", help="Use a bounded semaphore"
    )
    parser.add_argument("-u", dest="use_uvloop", action="store_true", help="Use uvloop")
    parser.add_argument(
        "-a",
        dest="use_aiohttp",
        action="store_true",
        help="Use aiohttp instead of httpx",
    )
    parser.add_argument(
        "-d",
        dest="debug",
        action="store_true",
        help="Enable asyncio logging and debugging",
    )
    parser.add_argument(
        "-r",
        dest="rate",
        type=float,
        default=20,
        help="Maximum number of requests in a time period",
    )
    parser.add_argument(
        "-p", dest="period", type=float, default=1, help="Rate limit time period"
    )
    parser.add_argument("num_requests", nargs="?", default=50, type=int)
    args = parser.parse_args()

    factory = ClientSession if args.use_aiohttp else AsyncClient
    if args.use_uvloop:
        import uvloop

        asyncio.set_event_loop_policy(uvloop.EventLoopPolicy())

    logging.basicConfig(
        level=logging.DEBUG if args.debug else logging.INFO,
        format="(%(relativeCreated)06d %(taskname)s) %(levelname)s:%(name)s:%(message)s",
    )
    # The format string needs %(taskname)s on every record, so install the
    # filter on all root handlers.
    filter = TaskNameFilter()
    for handler in logging.getLogger().handlers:
        handler.addFilter(filter)

    logging.info("Running test with %r", args)
    asyncio.run(
        main(
            args.num_requests,
            args.use_sema,
            args.rate,
            args.period,
            client_factory=factory,
        ),
        debug=args.debug,
    )
```

Using the […]

- Problematic run, no semaphore: `demo.py -d 500`
- With semaphore: `demo.py -d 500 -s`
- Using aiohttp, no semaphore: `demo.py -d 500 -a`
- Using aiohttp and semaphore: `demo.py -d 500 -sa`

The logs for runs without a bounded semaphore start with 500 '>> sema' lines, where the null context is entered directly. With the […] For each of these lines that is not followed immediately by the […]

The first thing that strikes me is that there are a lot of […] That is not the root cause, however, but it does show that the whole task call stack up to that point has already been delayed, before any network calls are made.

So, I looked more into […] I note that aiohttp uses the […]

On the whole, I hit a dead end here, as the script leaves the httpx timeout at the default of 5 seconds, so the shorter callback times set by the limiter should not be interfering with the ones set up from the httpcore codebase.

So, all I can do at the moment is confirm there is a performance issue with HTTPX when used in combination with […] I'll continue to dig and see if this can be mitigated in aiolimiter.


Hi, thank you for going above and beyond with this investigation ⭐ 👍 If anyone else wants to have a look, they can just clone this repository; I updated it with your latest changes.


@nebularazer is this still relevant? I believe I have found the issue, and I've just released a rate limiting library that supposedly doesn't suffer from it. Would you mind testing with my new library as well? The API is almost the same. In local testing it completely fixes the issue.


Is this fixed?


Hi,

I have a project where I make ~13000 requests to an API which is rate limited (1200 requests per minute).

I am using aiolimiter to limit the requests.

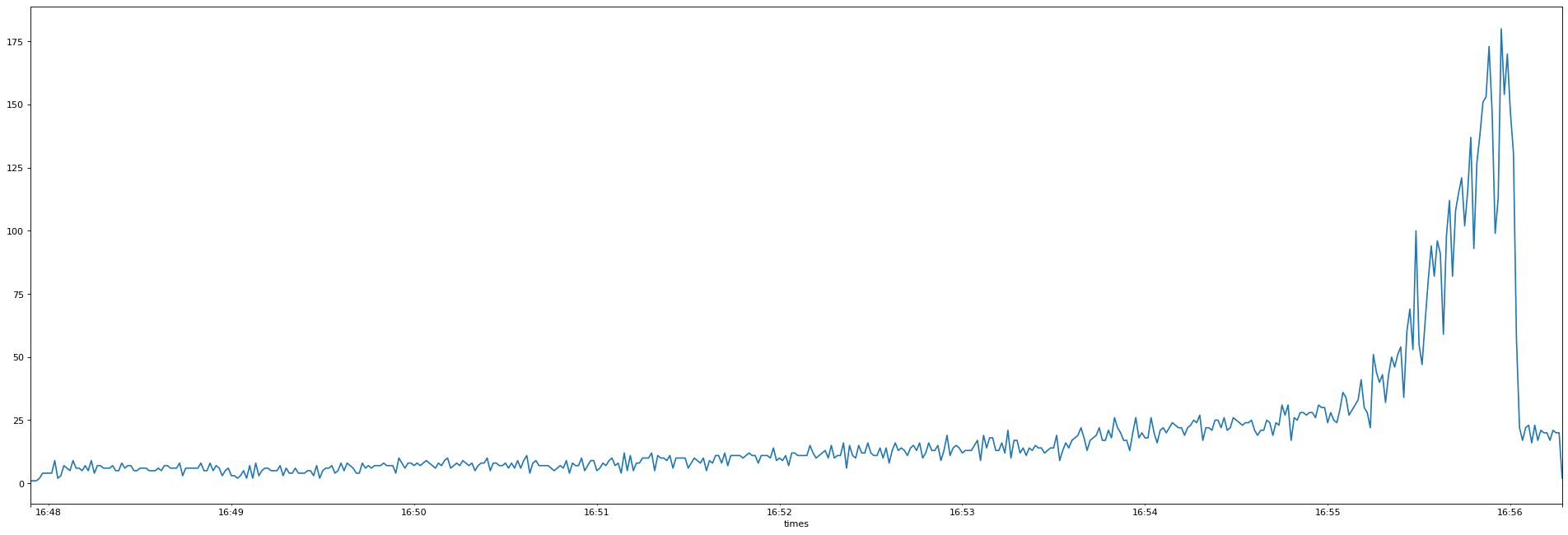

I noticed that httpx starts very slow (< 5 rps), then near the end shoots up over the limit (20 rps), and has a tiny section at the end where it actually does 20 rps. See the graph below:

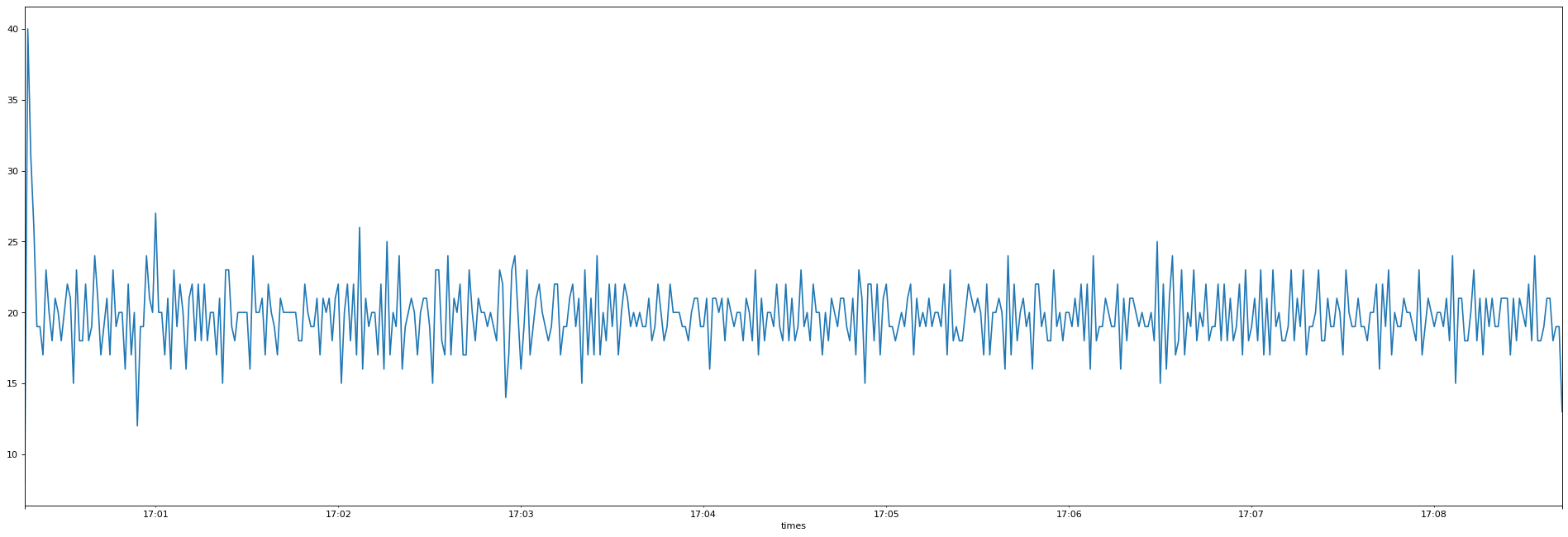
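For anyone who wants to reproduce a requests-per-second graph like this from their own run, here is a minimal sketch of one way to bucket recorded request timestamps into whole seconds. It is not the code used to produce the graphs in this post; the function name `requests_per_second` and the sample timestamps are made up for illustration:

```python
from collections import Counter


def requests_per_second(timestamps: list[float]) -> dict[int, int]:
    """Count requests in each whole second, relative to the earliest timestamp."""
    if not timestamps:
        return {}
    start = min(timestamps)
    counts = Counter(int(t - start) for t in timestamps)
    # Include seconds with no requests so that stalls show up as zeros.
    return {sec: counts.get(sec, 0) for sec in range(max(counts) + 1)}


if __name__ == "__main__":
    sample = [0.0, 0.1, 0.2, 1.5, 3.0, 3.1]  # made-up timestamps for illustration
    for second, count in requests_per_second(sample).items():
        print(f"t={second}s: {count} requests")
```

Recording `time.monotonic()` right after each request completes and passing the collected values to a function like this gives a series that can be plotted against the configured limit.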

When I switch out the client for aiohttp, it works as expected:

I prepared a minimal example to test locally with a sanic server:

And a client with httpx and aiohttp:

As you can see, there is a BoundedSemaphore (`async with sema:`) commented out. If I enable the semaphore in the httpx example, the graph is more similar to the one from aiohttp.

Is this an issue with httpx, or am I doing something wrong here that aiohttp deals with?

Versions used:

Any help is appreciated.
