aequitas is an open-source bias auditing and Fair ML toolkit for data scientists, machine learning researchers, and policymakers. We provide an easy-to-use and transparent tool for auditing the predictions of ML models, as well as for experimenting with correcting biased models using Fair ML methods in binary classification settings.

For more context around dealing with bias and fairness issues in AI/ML systems, take a look at our detailed tutorial and related publications.

Version 1.0.0: Aequitas Flow - Optimizing Fairness in ML Pipelines

Explore Aequitas Flow, our latest update in version 1.0.0, designed to augment bias audits with bias mitigation and to enrich experimentation with Fair ML methods using our new, streamlined capabilities.

    pip install aequitas

or

    pip install git+https://github.com/dssg/aequitas.git

| Notebook | Description |
|---|---|
| Audit a Model's Predictions | Check how to do an in-depth bias audit with the COMPAS example notebook or use your own data. |
| Correct a Model's Predictions | Create a dataframe to audit a specific model, and correct the predictions with group-specific thresholds in the Model correction notebook. |
| Train a Model with Fairness Considerations | Experiment with your own dataset or methods and check the results of a Fair ML experiment. |
| Add your method to Aequitas Flow | Learn how to add your own method to the Aequitas Flow toolkit. |

To perform a bias audit, you need a pandas DataFrame with the following format:

|  | label | score | sens_attr_1 | sens_attr_2 | ... | sens_attr_N |
|---|---|---|---|---|---|---|
| 0 | 0 | 0 | A | F |  | Y |
| 1 | 0 | 1 | C | F |  | N |
| 2 | 1 | 1 | B | T |  | N |
| ... |  |  |  |  |  |  |
| N | 1 | 0 | E | T |  | Y |

where label is the target variable for your prediction task and score is the model output.

Only one sensitive attribute is required; all must be in Categorical format.
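As a minimal sketch of the format above, the following builds a small DataFrame with pandas and casts the sensitive attribute columns to the Categorical dtype. The values are made-up toy data; only the column names follow the table.

```python
import pandas as pd

# Hypothetical toy data; the column names follow the format described above.
df = pd.DataFrame(
    {
        "label": [0, 0, 1, 1, 0, 1],
        "score": [0, 1, 1, 0, 0, 1],
        "sens_attr_1": ["A", "C", "B", "E", "A", "B"],
        "sens_attr_2": ["F", "F", "T", "T", "F", "T"],
    }
)

# Cast every sensitive attribute column to the pandas Categorical dtype.
sensitive_columns = ["sens_attr_1", "sens_attr_2"]
for column in sensitive_columns:
    df[column] = df[column].astype("category")

print(df.dtypes)
```

If the sensitive attributes arrive as integers or booleans, the same `astype("category")` cast applies.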

    from aequitas import Audit

    audit = Audit(df)

To obtain a summary of the bias audit, run:

    # Select the fairness metrics of interest for your dataset
    audit.summary_plot(["tpr", "fpr", "pprev"])

We can also observe a single metric and sensitive attribute:

    audit.disparity_plot(attribute="sens_attr_2", metrics=["fpr"])

To perform an experiment, a dataset is required. It must have a label column, a sensitive attribute column, and features.

from aequitas.flow import DefaultExperiment

experiment = DefaultExperiment.from_pandas(dataset, target_feature="label", sensitive_feature="attr", experiment_size="small")

experiment.run()

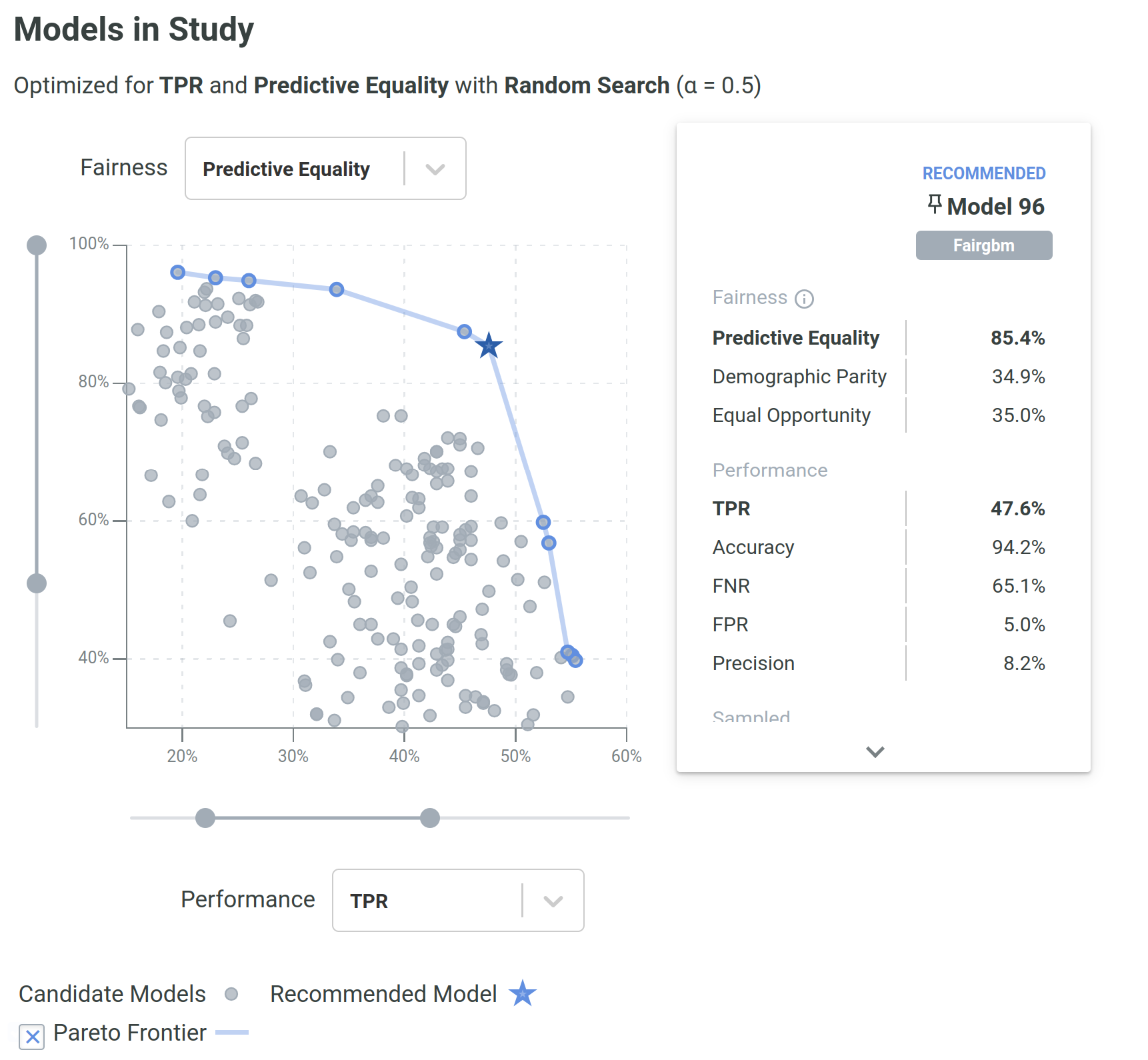

experiment.plot_pareto()The DefaultExperiment class allows for an easier entry-point to experiments in the package. This class has two main parameters to configure the experiment: experiment_size and methods. The former defines the size of the experiment, which can be either test (1 model per method), small (10 models per method), medium (50 models per method), or large (100 models per method). The latter defines the methods to be used in the experiment, which can be either all or a subset, namely preprocessing or inprocessing.

Several aspects of an experiment (e.g., algorithms, number of runs, dataset splitting) can be configured individually in more granular detail in the Experiment class.
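The name plot_pareto suggests a view of the trade-off between predictive performance and fairness across the trained models. As a rough illustration of the Pareto-front idea only (not of the library's internals), the sketch below keeps the models that no other model beats on both axes. The `pareto_front` helper, the metric names, and the numbers are all hypothetical.

```python
def pareto_front(models):
    """Return models not dominated on (performance, fairness); higher is better for both."""
    front = []
    for candidate in models:
        dominated = any(
            other["performance"] >= candidate["performance"]
            and other["fairness"] >= candidate["fairness"]
            and (
                other["performance"] > candidate["performance"]
                or other["fairness"] > candidate["fairness"]
            )
            for other in models
        )
        if not dominated:
            front.append(candidate)
    return front


# Hypothetical experiment results, one entry per trained model.
models = [
    {"name": "m1", "performance": 0.80, "fairness": 0.60},
    {"name": "m2", "performance": 0.75, "fairness": 0.90},
    {"name": "m3", "performance": 0.70, "fairness": 0.85},
    {"name": "m4", "performance": 0.82, "fairness": 0.55},
]

front_names = [model["name"] for model in pareto_front(models)]
print(front_names)
```

Here m3 is dropped because m2 is at least as good on both axes; the remaining models each represent a different trade-off.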

Assuming an aequitas.flow.Dataset, it is possible to train methods and use their functionality depending on the type of algorithm (pre-, in-, or post-processing).

For pre-processing methods:

    from aequitas.flow.methods.preprocessing import PrevalenceSampling

    sampler = PrevalenceSampling()
    sampler.fit(dataset.train.X, dataset.train.y, dataset.train.s)
    X_sample, y_sample, s_sample = sampler.transform(dataset.train.X, dataset.train.y, dataset.train.s)

For in-processing methods:

    from aequitas.flow.methods.inprocessing import FairGBM

    model = FairGBM()
    model.fit(X_sample, y_sample, s_sample)
    scores_val = model.predict_proba(dataset.validation.X, dataset.validation.y, dataset.validation.s)
    scores_test = model.predict_proba(dataset.test.X, dataset.test.y, dataset.test.s)

For post-processing methods:

    from aequitas.flow.methods.postprocessing import BalancedGroupThreshold

    threshold = BalancedGroupThreshold("top_pct", 0.1, "fpr")
    threshold.fit(dataset.validation.X, scores_val, dataset.validation.y, dataset.validation.s)
    corrected_scores = threshold.transform(dataset.test.X, scores_test, dataset.test.s)

With this sequence, we would sample a dataset, train a FairGBM model, and then adjust the scores to have equal FPR per group (achieving Predictive Equality).
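To make the idea of equal FPR per group concrete, here is a minimal pure-Python sketch of group-specific thresholds. It is not the BalancedGroupThreshold implementation (which also enforces a global constraint); the helper names and the toy data are hypothetical, and a score is treated as a positive decision only when it is strictly above its group's threshold.

```python
def threshold_for_fpr(scores, labels, target_fpr):
    """Pick a threshold so that at most target_fpr of the negatives score above it."""
    negatives = sorted((s for s, y in zip(scores, labels) if y == 0), reverse=True)
    allowed = int(target_fpr * len(negatives))  # false positives we may accept
    if allowed >= len(negatives):
        return float("-inf")  # every negative may be flagged
    # Negatives scoring strictly above this value number at most `allowed`.
    return negatives[allowed]


def fit_group_thresholds(scores, labels, groups, target_fpr):
    """Fit one threshold per group on held-out (validation) data."""
    thresholds = {}
    for group in sorted(set(groups)):
        idx = [i for i, g in enumerate(groups) if g == group]
        thresholds[group] = threshold_for_fpr(
            [scores[i] for i in idx], [labels[i] for i in idx], target_fpr
        )
    return thresholds


def apply_group_thresholds(scores, groups, thresholds):
    """Turn scores into 0/1 decisions using each row's group threshold."""
    return [int(s > thresholds[g]) for s, g in zip(scores, groups)]


def false_positive_rate(decisions, labels):
    negatives = [d for d, y in zip(decisions, labels) if y == 0]
    return sum(negatives) / len(negatives)


# Hypothetical validation scores for two groups.
scores = [0.9, 0.8, 0.6, 0.4, 0.3, 0.2, 0.7, 0.5, 0.45, 0.35, 0.1, 0.05]
labels = [1, 0, 1, 0, 0, 0, 1, 0, 0, 1, 0, 0]
groups = ["a"] * 6 + ["b"] * 6

thresholds = fit_group_thresholds(scores, labels, groups, target_fpr=0.25)
decisions = apply_group_thresholds(scores, groups, thresholds)

fpr_by_group = {
    g: false_positive_rate(
        [d for d, grp in zip(decisions, groups) if grp == g],
        [y for y, grp in zip(labels, groups) if grp == g],
    )
    for g in sorted(set(groups))
}
print(thresholds, fpr_by_group)
```

Each group ends up with its own cut-off, so both reach the same false positive rate even though their score distributions differ.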

- Metrics: Audits based on confusion matrix-based metrics, with the flexibility to select the most relevant ones for each use case.

- Plotting options: The main outcomes of bias audits and experiments can also be plotted, with plots suited to different user objectives.

- Fair ML methods: Interface and implementation of several Fair ML methods, including pre-, in-, and post-processing methods.

- Datasets: Two "families" of datasets are included, named BankAccountFraud and FolkTables.

- Extensibility: Adapted to receive user-implemented methods, with intuitive interfaces and method signatures.

- Reproducibility: Option to save artifacts of Experiments, from the transformed data to the fitted models and predictions.

- Modularity: Fair ML Methods and default datasets can be used individually or integrated in an Experiment.

- Hyperparameter optimization: Out-of-the-box integration and abstraction of Optuna's hyperparameter optimization capabilities for experimentation.

We support a range of methods designed to address bias and discrimination in different stages of the ML pipeline.

| Type | Method | Description |
|---|---|---|
| Pre-processing | Data Repairer | Transforms the data distribution so that a given feature distribution is marginally independent of the sensitive attribute, s. |
| Pre-processing | Label Flipping | Flips the labels of a fraction of the training data according to the Fair Ordering-Based Noise Correction method. |
| Pre-processing | Prevalence Sampling | Generates a training sample with controllable balanced prevalence for the groups in the dataset, either by undersampling or oversampling. |
| Pre-processing | Massaging | Flips selected labels to reduce prevalence disparity between groups. |
| Pre-processing | Correlation Suppression | Removes features that are highly correlated with the sensitive attribute. |
| Pre-processing | Feature Importance Suppression | Iteratively removes the most important features with respect to the sensitive attribute. |
| In-processing | FairGBM | Novel method where a boosting trees algorithm (LightGBM) is subject to pre-defined fairness constraints. |
| In-processing | Fairlearn Classifier | Models from the Fairlearn reductions package. Possible parameterization for ExponentiatedGradient and GridSearch methods. |
| Post-processing | Group Threshold | Adjusts the threshold per group to obtain a certain fairness criterion (e.g., all groups with 10% FPR). |
| Post-processing | Balanced Group Threshold | Adjusts the threshold per group to obtain a certain fairness criterion, while satisfying a global constraint (e.g., Demographic Parity with a global FPR of 10%). |
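As a rough illustration of the idea behind prevalence-based sampling, the sketch below undersamples each group until its share of positive labels matches a target prevalence. It is a simplified stand-in, not the package's PrevalenceSampling implementation; the function name, the seed handling, and the toy data are assumptions.

```python
import random


def undersample_to_prevalence(labels, groups, target_prevalence, seed=0):
    """Return row indices so each group has roughly the target share of positives.

    Rows are only dropped, never duplicated. Requires 0 < target_prevalence < 1.
    """
    rng = random.Random(seed)
    kept = []
    for group in sorted(set(groups)):
        pos = [i for i, (y, g) in enumerate(zip(labels, groups)) if g == group and y == 1]
        neg = [i for i, (y, g) in enumerate(zip(labels, groups)) if g == group and y == 0]
        # Positives needed to hit the target if all negatives are kept.
        need_pos = round(target_prevalence * len(neg) / (1 - target_prevalence))
        if need_pos <= len(pos):
            pos = rng.sample(pos, need_pos)
        else:
            # Too few positives: keep them all and drop negatives instead.
            need_neg = round(len(pos) * (1 - target_prevalence) / target_prevalence)
            neg = rng.sample(neg, min(need_neg, len(neg)))
        kept.extend(pos + neg)
    return sorted(kept)


def prevalence(labels, groups, indices, group):
    selected = [labels[i] for i in indices if groups[i] == group]
    return sum(selected) / len(selected)


# Hypothetical data: group "a" has prevalence 0.5, group "b" has 0.25.
labels = [1] * 6 + [0] * 6 + [1] * 2 + [0] * 6
groups = ["a"] * 12 + ["b"] * 8

kept = undersample_to_prevalence(labels, groups, target_prevalence=0.25)
prev_a = prevalence(labels, groups, kept, "a")
prev_b = prevalence(labels, groups, kept, "b")
print(len(kept), prev_a, prev_b)
```

Group "a" loses four positive rows while group "b" is left untouched, so both end at the same prevalence.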

To calculate fairness metrics, aequitas provides the values of confusion matrix metrics for each possible value of the sensitive attribute columns. The cells of the confusion matrix are:

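| Cell | Symbol | Description |
|---|---|---|
| False Positive | $FP$ | The number of entities of the group with a positive decision ($\hat{Y}=1$) and a negative label ($Y=0$). |
| False Negative | $FN$ | The number of entities of the group with a negative decision ($\hat{Y}=0$) and a positive label ($Y=1$). |
| True Positive | $TP$ | The number of entities of the group with a positive decision ($\hat{Y}=1$) and a positive label ($Y=1$). |
| True Negative | $TN$ | The number of entities of the group with a negative decision ($\hat{Y}=0$) and a negative label ($Y=0$). |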
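The four cells can be counted per group with a few lines of plain Python. This is a sketch for illustration, not the package's Group class; the `confusion_cells` helper and the toy data are hypothetical, with `labels` holding the true labels and `decisions` the binary model decisions.

```python
from collections import Counter

# Map (label, decision) pairs to confusion matrix cells.
CELL_NAMES = {(1, 1): "TP", (0, 1): "FP", (1, 0): "FN", (0, 0): "TN"}


def confusion_cells(labels, decisions, groups):
    """Count TP, FP, FN and TN separately for every group value."""
    cells = {}
    for label, decision, group in zip(labels, decisions, groups):
        cells.setdefault(group, Counter())[CELL_NAMES[(label, decision)]] += 1
    return cells


# Hypothetical data for one sensitive attribute with values "A" and "B".
labels = [1, 0, 1, 0, 0, 0, 1, 1]
decisions = [1, 1, 0, 0, 1, 1, 1, 0]
groups = ["A", "A", "A", "A", "B", "B", "B", "B"]

cells = confusion_cells(labels, decisions, groups)
print(cells)
```

A Counter returns 0 for cells that never occur, so `cells["B"]["TN"]` is safe to read even though group "B" has no true negatives.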

From these, we calculate several metrics:

| Metric | Formula | Description |
|---|---|---|
| Accuracy | $Acc = \frac{TP + TN}{TP + TN + FP + FN}$ | The fraction of correctly predicted entities within the group. |
| True Positive Rate | $TPR = \frac{TP}{TP + FN}$ | The fraction of true positives within the label positive entities of a group. |
| True Negative Rate | $TNR = \frac{TN}{TN + FP}$ | The fraction of true negatives within the label negative entities of a group. |
| False Negative Rate | $FNR = \frac{FN}{TP + FN}$ | The fraction of false negatives within the label positive entities of a group. |
| False Positive Rate | $FPR = \frac{FP}{TN + FP}$ | The fraction of false positives within the label negative entities of a group. |
| Precision | $Precision = \frac{TP}{TP + FP}$ | The fraction of true positives within the predicted positive entities of a group. |
| Negative Predictive Value | $NPV = \frac{TN}{TN + FN}$ | The fraction of true negatives within the predicted negative entities of a group. |
| False Discovery Rate | $FDR = \frac{FP}{TP + FP}$ | The fraction of false positives within the predicted positive entities of a group. |
| False Omission Rate | $FOR = \frac{FN}{TN + FN}$ | The fraction of false negatives within the predicted negative entities of a group. |
| Predicted Positive | $PP = TP + FP$ | The number of entities within a group where the decision is positive, i.e., $\hat{Y}=1$. |
| Total Predicted Positive | $K = \sum_{g} PP_{g}$ | The total number of entities predicted positive across the groups defined by a sensitive attribute. |
| Predicted Negative | $PN = TN + FN$ | The number of entities within a group where the decision is negative, i.e., $\hat{Y}=0$. |
| Predicted Prevalence | $Pprev = \frac{PP}{TP + FP + TN + FN}$ | The fraction of entities within a group which were predicted as positive. |
| Predicted Positive Rate | $PPR = \frac{PP}{K}$ | The fraction of the entities predicted as positive that belong to a certain group. |

These are implemented in the Group class. With the Bias class, several fairness metrics can be derived by different combinations of ratios of these metrics.
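As a sketch of how ratios of group metrics can express disparity, the example below computes a few metrics from confusion matrix counts and divides each group's value by that of a reference group. This is an illustration under assumptions, not the Group or Bias class API: the helper names, the choice of reference group, and the counts are hypothetical, and zero denominators are not handled.

```python
def group_metrics(tp, fp, fn, tn):
    """Compute a few group-level metrics from confusion matrix counts."""
    total = tp + fp + fn + tn
    return {
        "tpr": tp / (tp + fn),
        "fpr": fp / (fp + tn),
        "precision": tp / (tp + fp),
        "pprev": (tp + fp) / total,
    }


def disparities(metrics_by_group, reference_group):
    """Divide every group's metrics by those of the reference group."""
    reference = metrics_by_group[reference_group]
    return {
        group: {name: value / reference[name] for name, value in metrics.items()}
        for group, metrics in metrics_by_group.items()
    }


# Hypothetical confusion matrix counts for two groups.
metrics_by_group = {
    "A": group_metrics(tp=40, fp=10, fn=10, tn=40),
    "B": group_metrics(tp=30, fp=20, fn=20, tn=30),
}

ratios = disparities(metrics_by_group, reference_group="A")
print(ratios["B"])
```

A ratio of 1 means parity with the reference group. In this toy data, group "B" has twice the false positive rate of group "A" while both groups have the same predicted prevalence.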

You can find the toolkit documentation here.

For more examples of the Python library and a deep dive into concepts of fairness in ML, see our Tutorial presented at KDD and AAAI. Also visit the Aequitas project website.

If you use Aequitas in a scientific publication, we would appreciate citations to the following paper:

Pedro Saleiro, Benedict Kuester, Abby Stevens, Ari Anisfeld, Loren Hinkson, Jesse London, Rayid Ghani, Aequitas: A Bias and Fairness Audit Toolkit, arXiv preprint arXiv:1811.05577 (2018). (PDF)

    @article{2018aequitas,
      title={Aequitas: A Bias and Fairness Audit Toolkit},
      author={Saleiro, Pedro and Kuester, Benedict and Stevens, Abby and Anisfeld, Ari and Hinkson, Loren and London, Jesse and Ghani, Rayid},
      journal={arXiv preprint arXiv:1811.05577},
      year={2018}
    }